Introduction

Natural language processing (NLP) is a field of artificial intelligence research and application that focuses on understanding and deciphering writings and generating human-like text.

1 In recent years there has been a leap in the capability of natural language processing models: particularly their ability to regularly generate text which humans struggle to identify as machine written.

2 Understandably, concerns have been raised around the societal impact of NLP, such as its role in generating fake news, spreading disinformation, or causing economic disruption.

3,4 Concerns have also been raised around the impact these models may have on education and academic integrity. For example, the New York Times recently reported that NLP may pose challenges to educators attempting to prevent plagiarism and cheating.

5 Such a possibility has direct implications on academic integrity and must be met directly by educators.

While policies on the use of artificial intelligence are likely best left to individual institutions, there is a clear issue with an NLP model being used to generate text which may be used as a response to an assignment at the instructor level. Given that NLP models are now generating code, and even solving word-based mathematics problems, these concerns extend to engineering curricula. Presently, there are no universally accepted definitions of plagiarism.

6 Post-secondary institutions’ definitions of plagiarism are typically explained in academic integrity policy documents, but there is little consistency across universities as to how they define plagiarism.

7 However, many educators will likely consider it cheating for students to employ such a technology to generate responses and solutions on various assignments. More specifically, students using these tools would get credit for work they did not do.

7 In such cases, it would be unlikely that traditional plagiarism checkers could be used to detect this form of cheating since the NLP model generates an AI-generated response that is unique (rather than copying an existing response). As such, an investigation of the technology, and its capability, is necessary to identify how it may affect engineering education and academic integrity.

In this paper, the authors explore the impact of NLP on teaching and learning in undergraduate mechanical engineering programs. We begin with an overview of the internal workings NLP technology, followed by an examination of current NLP models of interest. Next, we investigate the literature surrounding the practical tasks where the technology has been applied. This is followed by a set of experiments to assess how NLP models may be used by students in mechanical engineering laboratory and computing courses. We conclude with a discussion of the implications of NLP technologies on undergraduate mechanical engineering education.

Current models and their capabilities

To appreciate the potential impact of extant NLP models on engineering education, it is important to gain an understanding of their capabilities. This section provides a basic overview of these models. Wherever possible, we also provide reference to the industry-standard GLUE and SuperGLUE (General Language Understanding Evaluation) benchmark evaluation datasets as a basis of comparison.

1,9First introduced in 2019, Google's Bidirectional Encoder Representations from Transformers (BERT) model

10 was intended to address the limitations of extant standard language models that were hindered by their unidirectional nature. GPT, for example, used a left to right architecture, meaning that each token (i.e., numerical representation of a word) could only attend to previous tokens in the layers of the transformer. In contrast, BERT alleviated that constraint by employing a masked language model which could consider tokens on the left and the right context. They believed this strategy would lead to a more efficient NLP model.

BERT established state-of-the-art performance on eleven NLP tasks, including outperforming GPT on the GLUE benchmark. Furthermore, it did this with a training set of 340 million parameters that were then fine-tuned for the specific benchmark task it was given. This was equivalent to GPT at the time and demonstrated that a bi-directional approach could be more efficient.

This approach was refined in 2020 with the introduction of the ELECTRA model

11 using a new strategy called replaced token detection. Like BERT, this is a masked language model that uses context from the left and right of a sentence. However, after pre-training, a second neural network, called a “discriminator,” is fine-tuned on the data. The authors

11 argued that this approach was more efficient because the pre-training task was defined over all input tokens rather than just those which were replaced.

Results from ELECTRA on GLUE suggest that the approach was another success in the NLP field. ELECTRA, while not achieving state-of-the-art performance, was able to outperform BERT and GPT on GLUE, while requiring significantly less training resources. As well, ELECTRA has been demonstrated to be an effective zero shot learner and could outperform BERT on many of these benchmark tasks.

12While the development of BERT and ELECTRA demonstrate that NLP models can increase in efficacy using innovative new pre-training methods and architectures, development on GPT-3 showed that increasing the size of pre-training data (i.e., to 175 billion parameters) could dramatically improve performance.

2 This was unprecedented in 2020, and GPT-3 demonstrated excellent performance on multiple data sets including SuperGLUE. In some cases, GPT-3 outperformed even fine-tuned models based on BERT. However, this required few-shot learning with the model. Depending on the task it was given, GPT-3 could achieve incredible performance with just a few in-context examples, demonstrating the performance benefits of a massive training set.

An early example of this success was a demonstration of GPT-3's ability to write short news articles that human evaluators could not accurately discern as AI-written.

2 This naturally concerned many, as it demonstrated that NLP models could easily generate human like text without fine-tuning, and it also made GPT-3 far more useful to individuals not specialised in NLP. Since then, many others have gone on to demonstrate the remarkable writing capability of GPT-3.

3While reasonable performance on typical language processing tasks was expected from GPT-3, researchers were surprised to see the model generated snippets of code.

5 This resulted from the large training set which inadvertently included code. This led OpenAI to develop Codex,

13 a GPT-3 model trained on Python code available from GitHub. Codex can now write basic Python code from natural language prompts, and the model can succeed at astonishing rates. Although the model cannot outperform humans on complex coding tasks, the researchers note that Codex “displayed strong performance on a dataset of human-written problems comparable to easy interview problems.”

13In the spring of 2020, Google released the Pathways Language Model (PALM)

14: another transformer model built using a training data set consisting of 540 billion parameters. With few-shot learning, this model achieved state-of-the-art performance on 28 of 29 benchmark tasks, further reinforcing the observation that large language models can achieve exceptional performance with few-shot learning because of their training data size. The performance increases from increased model size have yet to plateau, and so we may expect that if researchers increase the size of training data the performance of NLP models will continue to increase.

14While many more NLP models exist such as older variants of GPT-3 like the previously mentioned GPT and its successor GPT-2, or variants of BERT like RoBERTa, the models presented here demonstrate two important trends. First, innovative approaches in architecture and training data, like those applied in BERT and ELECTRA, may lead to more efficient models. Second, continuing to increase the training data set size will likely continue to produce more effective models. These trends likely mean that we will continue to see advancements in the field of NLP for years to come.

Practical applications of natural language processing

Although the models presented in the previous section are impressive, the various benchmark tests presented in the papers do little to demonstrate the practical application of the models. In some cases, the results from the data sets are not applicable to real-life tasks of meaningful complexity. This issue was explored by van der Lee et al.

9 when they sought to examine the best practices for humans evaluating machine-generated text. One example was the bilingual evaluation understudy (BLEU) metric, which is used to measure the quality of a translation between two languages by a machine. While a translation may score low on the BLEU metric, because it does not match a reference translation for a dataset, it may still be a valid translation. Similarly, the researchers’ tasks of a benchmark test may not be as useful in real-world applications. Based on this, they recommended that more human evaluation of machine-generated text is necessary for evaluating the efficacy of models and listed several recommendations for conducting such evaluation.

Thus, in examining the technical capabilities of NLP models, it is necessary to examine some of the novel ways in which they are applied. This is not to state that benchmark tests are useless, as it is impractical to rely solely on human examination of NLP models and benchmark tests are an excellent way to reliably compare models with consistent metrics. To understand the implications that these models may have it is necessary to examine the manner in which they may be used to complete tasks more akin to those seen in a classroom.

Wu et al.

15 at OpenAI developed an approach for generating summaries of novels using a fine-tuned model based on GPT-3. Their approach involved breaking down a novel into smaller sections and writing summaries of those smaller sections. This process continues until an overall summary is generated and then evaluated. The results were evaluated by humans, with 5% of summaries receiving a score of 6/7, and 15% receiving a score of 5/7 with the best produced model. Overall, many of the machine generated summaries were worse than those generated by humans, but the results are promising for NLP models completing complex tasks like this.

Crossley et al.

16 applied NLP technology to evaluate summaries of various texts written by students. They applied four different openly available NLP models, and through their own modelling of the evaluation of results from these NLP models were able to categorise summaries as either high or low quality. Their approach successfully determined the quality of a summary 80% of the time and determined the level of education of the text at about the same frequency. While this contribution does not represent a new model, it does show an interesting way in which educators might apply NLP, and students may even vet copied text for quality.

The previous two applications required large amounts of human input, which is not always readily available. Imran et al.

17 examined this challenge of sentiment analysis from student reviews of classroom teachings and professors. They developed an NLP model based on a smaller number of student reviews, which would then generate many student reviews, which were more balanced between positive and negative. Using this data, they generated another model focused on sentiment analysis, and showed that the approach improved accuracy. It is noteworthy that NLP generated prompts can be used to train more NLP models.

A unique use of NLP was displayed by Demirci et al.,

18 who applied GPT-2 to detect static malware. Their approach involved fine-tuning GPT-2 and Stacked BiLSTM, another pre-trained NLP model, to find malicious code. Their GPT-2 model performed exceptionally well, detecting malicious code 85.4% of the time. While this example may not apply directly to education outside of computer science, it does demonstrate the capability of these models once fine-tuning has been applied.

Perhaps the largest concern and obvious application of NLP models is the generation of human-like text. Salminen et al.

19 examined this issue in regards to fake reviews of online products. Using GPT-2 as a base, they created a fine-tuned model to generate fake reviews of various products. Furthermore, they fine-tuned GPT-2 and RoBERTa to detect fake reviews. The results were particularly interesting when compared to human evaluations. Humans only detected fake reviews from the model around 55% of the time (i.e., a little better than chance). The GPT-2 and RoBERTa models, in contrast, detected fake reviews with 96% and 83% accuracy, respectively. Their work once again demonstrates the importance of fine-tuning models, but provides further evidence that AI generated text is able to evade detection by human readers.

The issue of fake reviews is also of concern for the tourism industry, and Tuomi

20 has conducted preliminary research into the issue, though his results are limited. The work he collected thus far once again suggests that humans struggle to detect AI-generated text.

The results from the work of Salminen et al.

19 reinforces that of Brown et al.,

2 in that the short text generations from NLP models are difficult to detect by humans. However, Brown et al. note that the longer the text generations are more easily detected by humans. Thus, there remains a reasonable doubt of how effective NLP models would be at more academic tasks seen by students.

Kobis and Mossink

21 provide some insight into this with their work on AI generated poetry. Their approach involved fine-tuning GPT-2 to write poetry. This model would be given two starting lines, and from there it would generate a full-length poem. With this set of poems, they completed two Turing tests. In the first, they had amateur poets write poems with the same two starting lines as the machine-generated poems, while in the second they used professionally written poems, like those of Maya Angelou, to compare with, again with the first two lines. The findings were of significant interest. In the first set of Turing tests, human judges detected the correct origin of the poem 50.21% of the time, making it effectively chance. When asked of their confidence in determining the origin of the poem, however, human judges had an average confidence level of 62.27 on a scale from 0 to 100. Humans were not only poor at detecting the origin of the poem, but as well were consistently confident in their selection. These results changed once the model was placed against professional poets, where human judges showed a preference towards the human written poems and detected the correct origin beyond chance levels.

Presently, the applications of NLP models have largely focused on detection or text generation of some form. However, there has been a desire to apply the technology towards mathematics. Cobbe et al.

22 focused on this problem in 2021 at OpenAI. First, the collected and presented a dataset called GSM8 K, which consists of slightly over 8000 grade school mathematics problems. This is noteworthy, as it has become a frequently tested upon dataset. Until this point, NLP models had struggled with mathematics, as they were prone to small individual mistakes. The approach that Cobbe et al.

22 took was to apply verifiers. They used a fine-tuned model based on GPT-3 parameters to generate solutions and then had a verifier find the most frequent solution and elect this as the correct answer. The verifier approach (pre-trained with six billion parameters) performed as well as a fine-tuned model based on GPT-3 with 175 billion parameters, showing their approach was equivalent to increasing the pre-training data by a factor of 30. Unsurprisingly, their highest success rate, about 55%, was achieved by using fine-tuned generators and verifiers based on GPT-3's 175 billion parameter models.

Wei et al.

23 took a different approach to the challenge of solving mathematics problems, in particular word-based ones. Beginning with PaLM as their base model, they provided chain of thought prompting in the form of few and single-shot learning and achieved a remarkable success rate of 57% on GSM8 K. Their approach made use of the few shot learning capability that large language models exhibit, and their prompt simply walked through the problem solving process with the model the same way in which an educator would do so with a student. These results suggest that using prompts in this manner can elicit reasoning in a large language model.

Lewkowycz et al.

24 developed a model called Minerva, which was another fine-tuned model built from PaLM, trained on a high-quality data set consisting of numerous mathematics examples. Furthermore, Minerva used its own internal calculator. The fine-tuning process once again demonstrated exceptional performance, achieving a success rate of 78.5% on GSM8 K, establishing the current state of the art. Furthermore, it reinforced the importance of fine-tuning models to achieve exceptional, but select, performance.

As noted previously, the discovery that GPT-3 could generate simple programs from Python docstrings led to the development of a specialised GPT model, called Codex, that focuses on a variety of coding tasks.

13 In their release paper on Codex, OpenAI note that Codex currently generates the ‘right’ code in 37% of use cases.

13 Although Codex can generate correct code in many cases, clearly it requires close supervision on the part of the user. The success of the Codex project led to the development of CoPilot, a code completion module embedded in Microsoft Visual Studio.

25A similar approach was followed by Google with PaLM with its “PaLM Coder” model.

14 Given the larger size of PaLM's training set, it has been shown to outperform Codex on multiple coding datasets.

The applications of NLP are growing rapidly and span a wide range of application domains as well as a wide range of processing tasks. As well, these tools are becoming much for accessible. For example, in November 2021, OpenAI removed the wait list for its GPT-3 large language processing API, allowing the public to explore its capabilities and integrate the model into their app or service.

26 The API allows users to generate sequences of words starting from a source input called a prompt. The resulting completion is text that is statistically a good fit given the starting text (prompt). GPT-3's natural language processing capabilities are “shockingly good”

27 – as Floridi and Chiriatti

28 note, signal “the arrival of a new age in which we can now mass produce good and cheap semantic artifacts”.

There are clearly two main avenues in which NLP can continue to improve in capability. The first of these is to continue applying innovative approaches to how transformer models work, as was done in the work on BERT and ELECTRA. The second, and more straightforward manner, is to simply increase the size of pre-training data. This was clearly demonstrated in the work on PaLM, where the authors directly note that “scaling improvements from large [Language Models] have neither plateaued nor reached their saturation point.”

14 All researchers must do to increase the efficacy of a model is to give it more pre-training data. Thus, we can, and should, expect that NLP technologies will continue to improve in capability with time.

It follows that it is only a matter of time before we see NLP tools of this type enter our students’ educational toolkit. In the next section, we provide two examples of how this could be accomplished with OpenAI's GPT-3 and Codex models.

Two tests with OpenAI

In this section, we focus on two key writing activities performed by undergraduate mechanical engineering students: written material for laboratory reports, and industrial computer programming. The purpose of performing these tests is to explore the feasibility of a student completing an assignment using an NLP tool. If the task can be accomplished with little effort (i.e., no or little fine tuning) and with human-like output, then it may be conceivable for a student to use NLP tools in a similar way to complete an assignment. Thus, the tests will provide insight on whether NLP tools pose a possible threat to academic integrity in the undergraduate mechanical engineering education context.

For each test, the OpenAI API is provided with a short written prompt that is interpreted by the OpenAI execution engine to generate a completion. The execution engine determines the language model used by GPT-3 to perform the natural language processing task: we use text-davinci-003 for written material and code-davinci-001 for code generation. The GPT-3 response (i.e., completion) is affected by both its input (i.e., prompt) and the execution engine parameters. These parameters allow the user to control various factors such as the length of the response, the “creativity” of the response, and the consistency of responses (when multiple tests are performed).

Two key GPT-3 parameters are “temperature” and “Top P”. To understand how these two parameters work together it is important to note that NLP models are trained to predict the probability of the next word based on the input prompt.

29 If the model always samples the most likely next word, it runs the risk of getting stuck in loops. To get around this problem, sampling methods are based on sampling from a distribution; however, this also creates a problem, since sampling from a distribution can cause the model to go off topic (i.e., when it samples from the tail of the distribution).

When combined, the temperature and Top P variables allow the user to tune the model to generate sequences of arbitrary length (i.e., prevent it from getting stuck in loops) while staying on topic. Temperature,

T, is inspired by statistical thermodynamics: at lower temperatures the model is increasingly confident of its top choices, while at higher temperatures the model moves towards uniform sampling. Mathematically, the sampling probabilities are obtained by dividing a logits function by

T before feeding them into softmax function.

30 Top P,

p, controls how much of the tail of the probability function is removed. Mathematically, the cumulative distribution is computed and cut off as soon as the cumulative distribution function (CDF) exceeds

p.30From the user's perspective, these two parameters combine to influence the “creativity” of the response: i.e.,

T controls the randomness of the response, while

p controls the scope of the randomness. For our experiments, we set Top P at its maximum value of 1 to allow GPT-3 to explore the entire spectrum of responses and control the randomness using the temperature dial.

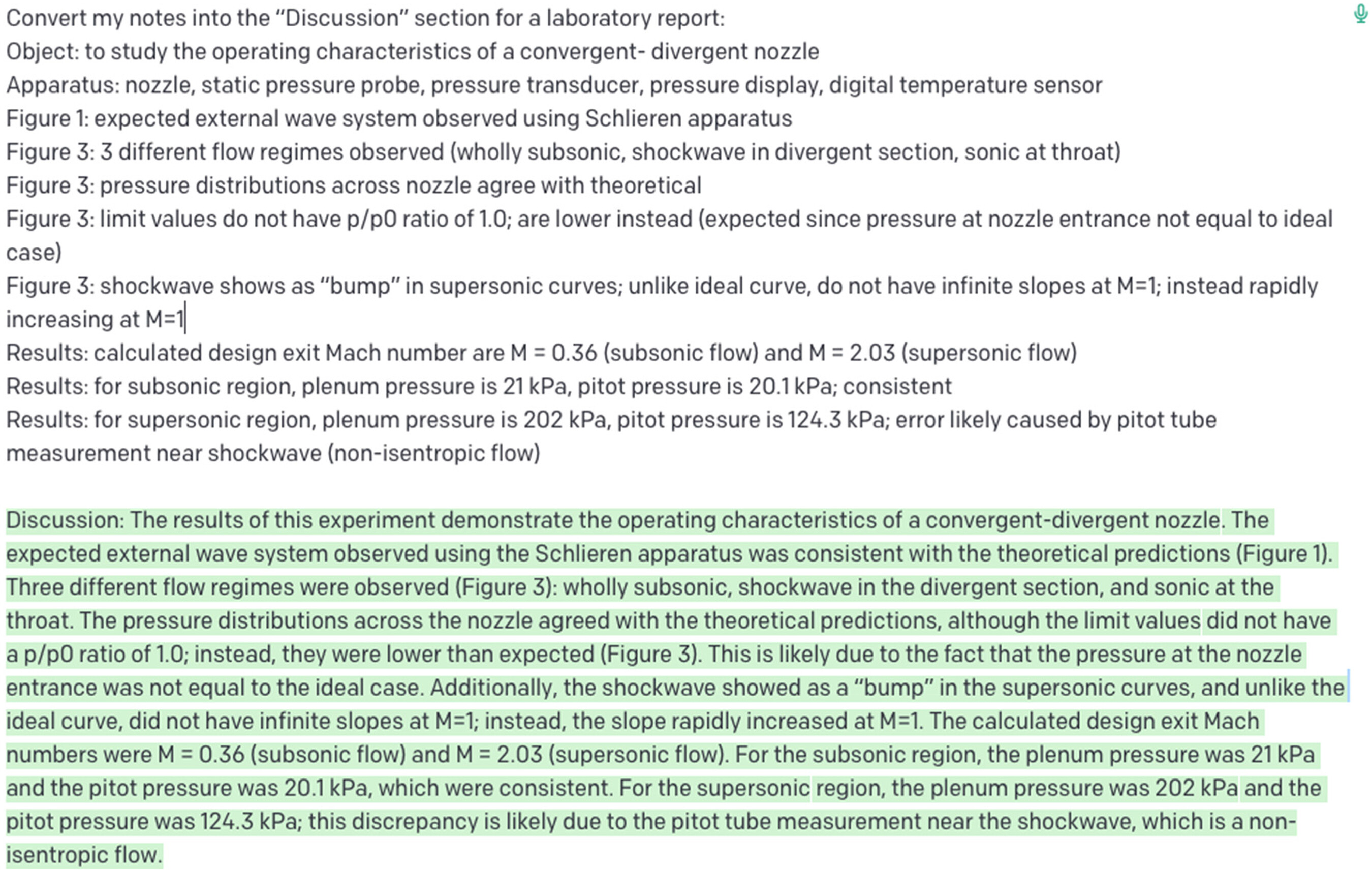

31Writing

Mechanical engineering undergraduate students are engaged in a wide range of writing activities during their program. This example involves producing written text from laboratory notes taken in a senior fluids course. In this case, the student has made point-form notes during the laboratory session that include comments on the experimental results. The objective is to produce a written “Discussion” section based on the point-form notes.

To produce the text, we used OpenAI's “Playground” API with the GPT-3 text-davinci-003 engine (

T = 0,

p = 1). The prompt is shown in text at the top of

Figure 1: it includes the phrase “Convert my notes into the ‘Discussion’ section for a laboratory report:” followed by a transcription of the student's point-form notes that were taken during the laboratory. The GPT-3 completion is shown highlighted in green at the bottom of

Figure 1.

The prompt is a very straight forward example of zero-shot text generation. In this case, the student simply asks GPT-3 to convert their notes into a “Discussion” section (i.e., the first sentence), then includes the point-form notes from the laboratory. No training examples are included in the prompt.

From a writing perspective, this completion can be assessed from the perspective of surface errors (i.e., grammar, spelling, syntax) and global errors (i.e., readability, comprehension).

32 The resulting completion contains nine sentences with an average of 23 words per sentence that are free of surface errors. The resulting completion also scores well in terms of readability based on Flesch-Kincaid readability tests,

33 which calculate the ease with which a reader can comprehend a passage of text based on number of syllables per word and number of words per sentences. The text generated is as at a Flesch Reading Ease of 43 (i.e., “university” level of difficulty). One can also view the completion in terms of the genre of writing (i.e., writing an undergraduate engineering laboratory report). Based on the authors’ experience with undergraduate engineering laboratory reports, the GPT-3 completion would not look out of place in an undergraduate laboratory report and could be inserted directly into the laboratory report in its current form or an edited form. While this completion required the technical content to be prepared by the student (i.e., the laboratory notes included in the prompt), GPT-3 has saved the student the task of producing the expository text.

To determine the cognitive level of this learning activity, it is important to view the learning activity in the context of the intended learning outcome.

34 For example, if the intended learning outcome is to simply “summarise” the notes into text that is free of surface and global errors, this learning activity can be viewed at the “comprehension” level of Bloom's taxonomy.

35 As noted previously, GPT-3 appears to be capable of this. However, it remains to be seen whether GPT-3 can produce writing that demonstrates higher-level functions such as analysis, synthesis, and evaluation. For example, if the intended learning outcome is to make conclusions about a lab experiment from the point form notes or combine the evidence together in a meaningful way, we cannot claim that GPT-3 has this capability. The implication here is that engineering students may be able to use NLP tools for simple tasks only, or as a “starting point” with significant editing for more complex writing.

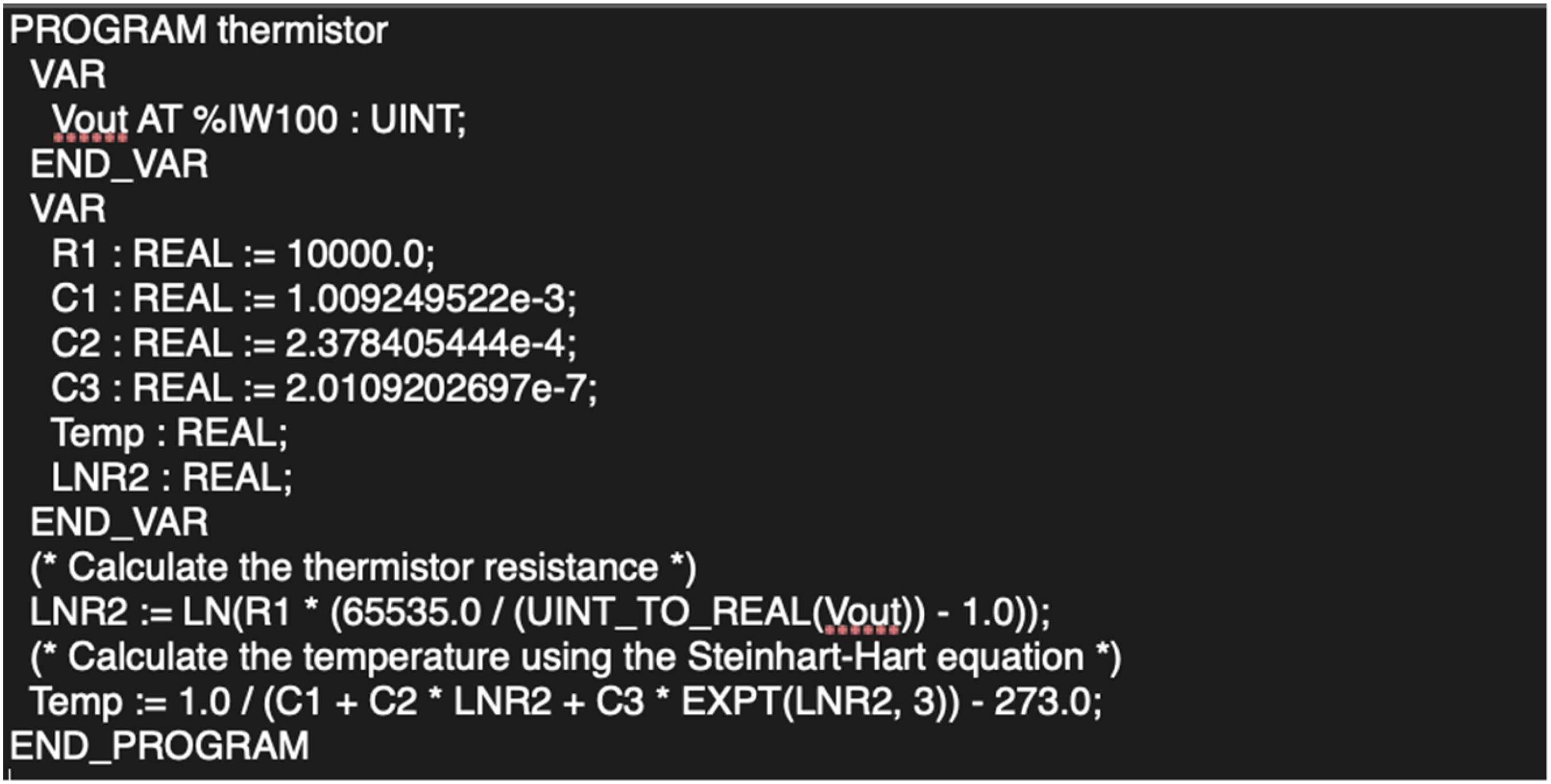

Programming

For the next set of tests, we focus on a laboratory exercise from a second-year undergraduate course in automation and controls taught by the authors. For this exercise, students were required to create a program to interface a thermistor to a programmable logic controller (PLC) using IEC 61131-3.

36 The exercise was conducted in a laboratory setting using the OpenPLC software platform

37 with the Arduino Uno R3 hardware platform.

38Thermistors and resistance temperature devices (RTDs) are basically variable resistors that change resistance with temperature. Given that the Arduino Uno R3 utilises analogue voltage inputs, students needed to recognise that the varying resistance must first be converted to a voltage using a simple voltage divider circuit. The thermistor resistance could then be determined by solving the voltage divider transfer function,

where

Rth is the thermistor resistance,

R1 is the series resistor (roughly equal to the maximum thermistor resistance), and

Vin and

Vout are the circuit's input and output voltages respectively.

Once

Vout is acquired and converted to the thermistor resistance using equation (

1), code had to be written to convert the resistance to a temperature in degrees Celsius (

oC) using the Steinhart-Hart equation.

39 The authors’ solution in IEC 61131-3 ST (structured text) notation is shown in

Figure 2. For this implementation

Vin = 5 V and

R1 = 10 kΩ. OpenPLC uses a 16-bit convention for analog I/O.

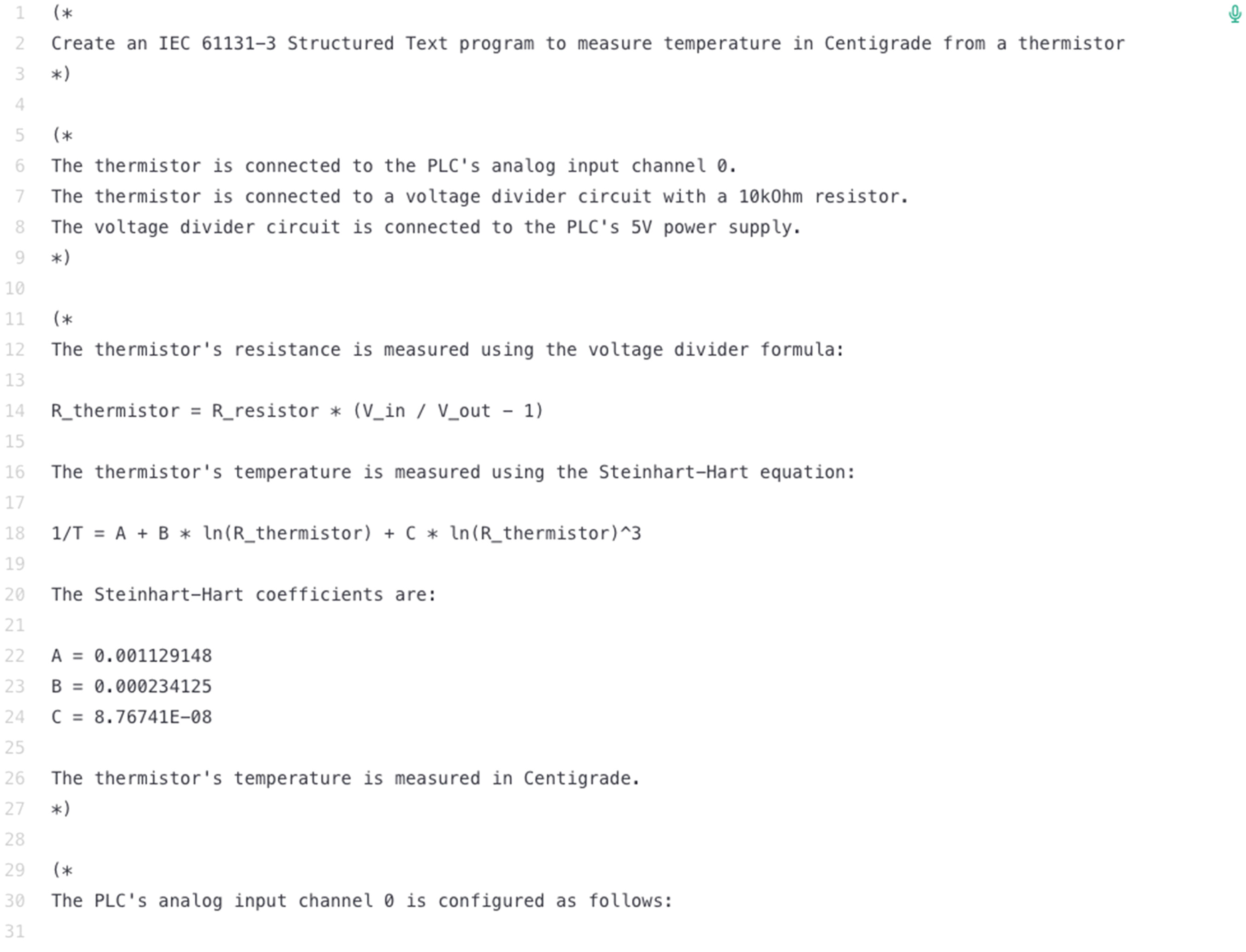

For the Codex tests, we chose to focus on ST given that Codex generates text-based completions. The prompt and completion for the thermistor laboratory problem are shown in

Figure 3.

The Codex prompt, shown at the top of

Figure 3 (lines 1–3), is in the form of an ST comment (i.e., comments in ST are enclosed by “(*” and “*)”). As can be seen from

Figure 3, the completion (lines 5–30) was unsuccessful in this case. Rather than generating ST code, Codex generated additional comments. However, it is interesting to note that the comments show that Codex recognises that the voltage divider transfer function must be solved (lines 12–14) and that the Steinhart-Hart equation is required (lines 16–25) even though it cannot generate the required ST code. Given that Codex is trained on GitHub, it is likely that there are insufficient IEC 61131-3 examples to provide successful completions in this set of languages. However, when prompted to generate an Arduino program, Codex generates a successful completion in C as shown in

Figure 4. With this solution, Codex recognises both the need to solve the voltage divider transfer function and the need to use the Steinhart-Hart equation.

Comparing the Codex implementation to the authors’ implementation, both successfully convert the thermistor input to a temperature reading in Celsius. The main difference between the solutions is in the representation of the analog input, Vout, to the Artuino: i.e., the authors’ use a 16-bit word while Codex uses a 10-bit word. Given that the Codex prompt specified an Arduino program, the 10-bit word is correct since it is consistent with the Arduino's 10-bit analog-to-digital converter. Our solution uses a 16-bit word for consistency with the OpenPLC analog I/O convention. The two implementations also differ in the choice of task interval; however, this is an implementation detail that could be updated at runtime.

At this stage, the student could choose to translate the C program (Arduino solution) to IEC 61131-3 ST. Arguably, this would be a straight-forward task given the close correspondence between each language's syntax. However, this may not always be the case: especially when the solution requires an understanding of the real-time behaviour of the automation system.

Given that GPT-3 can be trained for specific use cases,

40 it should be possible to generate an ST completion directly. The trade-off, however, will be between training effort and translation effort. A very large training set (i.e., “fine-tuning”) would result in a model that would perform as well with ST as it does with Python and C. However, it is highly unlikely that this approach would be used by students to solve the thermistor laboratory problem. A more reasonable approach would be to provide GPT-3 with a small number of ST examples (i.e., one-shot or few-shot setting) that it could use to understand the syntax for the specific use case.

To test this approach, we began with a simple “statistics” example that includes key elements of the IEC 61131-3 ST syntax for this problem. More specifically, the prompt shown in

Figure 5 includes information on the specification of variables, the scaling of inputs, type conversions, and mathematical operations.

The resulting successful completion is shown in

Figure 6. This example illustrates the power of the GPT-3 Codex model: with only a single example, it can build on its built-in training set to infer a solution for this very specialised use case. This does not mean that a solution to any IEC 61131-3 problem can be achieved with a one-shot setting; however, by carefully selecting related examples, a solution can be obtained.

Conclusions

Given the rapid advances in NLP models, it is likely – if not inevitable – that engineering educators will encounter this technology in the classroom. Regardless of whether this will take the form of an assistive technology (e.g., to support diverse learners with access concerns) or a disruptive technology (e.g., to enable cheating behaviours), it will need to be addressed directly by faculty and their institutions. Our investigations into the current state of NLP have shown that extant models are capable of producing results for general natural language (i.e., free of surface and global errors) and coding tasks (i.e., at the level of easy interview problems

13) but require additional context-specific training to be applied to typical undergraduate mechanical engineering problems. For example, OpenAI is quite capable of converting laboratory notes to written text but requires additional training to generate code for an industrial automation system.

From the student perspective, NLP technology is currently very accessible, both as a stand-alone API (e.g., the OpenAI Playground

41 and ChatGPT

42) and as a plug-in to existing engineering tools (e.g., the VisualStudio CoPilot plug-in

25). For learners who struggle with surface errors, such as those with learning disabilities or second language learners, this tool might reduce barriers that impact their ability to express their technical knowledge. However, the main barrier lies in the effort required to provide these models with the necessary context-specific training to make them applicable to undergraduate mechanical engineering problems. Based on our review of the literature, there are two paths to achieve this. The first, fine-tuning, can clearly achieve excellent results as Kobis and Mossink demonstrate with their AI generated poetry using a fine-tuned model based on GPT-2.

21 The obvious barrier for students is that a proper fine-tuned model requires thousands of examples, and thus, is labour intensive. In this way, NLP tools need fine-tuning to adapt the specific contexts and genre requirements of discipline-specific tasks.

We believe it is unlikely that students would employ such a strategy on their own. The amount of work required to create a fine-tuned model is well beyond that of a typical assignment or paper. Where it may become of some concern is in contract cheating, a scenario in which students pay for someone else to complete their assigned task and submit it as their own.

43 Should an individual or organisation create a fine-tuned model capable of completing select assignments, students may readily use it to complete their work. Given the high efficacy, but highly specialised nature, of these models they would likely only find use in niche situations, thus necessitating the development of many different fine-tuned models to cover a wider range of tasks.

The second way NLP responses may be improved to a more useful form, one- or few-shot setting, was demonstrated in the previous section. The results from GPT-3

2 and from PaLM

15 support the use of few-shot learning to increase the performance of models on datasets. Literature surrounding the use of this method for practical applications, however, is limited at best.

Conceivably, students could use an NLP platform (e.g., OpenAI's GPT-3) and quickly generate a useful response to a question with few-shot learning. They would require similar questions and responses, which could be gathered from former students, textbooks, or online sources, and be given as input to a model. The model could then conceivably generate a useful response. Such a methodology could be easily deployed by students as demonstrated by the previous industrial automation system coding example using Codex. In their present state, NLP models do not appear to pose a significant threat to academic integrity in the engineering classroom given the extra work required to move beyond zero-shot learning. Since NLP platforms rely on the existing training and data, they do have the potential to challenge instructors in their assessment designs. Repeated use of the same assignments and structures increase the likelihood of the NLP platforms creating reasonable responses. Unfortunately, it is not possible to “design out” cheating by changing assessments; however, Bretag et al.

44 showed that some assessment types are less susceptible to academic misconduct. Specifically, authentic assessments such as those requiring demos, oral exams, or practical examinations may be less susceptible to breaches of academic integrity, but there is no such thing as a cheat-proof assessment.

44 For engineering educators, the key will be understanding the capabilities of NLP as this technology advances, and applying this knowledge to our design and application of intended learning outcomes, learning activities, and assessments.