Enhancing Clinical Trial Selection for Cancer Patients Using Large Language Models

Abstract

Purpose:

Identifying appropriate clinical trials for cancer patients with specific gene mutations remains a significant challenge, largely due to limitations in current search tools like ClinicalTrials.gov, which at times return irrelevant or misleading results. This diagnostic accuracy study investigates the efficacy of 2 large language models (LLMs), GPT-4.0 and Gemini 2.0, in evaluating the eligibility of patients with specific cancer-related gene mutations for clinical trials.

Methods:

The study prompts GPT 4.0 and Gemini 2.0 with trial details from ClinicalTrials.gov and a particular cancer mutation. We then assess model performance against physician-curated benchmarks across 6 gene mutations (ALK, BRAF, EGFR, ERBB2, KIT, and KRAS).

Results:

The results demonstrate good F1-scores for both LLMs—averaging 64% for GPT-4.0 and 70% for Gemini 2.0—highlighting their potential to streamline clinical trial matching. Furthermore, decision trees provided interpretability by identifying key textual indicators that LLMs use.

Conclusion:

This work demonstrates the feasibility of using proprietary LLMs such as GPT-4.0 and Gemini 2.0 “off the shelf,” without LLM fine-tuning and with limited patient information, to evaluate clinical trial eligibility.

Introduction

Since their advent in 2018,1 large language models (LLMs) have significantly impacted various domains, including medicine, education,2 and industry. In the medical field, LLMs have demonstrated their utility in answering patient inquiries and assisting in medical education. They are well-positioned to assist researchers in analyzing text-based data, such as clinician notes.1 This study explores the efficacy of 2 prominent LLMs, Gemini 2.0 and GPT-4.0, in determining the appropriateness of clinical trials for cancer patients with specific gene mutations.

Clinical trials are essential for advancing cancer treatment; however, identifying suitable trials remains a challenge. The primary source of clinical trial data, ClinicalTrials.gov, is widely used, receiving over 2 million page views per month and 90 000 unique visitors daily as of December 2024.3 However, the platform has limitations in presenting clinical studies, particularly for cancer patients.

The search functionality is largely text-based and non-specific, often retrieving extraneous or irrelevant results due to ambiguous terminology and exclusion criteria within trial descriptions. For instance, a search for “EGFR mutation” may retrieve trials related to “eGFR” (estimated glomerular filtration rate), a measure of kidney function, due to the text-matching approach. Similarly, searching for “KRAS mutation” may return a study that explicitly excludes patients with KRAS mutations, as the trial description states that only those with a “wild-type K-Ras gene” are eligible. Such limitations necessitate the development of improved filtering mechanisms to enhance search precision.

Natural Language Processing

Previous work on clinical trial refinement has relied on traditional natural language processing. Bui and Zeng-Treitler4 built a system, regular expression discovery (RED), that uses a top-down approach involving natural language processing and text matching to categorize smoking and pain status data sets. The authors use the matching text standard to a set of clinical trials to create regular expressions for searching for new trials of the same type. They created 2 text classifiers based on the RED regular expression classifier. The accuracy and F score for the system are 83.0% and 85.7%, respectively.

Frenz5 created a Perl Regular (PREP), allowing clinicians to search PubMed for clinical trials focusing on mutations that cause deafness. The system utilizes regular expressions. The system largely mirrors the procedures implemented by state-of-the-art information retrieval systems. Although this system utilizes regular expressions in its search, it does not verify results for false positives, allowing search terms with multiple meanings to slip through the system. The accuracy of the PREP system is 77%.

Meric-Bernstam et al6 match clinical trials to gene-level alterations by combining natural language processing of gene names with therapeutics known a priori to target those genes. The authors aimed to automatically match patients with specific mutations to MD Anderson Cancer Center trials that focused on the same mutation. In the final results, 28.4% of patients who matched with clinical trials enrolled in the proposed trials.

Large Language Models

Since the relatively recent advent of LLMs, a growing cohort of researchers has used them to connect patients with clinical trials in various stages of the clinical trial pipeline.

Hamer et al.7 use InstructGPT to partially automate the pre-screening process for patients by cross-referencing 10 synthesized medical profiles of candidates with eligibility criteria of trials in ClinicalTrials.gov. The model was able to correctly identify criteria as screenable with an accuracy of 72%.

Jin et al. create a comprehensive LLM-based framework (TrialGPT) that has 3 modules: a module (TrialGPT-Retrieval) that retrieves candidate trials, a second module (TrialGPT-Matching) that predicts patient eligibility based on trial criteria, and a TrialGPT-Ranking module that generates scores for each trial to facilitate ranking. TrialGPT was tested on 3 cohorts of 183 synthetic patients with 75 000 trial annotations.

Peikos et al8 use GPT to extract patient-related information from clinical notes and use this to retrieve pertinent clinical trials. GPT was used to construct a query to search for eligible clinical trials for the patient using the extracted information. Their approach outperforms the baseline using TREC 2022 benchmarks.

Nievas et al9 investigated using both open source LLMs (Llama) and proprietary LLMs (GPT) for clinical trial retrieval. The use of open source LLMs mitigates concerns surrounding patient privacy and data leakage. They used LLMs to generate criterion-level explanations for each trial included in the TREC 2011 and 2022 benchmarks based on a patient’s summary. They found that the performance of open source LLMs can surpass that of proprietary LLMs, given careful fine-tuning.

This article is an extension and improvement of previous work (CTMine).10 The CTMine system utilized regular expressions to verify that gene/mutation names were correctly represented in the wording of each clinical trial retrieved from ClinicalTrials.gov. To further refine the search for clinical trials by mutation, the authors created a machine learning model to exclude clinical trials not pertinent to a search for cancer mutation-focused clinical trials (such as those in which the gene name has a second meaning or the mutation appears only in an exclusion context, as described above). The CTMine system focused on clinical trials that included patients with mutations in the ALK, BRAF, EGFR, ERBB2, KIT, and KRAS genes. Physicians (2-4 per trial) were then asked to judge whether each clinical trial was relevant to a specific gene, and their responses were compared to the output of the CTMine machine learning model. Results ranged between an F-score of 57.1% and 82.1%, depending on the gene mutation.

With the advent of Large Language Models, we explore whether GPT 4.0 and Gemini 2.0, when given a clinical trial and a gene mutation name via a prompt, would be able to ascertain whether a clinical trial was suited for a patient with that particular gene mutation, even with the presence of confusing information in the clinical trial, as previously mentioned. The positive results demonstrated that the 2 LLMs could successfully perform the proposed task and could be further improved with future fine-tuning of their parameters. The proposed methods are not intended to replace the clinical expertise of clinical research coordinators or physicians, but rather serve as a first step in identifying clinical trials that could be beneficial to patients with a specific cancer mutation. The system can be used for a single clinical trial or to scan a large corpus.

Methods

Clinical Trial Collection

A web crawler was developed using Python to extract mutation data from the COSMIC (Catalog of Somatic Mutations in Cancer) search engine API for specific genes. COSMIC is an online database that catalogs all known cancer mutations associated with particular genes, including identifiers for related studies.

After retrieving mutation data and associated alternate IDs from COSMIC, the crawler constructs a separate query for each mutation and sends it to ClinicalTrials.gov to fetch relevant clinical trials. Each trial’s description is then analyzed to verify that the queried mutation is mentioned, which is not always guaranteed. Regular expressions (outlined in Table 1) were used to search for mutations within the trial descriptions.
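The per-mutation query step can be sketched as follows. This is a minimal illustration, not the crawler used in the study: it assumes the public ClinicalTrials.gov v2 REST endpoint and its `query.term` and `pageSize` parameters, and the function name and the two ALK mutation names are hypothetical stand-ins for a COSMIC result set. No network request is made; the sketch only builds the URLs.

```python
from urllib.parse import urlencode

# Assumed endpoint: the public ClinicalTrials.gov v2 REST API.
CTGOV_STUDIES_URL = "https://clinicaltrials.gov/api/v2/studies"


def build_trial_queries(gene, mutation_names, page_size=100):
    """Return one search URL per mutation, e.g. for 'ALK F1174L'."""
    urls = []
    for mutation in mutation_names:
        params = {
            "query.term": f"{gene} {mutation}",  # free-text search term
            "pageSize": page_size,               # results per page
        }
        urls.append(f"{CTGOV_STUDIES_URL}?{urlencode(params)}")
    return urls


# Hypothetical mutation names standing in for COSMIC output.
for url in build_trial_queries("ALK", ["F1174L", "R1275Q"]):
    print(url)
```

Each returned trial would still need its text checked for the mutation, since a free-text search does not guarantee that the term appears in a relevant sense.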

| Regular expressions |

|---|

| [a-zA-Z]+[\d]+[a-zA-Z/]*[GENE MUT]+ |

| p.[a-zA-Z]+[\d]+[a-zA-Z/]*[GENE MUT]+ |

| [a-zA-Z]+[\d]+[a-zA-Z/]+[GENE MUT]+ |

| p.[a-zA-Z]+[\d]+[a-zA-Z/]+[GENE MUT]+ |

Next, the system stores the clinical trial text, title, and a link to the gene mutation in a PostgreSQL database. Additional metadata for each trial is also saved, such as trial status, phase, type, and condition.

For example, consider the ALK gene. The CTMine system collects mutation names and alternate IDs related to ALK from COSMIC. It then generates queries for each mutation and associated ID. One trial returned might be “Study of Oral RXDX-101 in Adult Patients With Locally Advanced or Metastatic Cancer Targeting NTRK1, NTRK2, NTRK3, ROS1, or ALK Molecular Alterations (STARTRK-1),” which matches the ALK gene using the regular expressions. However, another trial, “A Long-Term Safety Study of ALKS 5461,” mentions the drug ALKS 5461 rather than the ALK gene, so the system discards this trial since it doesn’t match the mutation criteria.
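The ALK example can be reproduced with a short sketch. The patterns here are simplified assumptions loosely modeled on Table 1, not the exact expressions used by CTMine, and both function names are hypothetical. The key idea is that a word boundary keeps the gene symbol from matching inside a longer token such as a drug name.

```python
import re


def mentions_gene(text, gene):
    """Return True if `gene` appears as a standalone token in `text`."""
    # \b stops 'ALK' from matching inside the drug name 'ALKS'.
    return re.search(rf"\b{re.escape(gene)}\b", text) is not None


def find_mutations(text):
    """Find protein-level mutation names such as 'V600E' or 'p.L858R'."""
    # Optional 'p.' prefix, then letters, digits, and trailing letters.
    pattern = r"\b(?:p\.)?[A-Za-z]+\d+[A-Za-z]+\b"
    return re.findall(pattern, text)


kept = ("Study of Oral RXDX-101 in Adult Patients With Locally Advanced or "
        "Metastatic Cancer Targeting NTRK1, NTRK2, NTRK3, ROS1, or ALK "
        "Molecular Alterations (STARTRK-1)")
discarded = "A Long-Term Safety Study of ALKS 5461"

print(mentions_gene(kept, "ALK"))       # gene symbol present as its own token
print(mentions_gene(discarded, "ALK"))  # only the drug name 'ALKS' is present
print(find_mutations("BRAF V600E or EGFR p.L858R"))
```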

LLM Prompting and Decision Tree Exploration

The LLMs used were GPT 4.0 and Gemini 2.0. For both LLMs, the default parameters were used. Both LLMs were accessed via their respective API interfaces.

For each clinical trial, the following prompt was given to either GPT 4.0 or Gemini 2.0: “Based on the following clinical trial information, would a patient with a XX gene mutation be eligible for the clinical trial?” where XX is the gene name. The prompt was sent via the respective API, and the response was returned in JSON format. The number of clinical trials per gene is given in Table 2. Only the title, eligibility criteria, healthy volunteers, sex, minimum age, stages, and study population information were sent per clinical trial to the LLM. This information was obtained from ClinicalTrials.gov via its API. ClinicalTrials.gov study IDs for clinical trials used in this study are included in the supplement. Figures 1 and 2 provide examples of the prompt and data sent to the LLM, along with the response (in this case, from GPT-4).
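As a rough sketch of how such a prompt could be assembled, the snippet below fills in the gene name and attaches only a chosen subset of trial fields. The dictionary keys, the helper name, and the sample trial are hypothetical; the actual field names depend on the ClinicalTrials.gov API response, and the call to the LLM itself is omitted.

```python
import json

PROMPT_TEMPLATE = (
    "Based on the following clinical trial information, would a patient "
    "with a {gene} gene mutation be eligible for the clinical trial?"
)


def build_prompt(gene, trial, fields):
    """Combine the question with the selected trial fields as JSON."""
    # Keep only the fields that should be shown to the LLM.
    selected = {key: trial[key] for key in fields if key in trial}
    return PROMPT_TEMPLATE.format(gene=gene) + "\n\n" + json.dumps(selected, indent=2)


# Hypothetical trial record; key names are assumptions for illustration.
trial = {
    "title": "Example colorectal adenocarcinoma study",
    "eligibilityCriteria": "KRAS and BRAF wild-type status required",
    "sex": "ALL",
    "internalNote": "not sent to the LLM",
}
prompt = build_prompt("BRAF", trial, ["title", "eligibilityCriteria", "sex"])
print(prompt)
```

Restricting the payload to a fixed field list keeps the prompt short and makes it explicit which parts of the trial record the model can see.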

| Gene | Not_wanted (no) | Wanted (yes) | Total |

|---|---|---|---|

| ALK | 16 | 13 | 29 |

| BRAF | 9 | 39 | 48 |

| EGFR | 49 | 77 | 126 |

| ERBB2 | 12 | 42 | 54 |

| KIT | 59 | 8 | 67 |

| KRAS | 17 | 27 | 44 |

| Total | 162 | 206 | 368 |

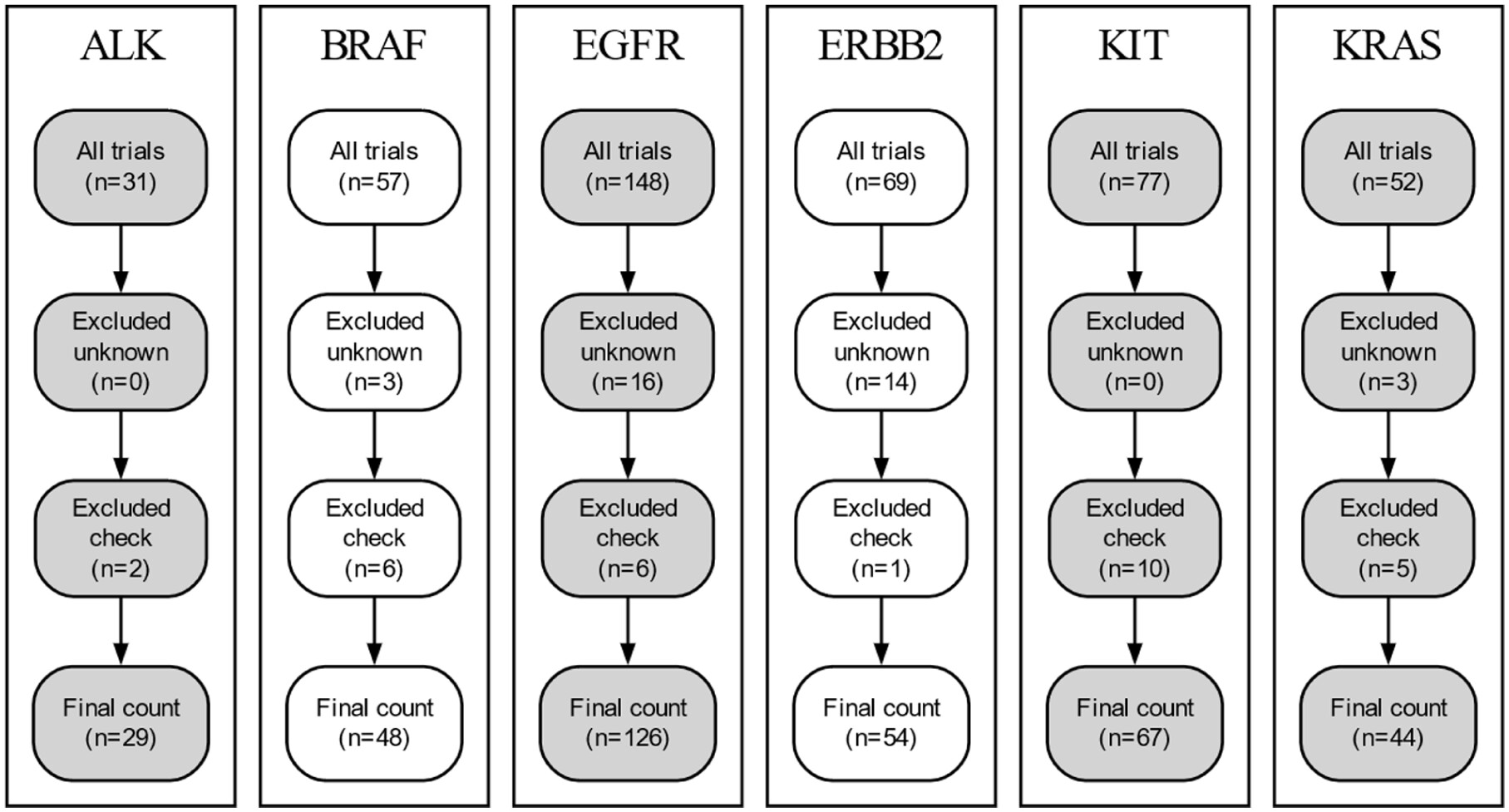

Four oncology residents from Johns Hopkins University were recruited to score each trial. Table 2 features the “grades” that were given by the clinicians as part of the original CTMine system. These grades indicate whether a particular clinical trial is appropriate for a patient with a certain gene mutation. The authors marked the grade as the majority consensus of clinicians, with ties broken in the following order: yes, no, unknown, and yes but with a check. Researchers could also use grades such as “Unknown” or “Useful but Check” in the original system. Studies with these grades were excluded from the current study. Figure 3 is a flow diagram illustrating the number of trials excluded from this study based on the assigned grade.
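The consensus rule can be illustrated with a small sketch. It assumes that “ties broken in the following order” means the earliest grade in the listed order wins among tied grades; the label strings and the function name are hypothetical.

```python
from collections import Counter

# Tie-break priority as listed in the text; label strings are assumptions.
TIE_BREAK_ORDER = ["yes", "no", "unknown", "yes_check"]


def consensus_grade(grades):
    """Return the majority grade, breaking ties by TIE_BREAK_ORDER."""
    counts = Counter(grades)
    top = max(counts.values())
    tied = [grade for grade, n in counts.items() if n == top]
    # Among equally frequent grades, prefer the one listed first.
    return min(tied, key=TIE_BREAK_ORDER.index)


print(consensus_grade(["no", "no", "yes"]))        # clear majority
print(consensus_grade(["yes", "no"]))              # tie between yes and no
print(consensus_grade(["unknown", "yes_check"]))   # tie between lower-priority grades
```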

Next, a decision tree algorithm was used to explore the responses provided by the LLM from a natural language processing perspective, comparing them against the gold standard physician classifications. The decision tree algorithm was implemented per gene using the scikit-learn DecisionTreeClassifier model.11

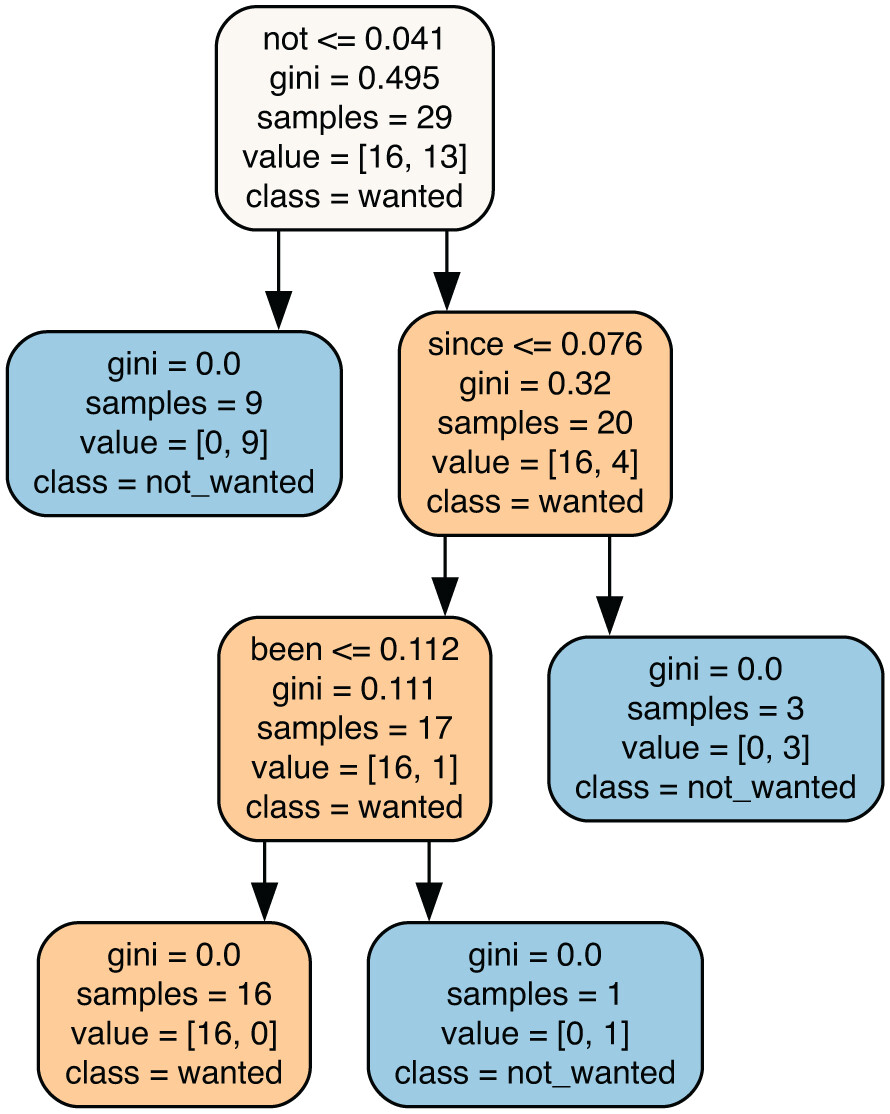

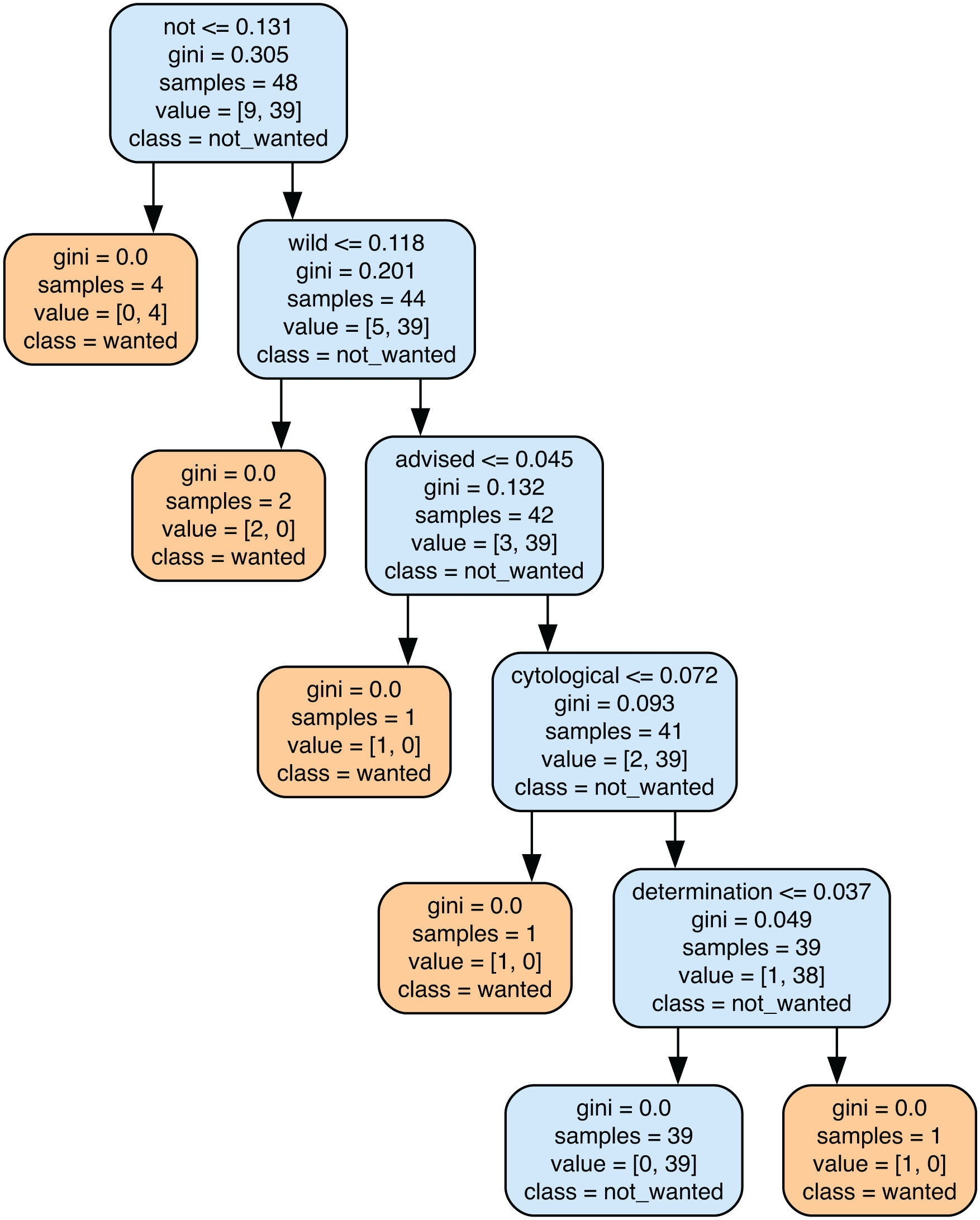

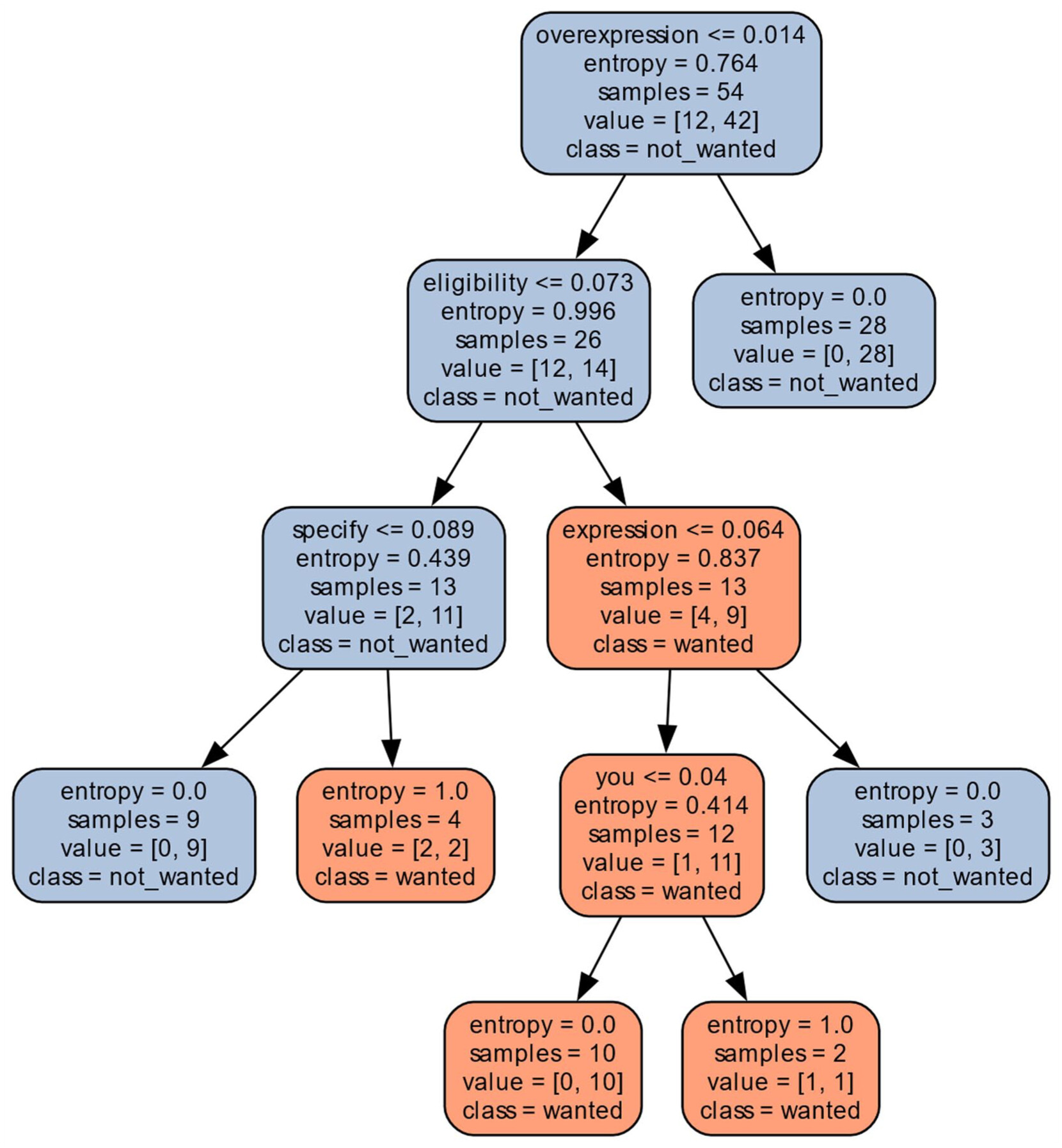

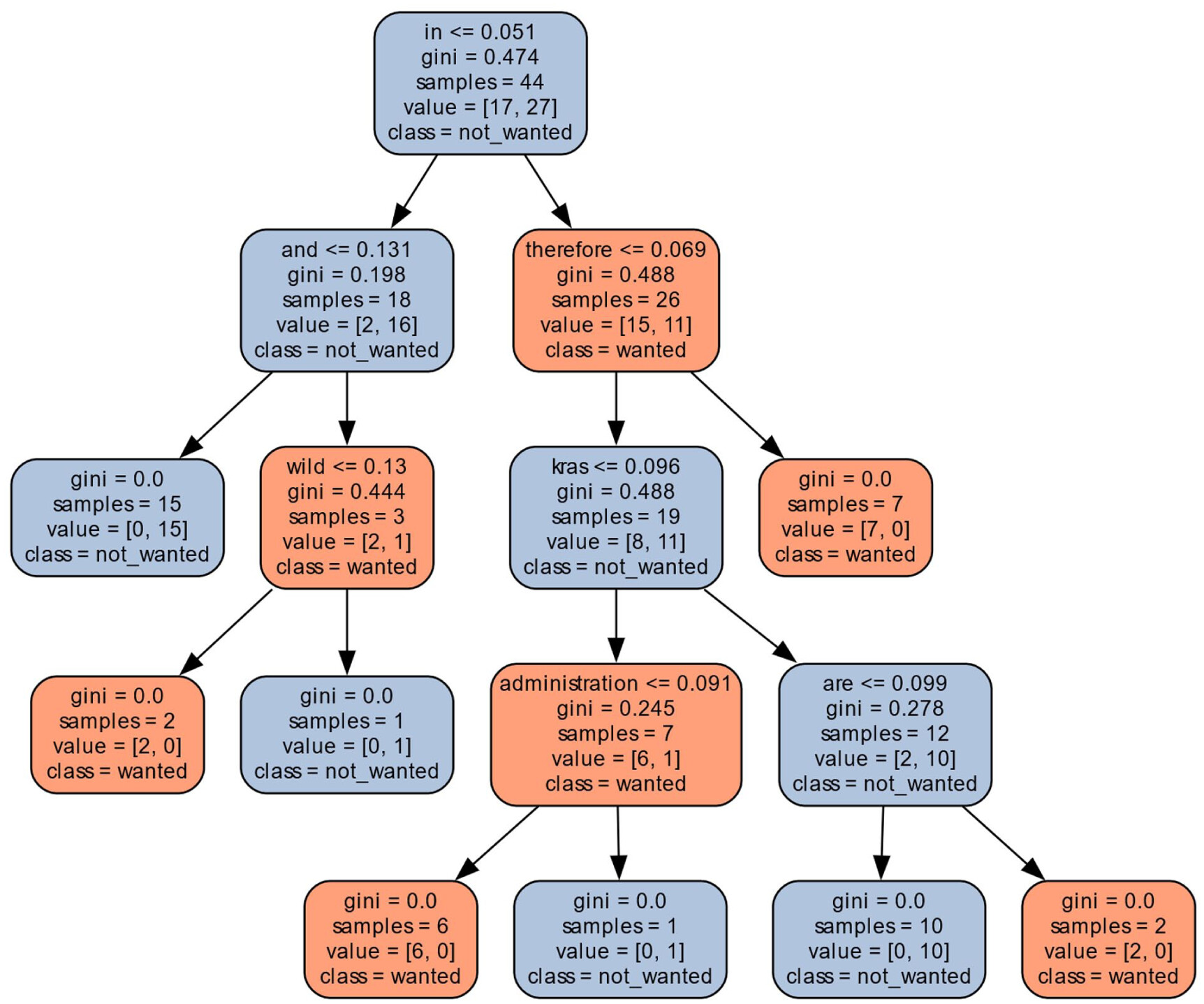

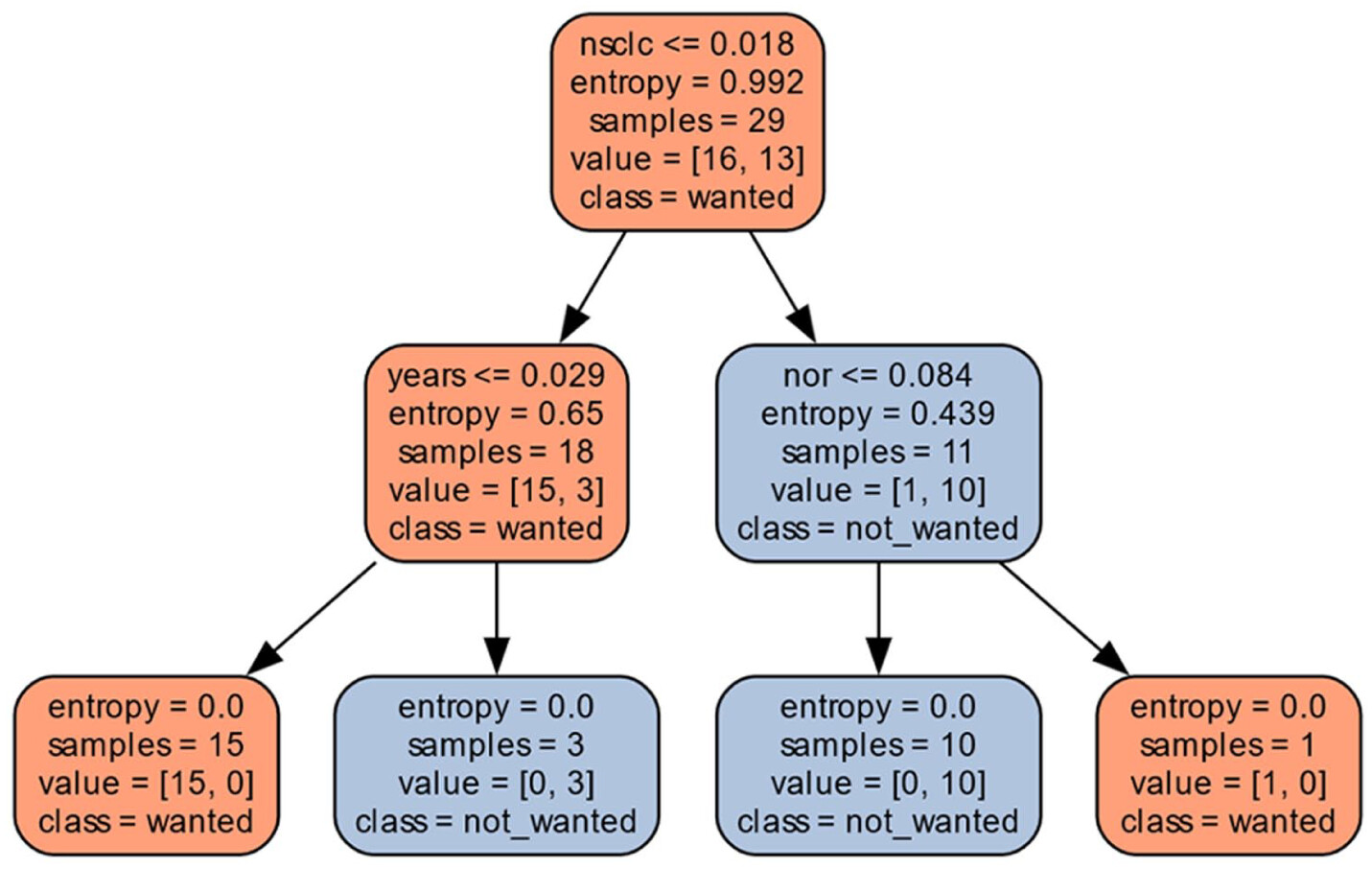

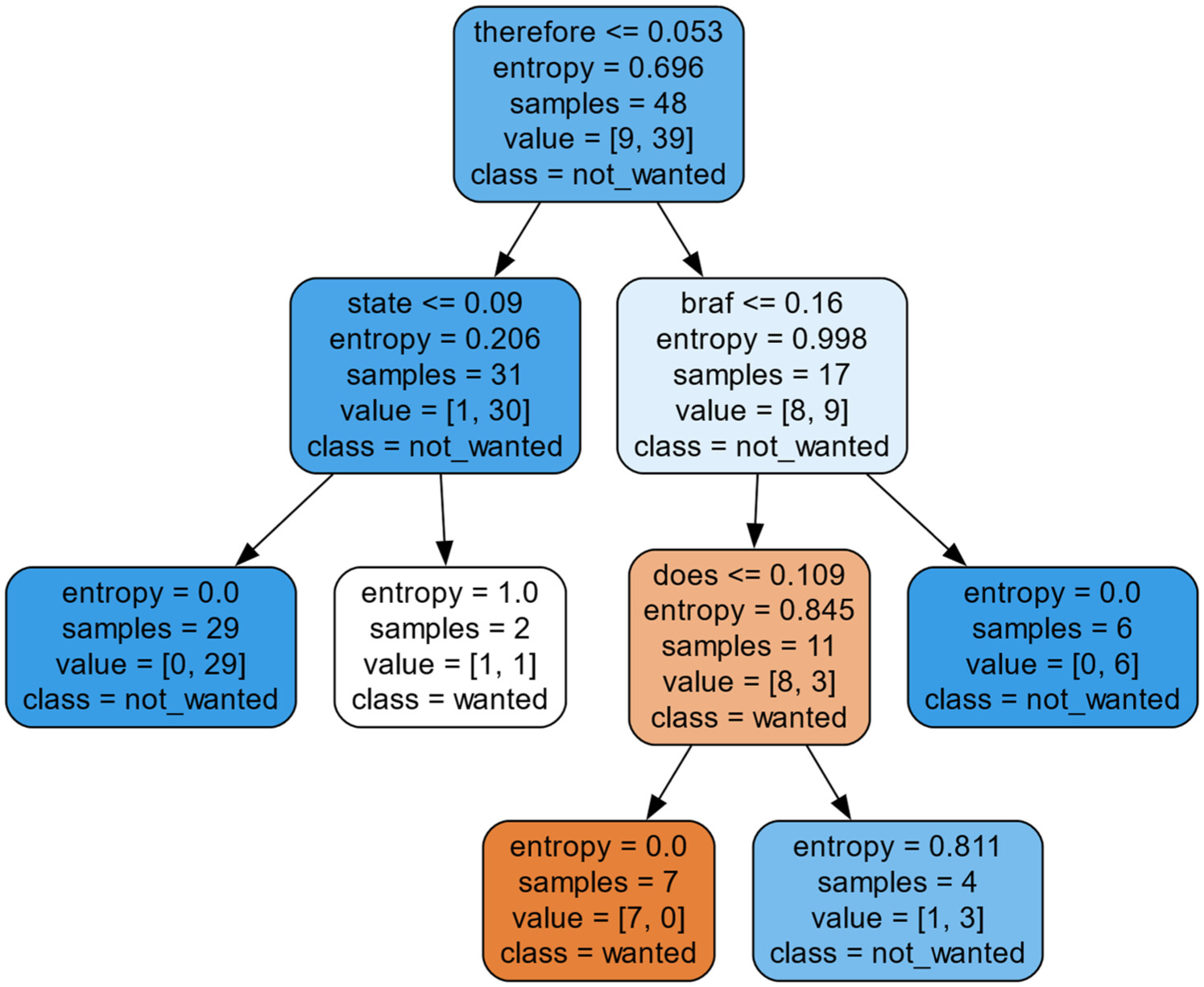

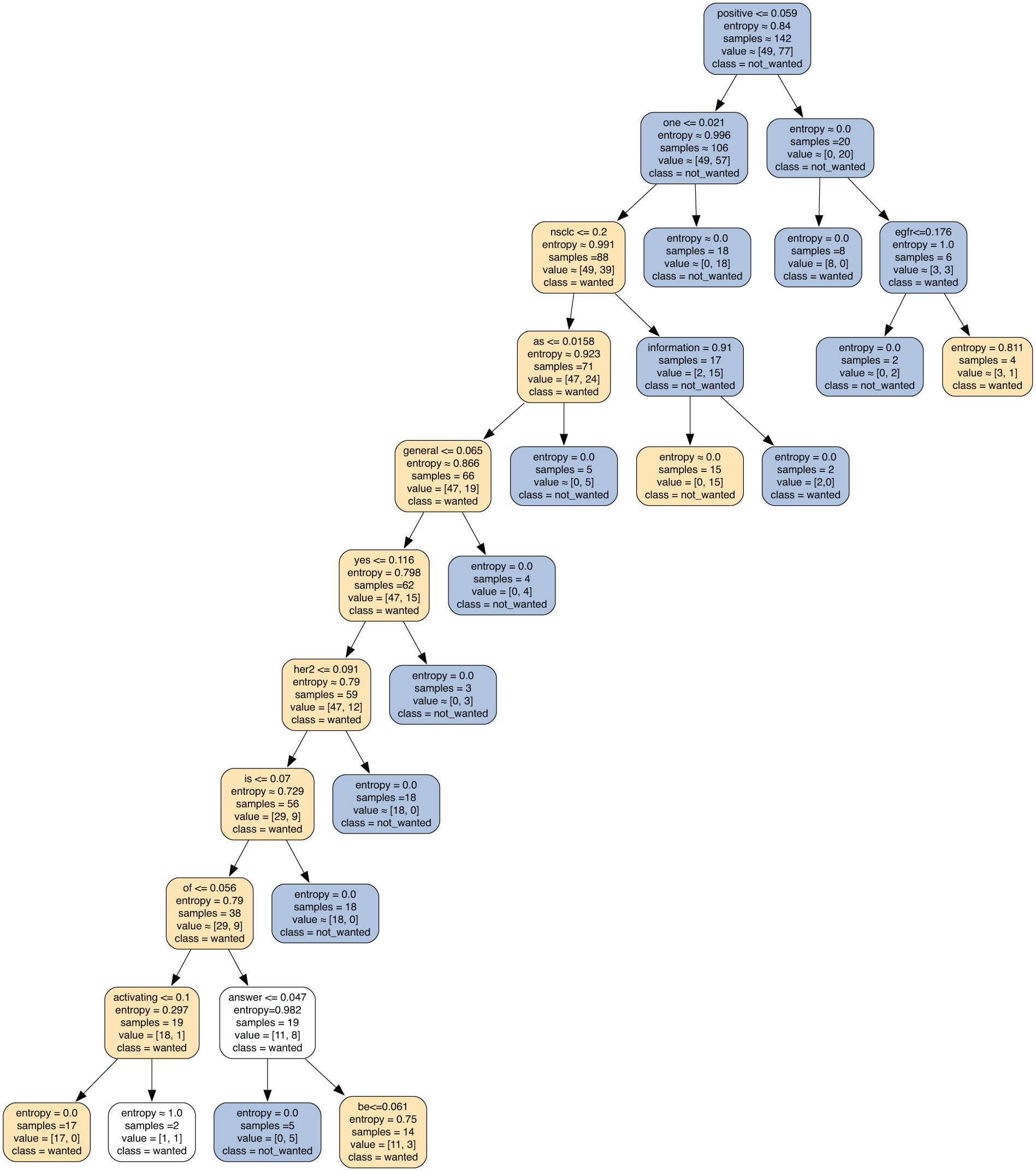

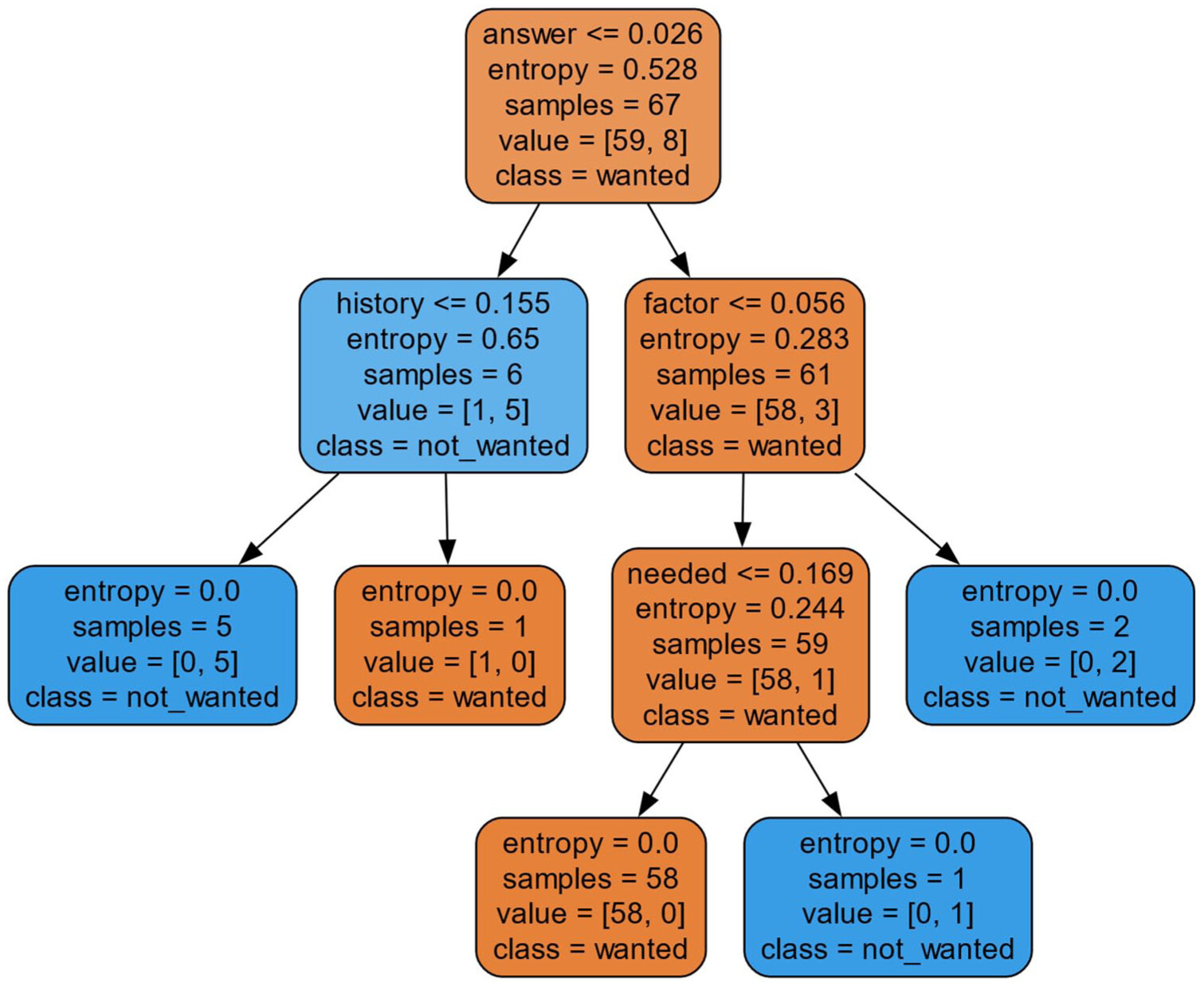

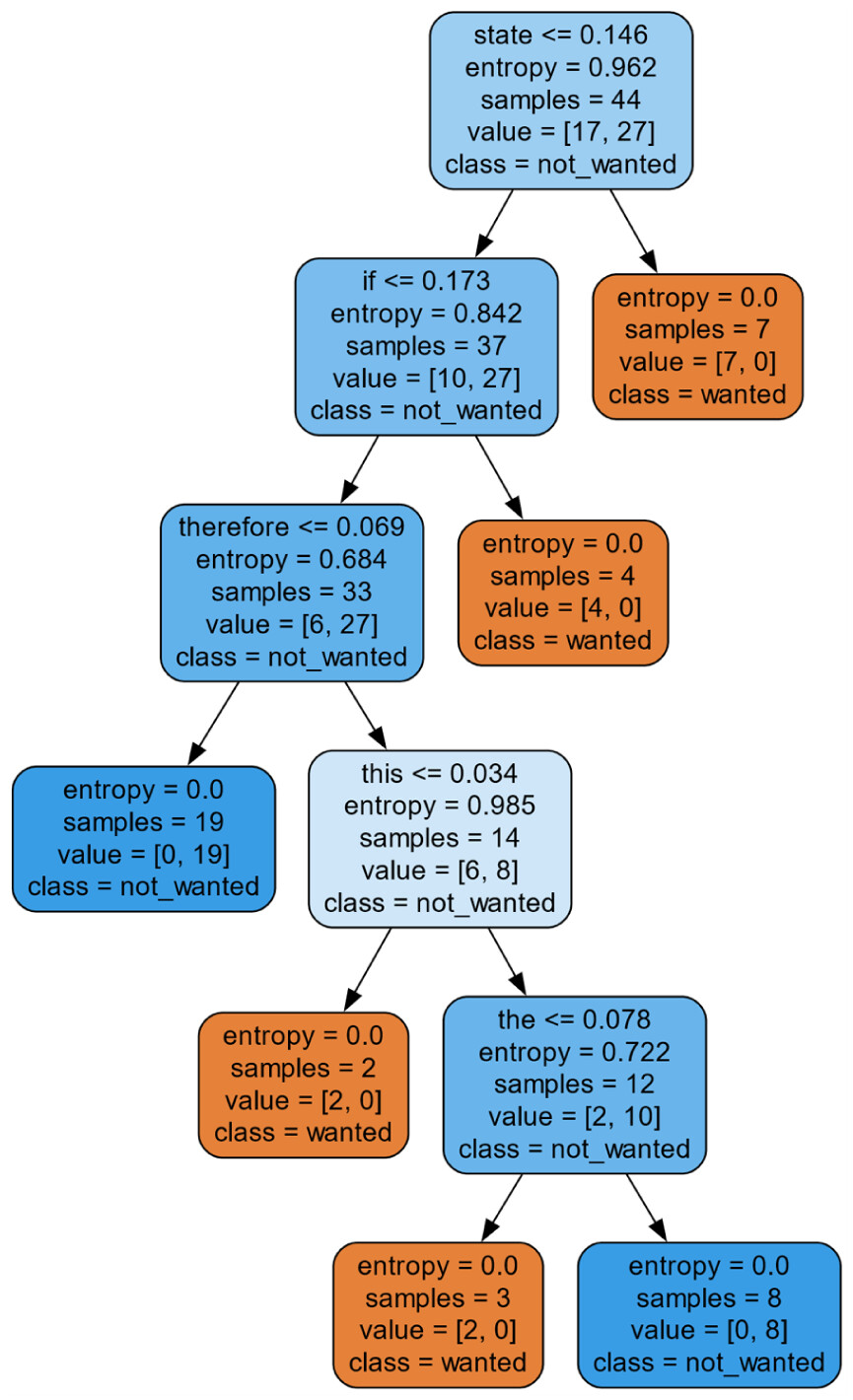

The precision, recall, and F1-score are provided for each gene using GPT 4.0 and Gemini 2.0 in Tables 3 and 4, calculated via fivefold cross-validation with the DecisionTreeClassifier. The authors also used grid search (GridSearchCV)12 to find the best configuration of parameters for each decision tree. The parameters to be optimized were the criterion, maximum depth, minimum number of samples per leaf, and minimum number of samples per split. Tables 6 and 9 provide the optimized parameters for each decision tree model for GPT 4.0 and Gemini 2.0, and Tables 7 and 8 present the precision, recall, and F1-score per gene for both LLMs using the best set of parameters per decision tree. The decision trees generated for each gene in GPT 4.0 responses are displayed in Figures 4 to 9. The decision trees generated for each gene in Gemini 2.0 are shown in Figures 10 to 15. The reporting of this study conforms to the STARD 2015 statement.13
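A minimal sketch of this evaluation setup is given below. It makes several assumptions that the text does not confirm: LLM responses are turned into word-count features with CountVectorizer, the toy responses and labels are invented for illustration, and the grid values are examples rather than the grid actually searched.

```python
from sklearn.feature_extraction.text import CountVectorizer
from sklearn.model_selection import GridSearchCV, cross_validate
from sklearn.pipeline import make_pipeline
from sklearn.tree import DecisionTreeClassifier

# Toy LLM responses with physician-style gold labels (illustrative only).
texts = [f"The patient would be eligible for trial {i}." for i in range(10)]
texts += [f"The patient would not be eligible for trial {i}." for i in range(10)]
labels = ["wanted"] * 10 + ["not_wanted"] * 10

# Bag-of-words counts feeding a decision tree (assumed featurization).
model = make_pipeline(CountVectorizer(), DecisionTreeClassifier(random_state=0))

# Fivefold cross-validation with weighted precision, recall, and F1.
scores = cross_validate(
    model, texts, labels, cv=5,
    scoring=["precision_weighted", "recall_weighted", "f1_weighted"],
)
print("mean F1:", scores["test_f1_weighted"].mean())
print("std F1:", scores["test_f1_weighted"].std())

# Grid search over the four tree parameters named in the text.
param_grid = {
    "decisiontreeclassifier__criterion": ["gini", "entropy"],
    "decisiontreeclassifier__max_depth": [None, 10, 20],
    "decisiontreeclassifier__min_samples_leaf": [1, 2],
    "decisiontreeclassifier__min_samples_split": [2, 10],
}
search = GridSearchCV(model, param_grid, cv=5, scoring="f1_weighted")
search.fit(texts, labels)
print(search.best_params_)
```

Reporting the cross-validated mean and standard deviation, as in the first half of the sketch, gives an estimate on held-out folds, whereas a single tree refit on all data with the best parameters is better suited to inspection than to performance claims.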

| Gold std/GPT 4.0 | Precision | Recall | F1-score |

|---|---|---|---|

| ALK | |||

| Wanted | 0.37, std = 0.34 | 0.37, std = 0.34 | 0.37, std = 0.34 |

| Not_wanted | 0.62, std = 0.13 | 0.75, std = 0.14 | 0.67, std = 0.10 |

| Weighted avg overall | 0.51, std = 0.22 | 0.59, std = 0.14 | 0.54, std = 0.19 |

| BRAF | |||

| Wanted | 0.80, std = 0.04 | 0.90, std = 0.11 | 0.84, std = 0.05 |

| Not_wanted | 0.00, std = 0.00 | 0.00, std = 0.00 | 0.00, std = 0.00 |

| Weighted avg overall | 0.65, std = 0.07 | 0.73, std = 0.07 | 0.68, std = 0.04 |

| EGFR | |||

| Wanted | 0.59, std = 0.13 | 0.65, std = 0.20 | 0.61, std = 0.15 |

| Not_wanted | 0.35, std = 0.29 | 0.28, std = 0.24 | 0.30, std = 0.24 |

| Weighted avg overall | 0.49, std = 0.19 | 0.51, std = 0.18 | 0.49, std = 0.17 |

| ERBB2 | |||

| Wanted | 0.79, std = 0.05 | 0.81, std = 0.06 | 0.79, std = 0.04 |

| Not_wanted | 0.88, std = 0.04 | 1.00, std = 0.00 | 0.94, std = 0.02 |

| Weighted avg overall | 0.67, std = 0.07 | 0.68, std = 0.06 | 0.67, std = 0.06 |

| KIT | |||

| Wanted | 0.00, std = 0.00 | 0.00, std = 0.00 | 0.00, std = 0.00 |

| Not_wanted | 0.93, std = 0.07 | 0.87, std = 0.13 | 0.89, std = 0.07 |

| Weighted avg overall | 0.77, std = 0.07 | 0.88, std = 0.04 | 0.82, std = 0.06 |

| KRAS | |||

| Wanted | 0.68, std = 0.09 | 0.93, std = 0.09 | 0.78, std = 0.03 |

| Not_wanted | 0.63, std = 0.41 | 0.30, std = 0.24 | 0.37, std = 0.24 |

| Weighted avg overall | 0.68, std = 0.17 | 0.68, std = 0.06 | 0.62, std = 0.11 |

| Gold std/Gemini 2.0 | Precision | Recall | F1-score |

|---|---|---|---|

| ALK | |||

| Wanted | 0.83, std = 0.24 | 0.67, std = 0.33 | 0.70, std = 0.24 |

| Not_wanted | 0.77, std = 0.23 | 0.87, std = 0.18 | 0.80, std = 0.16 |

| Weighted avg overall | 0.81, std = 0.19 | 0.76, std = 0.19 | 0.75, std = 0.20 |

| BRAF | |||

| Wanted | 0.86, std = 0.08 | 0.74, std = 0.09 | 0.79, std = 0.05 |

| Not_wanted | 0.44, std = 0.17 | 0.43, std = 0.18 | 0.43, std = 0.17 |

| Weighted avg overall | 0.75, std = 0.10 | 0.68, std = 0.09 | 0.70, std = 0.07 |

| EGFR | |||

| Wanted | 0.65, std = 0.10 | 0.66, std = 0.10 | 0.65, std = 0.10 |

| Not_wanted | 0.38, std = 0.09 | 0.41, std = 0.10 | 0.39, std = 0.09 |

| Weighted avg overall | 0.57, std = 0.13 | 0.57, std = 0.12 | 0.57, std = 0.13 |

| ERBB2 | |||

| Wanted | 0.84, std = 0. | 0.76, std = 0.09 | 0.79, std = 0.07 |

| Not_wanted | 0.31, std = 0.19 | 0.47, std = 0.36 | 0.36, std = 0.22 |

| Weighted avg overall | 0.72, std = 0.16 | 0.69, std = 0.10 | 0.69, std = 0.12 |

| KIT | |||

| Wanted | 0.37, std = 0.41 | 0.50, std = 0.50 | 0.37, std = 0.34 |

| Not_wanted | 0.93, std = 0.07 | 0.87, std = 0.13 | 0.89, std = 0.07 |

| Weighted avg overall | 0.87, std = 0.10 | 0.82, std = 0.11 | 0.83, std = 0.10 |

| KRAS | |||

| Wanted | 0.70, std = 0.10 | 0.93, std = 0.10 | 0.79, std = 0.08 |

| Not_wanted | 0.63, std = 0.41 | 0.35, std = 0.25 | 0.43, std = 0.29 |

| Weighted avg overall | 0.68, std = 0.19 | 0.70, std = 0.11 | 0.65, std = 0.15 |

| Gene mutation | GPT4 F1-score | Gemini 2.0 F1-score | P-value |

|---|---|---|---|

| ALK | 0.75, std = 0.20 | 0.54, std = 0.19 | P < .001 |

| BRAF | 0.68, std = 0.04 | 0.70, std = 0.07 | No statistical difference |

| EGFR | 0.49, std = 0.17 | 0.57, std = 0.13 | P < .001 |

| ERBB2 | 0.67, std = 0.06 | 0.69, std = 0.12 | No statistical difference |

| KIT | 0.82, std = 0.06 | 0.83, std = 0.10 | No statistical difference |

| KRAS | 0.62, std = 0.11 | 0.65, std = 0.15 | No statistical difference |

| Gene name | Criterion | Maximum depth | Minimum samples per leaf | Minimum samples split |

|---|---|---|---|---|

| ALK | Gini | 10 | 2 | 10 |

| BRAF | Gini | None | 1 | 2 |

| EGFR | Gini | 10 | 1 | 2 |

| ERBB2 | Entropy | 40 | 2 | 2 |

| KIT | Entropy | 20 | 2 | 2 |

| KRAS | Gini | 40 | 4 | 10 |

| Gold std/GPT 4.0 | Precision | Recall | F1-score |

|---|---|---|---|

| ALK | |||

| Yes | 0.94 | 1.0 | 0.97 |

| No | 1.0 | 0.96 | 0.96 |

| Weighted avg overall | 0.97 | 0.97 | 0.97 |

| BRAF | |||

| Yes | 1.0 | 1.0 | 1.0 |

| No | 1.0 | 1.0 | 1.0 |

| Weighted avg overall | 1.0 | 1.0 | 1.0 |

| EGFR | |||

| Yes | 1.0 | 1.0 | 1.0 |

| No | 1.0 | 1.0 | 1.0 |

| Weighted avg overall | 1.0 | 1.0 | 1.0 |

| ERBB2 | |||

| Yes | 0.86 | 1.00 | 0.92 |

| No | 1.0 | 0.95 | 0.98 |

| Weighted avg overall | 0.97 | 0.96 | 0.96 |

| KIT | |||

| Yes | 0.98 | 1.00 | 0.99 |

| No | 1.0 | 0.88 | 0.93 |

| Weighted avg overall | 0.99 | 0.99 | 0.98 |

| KRAS | |||

| Yes | 0.77 | 1.00 | 0.87 |

| No | 1.0 | 0.81 | 0.90 |

| Weighted avg overall | 0.89 | 0.91 | 0.88 |

| Gold std/Gemini 2.0 | Precision | Recall | F1-score |

|---|---|---|---|

| ALK | |||

| Yes | 1.0 | 1.0 | 1.0 |

| No | 1.0 | 1.0 | 1.0 |

| Weighted avg overall | 1.0 | 1.0 | 1.0 |

| BRAF | |||

| Yes | 0.89 | 0.89 | 0.89 |

| No | 0.97 | 0.97 | 0.97 |

| Weighted avg overall | 0.96 | 0.96 | 0.96 |

| EGFR | |||

| Yes | 0.96 | 1.0 | 0.98 |

| No | 1.0 | 0.97 | 0.99 |

| Weighted avg overall | 0.98 | 0.98 | 0.98 |

| ERBB2 | |||

| Yes | 1.0 | 1.0 | 1.0 |

| No | 1.0 | 1.0 | 1.0 |

| Weighted avg overall | 1.0 | 1.0 | 1.0 |

| KIT | |||

| Yes | 1.0 | 1.0 | 1.0 |

| No | 1.0 | 1.0 | 1.0 |

| Weighted avg overall | 1.0 | 1.0 | 1.0 |

| KRAS | |||

| Yes | 1.0 | 1.0 | 1.0 |

| No | 1.0 | 1.0 | 1.0 |

| Weighted avg overall | 1.0 | 1.0 | 1.0 |

Results

Tables 3 and 4 present the fivefold cross-validation results for all genes and GPT 4.0 and Gemini 2.0 using the DecisionTreeClassifier. The results for GPT show that KIT had the highest F1-score (82%, std = 0.06) but at the cost of its precision in relation to clinical trials marked as “wanted.” This same issue appeared with BRAF, where no clinical trials were chosen as “not_wanted.” Decision trees for ALK, EGFR, ERBB2, and KRAS had a more balanced approach between “wanted” and “not_wanted” tags. Overall F1-scores ranged between 49% and 82%, with the average F1-score being 64%.
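To make the interplay between per-class and weighted scores concrete, the following sketch computes precision, recall, and F1 by hand on an invented toy example in which a classifier never predicts “not_wanted.” The labels and counts are illustrative assumptions, not study data; the point is that the weighted average can remain moderate even when one class scores zero.

```python
def class_scores(gold, pred, label):
    """Precision, recall, and F1 for one class label."""
    tp = sum(g == label and p == label for g, p in zip(gold, pred))
    fp = sum(g != label and p == label for g, p in zip(gold, pred))
    fn = sum(g == label and p != label for g, p in zip(gold, pred))
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    denom = precision + recall
    f1 = 2 * precision * recall / denom if denom else 0.0
    return precision, recall, f1


def weighted_f1(gold, pred):
    """Average the per-class F1 weighted by each class's gold count."""
    labels = sorted(set(gold))
    total = sum(gold.count(lab) * class_scores(gold, pred, lab)[2] for lab in labels)
    return total / len(gold)


# Toy data: four wanted trials, one not_wanted; everything predicted wanted.
gold = ["wanted"] * 4 + ["not_wanted"]
pred = ["wanted"] * 5

print(class_scores(gold, pred, "wanted"))      # high recall, lower precision
print(class_scores(gold, pred, "not_wanted"))  # all zeros: class never predicted
print(round(weighted_f1(gold, pred), 2))
```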

For Gemini 2.0, KIT also had the highest F1-score (83%). Overall F1-scores ranged between 57% and 83%, with the average F1-score being 70%.

Table 5 compares the statistical differences between results for GPT 4.0 and Gemini 2.0 per gene. There are statistically significant differences between the 2 LLMs for the ALK gene mutation, where GPT 4.0 performed better (75% vs 54%, P < .001), and for the EGFR gene, where Gemini 2.0 performed better (49% vs 57%, P < .001).

Tables 6 and 9 present the optimal parameters identified for both GPT-4 and Gemini 2.0. In this case, both decision trees for their respective LLMs did very well (88%-100% for GPT, 89%-100% for Gemini). Statistical significance cannot be reported, as only 1 model is trained using the top parameters against all training data. Due to this fact, the authors hesitate to use these findings as the definitive results of the system and would instead defer to the results in Tables 3 and 4. However, the decision trees generated by these ultra-fine-tuned models provide us with an interesting insight into the model’s choices from a natural language processing perspective, and we will highlight some of the more notable decisions per gene and LLM.

| Gene name | Criterion | Maximum depth | Minimum samples per leaf | Minimum samples split |

|---|---|---|---|---|

| ALK | Entropy | 40 | 1 | 10 |

| BRAF | Entropy | 20 | 2 | 10 |

| EGFR | Entropy | 20 | 2 | 5 |

| ERBB2 | Gini | 30 | 1 | 2 |

| KIT | Entropy | None | 1 | 2 |

| KRAS |

Starting with Figure 4, which displays the decision tree for ALK mutation and GPT 4.0, it appears that if the word “not” occurs frequently, the clinical trial is not useful for a patient with the ALK cancer mutation, which is unsurprising. This finding is reflected in the following clinical trial (NCT01838577) response from GPT 4.0, where the word “not” appears twice, indicating that a patient with an ALK gene mutation would not be eligible for the trial.

Based on the clinical trial eligibility criteria you provided, a patient with an ALK gene mutation would not be eligible for this trial. The study population specifically includes:

- A case-cohort of patients with a proven EGFR mutation in exons 18 to 21 from tumor material.

- A control cohort of patients known to be somatic EGFR “wild-type,” meaning no mutation detected in exons 18 to 21 from tumor material.

The criteria focus solely on the EGFR gene mutations or the absence of these mutations (wild-type). There is no mention of ALK gene mutations in the study population or eligibility criteria. Therefore, a patient with an ALK gene mutation does not fit the case or control cohort definitions and, thus, would not be eligible for this trial.

Gemini focused on the presence of the NSCLC (Non-Small Cell Lung Cancer) acronym in Figure 10. It ruled that the clinical trial was inappropriate for the patient with an ALK mutation if that acronym was present. NSCLC was found in the eligibility criteria for the trial NCT01838577:

"eligibilityCriteria": "* Histologically or cytologically diagnosed NSCLC, all histologies are acceptable.* Patients can be included in the study with any disease stage and at any time during the disease course.* Any type (surgery, RadioTherapy, chemotherapy, targeted agents) of previous treatment and any line of treatment are eligible. . .

For the BRAF gene and GPT 4.0, the words “not,” “wild,” “advised,” “cytological,” and “determination” indicated that the clinical trial was inappropriate for the patient, as shown in Figure 5. An example of the occurrence of these words is given in trial NCT01358812 per the eligibility criteria and the GPT 4.0 answer itself:

"eligibilityCriteria": "Inclusion Criteria:* Histologically confirmed colorectal adenocarcinoma;* Availability of formalin-fixed paraffin embedded tumor block from primary or metastasis;* KRAS and BRAF wild-type status of primary colorectal cancer or related metastasis;* :Question:Based on the following clinical trial information, would a patient with a BRAF gene mutation be eligible for the clinical trial?Answer:Based on the eligibility criteria provided for the clinical trial, a patient with a BRAF gene mutation would not be eligible to participate. The inclusion criteria specifically require "KRAS and BRAF wild-type status of primary colorectal cancer or related metastasis." This means the patient’s colorectal cancer must not have mutations in either the KRAS or BRAF genes to be eligible for the trial. Therefore, a patient with a BRAF gene mutation would not meet these criteria and would be considered ineligible for treatment.

The decision tree (Figure 11) chose different words for BRAF per Gemini’s responses to rule out a clinical trial, such as “therefore” and “BRAF,” as seen in Gemini’s answer for study NCT01838577:

Question: Based on the following clinical trial information, would a patient with a BRAF gene mutation be eligible for the clinical trial?

Answer: No. While the criteria state that patients with NSCLC (Non-Small Cell Lung Cancer) at any stage and with any prior treatment are eligible, the *studyPopulation* section specifies that this trial is for patients with proven EGFR mutations or those with wild-type EGFR (no EGFR mutations). The information provided describes a study looking at EGFR, not BRAF. Therefore, a patient with a BRAF mutation would *not* be eligible for this particular trial.

For the ERBB2 mutation (Figure 7), the decision tree for GPT 4.0 data found that the words “overexpression,” “eligibility,” “specify,” and “expression” were keywords that determined if a clinical trial was appropriate for a patient. For example, in trial NCT01248897, the word “overexpression” occurs twice, marking this clinical trial as inappropriate for a patient with the ERBB2 mutation.

Question: Based on the following clinical trial information, would a patient with an ERBB2 gene mutation be eligible for the clinical trial?

Answer: Based on the clinical trial information provided, the eligibility for the trial is primarily based on HER2 status and prior treatment history. The trial mentions inclusion criteria for HER2-positive breast cancer patients, which are determined by either IHC3+ or FISH+ assays, indicating overexpression or amplification of the HER2 protein. The ERBB2 gene, also known as HER2, is responsible for the production of the HER2 protein. Therefore, if a patient has an ERBB2 gene mutation that results in HER2 positivity (such as overexpression or amplification), they would meet the HER2-positive criteria part of the inclusion.

For Gemini and ERBB2 (Figure 13), the decision tree found that the appearance of the words “exclusion” and “carcinoma” ruled out a clinical trial, as reflected in trial NCT00863122:

"eligibilityCriteria": "Inclusion Criteria:* Concurrent or prior malignancy, other than curatively treated carcinoma-in-situ or basal cell carcinoma of the skinQuestion:Based on the following clinical trial information, would a patient with a ERBB2 gene mutation be eligible for the clinical trial?Answer:This trial is for patients with neurofibromatosis type 2 (NF2), indicated by the inclusion criteria requiring a diagnosis of NF2. The criteria mention nothing about ERBB2 mutations. . . . To be eligible, they would need to meet *all* other inclusion criteria and *none* of the exclusion criteria.

For the KIT gene, “mutation,” “answer,” “concurrent,” and “factor” were the most important words used to determine if a clinical trial was acceptable per GPT 4.0 (Figure 8). For example, in trial NCT01806571, the word “concurrent” was important in determining that the trial was suitable for a patient with a KIT mutation.

“eligibilityCriteria”: “Exclusion Criteria” . . . Co-morbid systemic illnesses or other severe concurrent disease which, in the judgment of the investigator, would make the patient inappropriate for entry into this study or interfere significantly with the proper assessment of safety and toxicity of the prescribed regimens

Question: Based on the following clinical trial information, would a patient with a KIT gene mutation be eligible for the clinical trial?

Answer: Based on the provided eligibility criteria for the clinical trial, a patient with a KIT gene mutation would not automatically be excluded from participation. The inclusion criteria specifically mention acute myeloid leukemia (AML) with Kit (CD117) expression, which can be a common feature in patients with a KIT gene mutation. The trial focuses on KIT expression rather than directly on the presence or absence of a KIT gene mutation. However, it is important to note that there are multiple inclusion and exclusion criteria that must be met or avoided. The patient’s overall health status, previous treatments, and concurrent medications, among other factors, must comply with the trial’s requirements as listed in the other criteria.

The decision tree for Gemini 2.0 for the KIT mutation (Figure 14) found that the words “answer,” “history,” “factor,” and “needed” were important for classifying a clinical trial. In the preceding clinical trial example, none of those words are present, which would indicate that trial NCT01806571 was appropriate for a patient with the KIT mutation.

Finally, for the KRAS mutation, the decision tree for GPT 4.0 (Figure 9) found that the words “in,” “therefore,” “kras,” “administration,” “are,” and “wild” were all important in determining whether the clinical trial was appropriate. For example, in trial NCT02129257, the words indicate that the trial is appropriate.

Exclusion criteria: . . . Male patients with a partner of childbearing potential must agree to use contraception in addition to having their partner use another contraceptive method during the trial. . . .Any of the following in 3 months before inclusion: grade 3-4 gastrointestinal bleeding (unless due to resected tumor), treatment resistant peptic ulcer disease, erosive esophagitis or gastritis, infectious or inflammatory bowel disease, or diverticulitis* Other concomitant or previous malignancy, except: * adequately treated in-situ carcinoma of the uterine cervix * basal or squamous cell carcinoma of the skin * cancer in complete remission for >5 years*. . .

Question: Based on the following clinical trial information, would a patient with a KRAS gene mutation be eligible for the clinical trial?

Answer: Based on the eligibility criteria provided for the clinical trial, there is no specific mention of a KRAS gene mutation being a criterion for exclusion or inclusion. The trial does not specify genetic markers like KRAS mutations as part of the eligibility or exclusion criteria. Therefore, a patient with a KRAS gene mutation would still be eligible as long as they meet the other inclusion criteria and do not meet any of the exclusion criteria listed

For the Gemini responses, the decision tree generated found that the words “state,” “if,” “therefore,” “this,” and “the” were important indicators of trial appropriateness (Figure 15). For example, per the last example, the exclusion of the word “state” and the inclusion of the word indicates that the clinical trial is appropriate.

Discussion

With fivefold cross-validation and the DecisionTreeClassifier, overall F1-scores for GPT 4.0 ranged between 49% and 82%, with the average F1-score being 64%. Overall F1-scores for Gemini 2.0 ranged between 57% and 83%, with the average F1-score being 70%. There are statistically significant differences between the 2 LLMs for the ALK gene mutation, where GPT 4.0 performed better (75% vs 54%, P < .001), and for the EGFR gene, where Gemini 2.0 performed better (49% vs 57%, P < .001).

In the case of decision tree parameter optimization, both decision trees for their respective LLMs did very well (88%-100% for GPT, 89%-100% for Gemini). Statistical significance cannot be reported, as only 1 model is trained using the top parameters against all training data. For this reason, the authors hesitate to use these findings as the de facto results. However, the decision trees generated by these ultra-fine-tuned models give us an interesting insight into the model’s choices from a natural language processing perspective.

Limitations and Future Work

One limitation of this work is the absence of LLM fine-tuning. The authors wanted to explore the concept of using decision trees as the interpreter of LLM responses and to obtain natural language-based insight into the LLMs’ decisions. This could present a scalability issue, however, as each gene would need its own decision tree to interpret responses. Another approach would be to change LLM parameters such as temperature and max-tokens to force the LLM to simply answer “Yes” or “No.”
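The alternative mentioned above might look roughly like the following sketch. The settings dictionary is provider-neutral and hypothetical (real parameter names and limits differ between LLM APIs), and build_prompt and parse_yes_no are illustrative helpers, not part of the study's code. In practice the instruction in the prompt does most of the constraining; a low temperature and a small token limit only make the short answer more consistent.

```python
import re
from typing import Optional

# Hypothetical, provider-neutral generation settings (names vary by API).
generation_settings = {"temperature": 0.0, "max_tokens": 3}


def build_prompt(trial_text: str, gene: str) -> str:
    """Append a question that asks for a one-word answer."""
    return (
        f"{trial_text}\n\n"
        f"Question: Based on the clinical trial information above, would a patient "
        f"with a {gene} gene mutation be eligible for the clinical trial? "
        f"Answer with only Yes or No."
    )


def parse_yes_no(reply: str) -> Optional[bool]:
    """Return True for Yes, False for No, None if the reply starts with neither."""
    match = re.match(r"\s*(yes|no)\b", reply, flags=re.IGNORECASE)
    if match is None:
        return None
    return match.group(1).lower() == "yes"


print(parse_yes_no("Yes."))               # True
print(parse_yes_no(" no"))                # False
print(parse_yes_no("Based on the data"))  # None
```

Returning None for anything other than a leading Yes or No keeps unparseable replies visible instead of silently counting them as ineligible.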

Another limitation is that we couched our analysis in natural language understanding and the inherent ability of LLMs to find patterns in words from a textual viewpoint. Future work could examine LLM choices in light of more robust biological considerations.

Future work could also include additional large language models and additional mutations. A wider variety of prompts, including more complex prompts that contain multiple inclusion and exclusion criteria, could also be explored. A larger dataset could potentially identify weak points in using LLMs to identify clinical trials for patients. More specialized LLMs, such as those built for the medical domain (eg, ClinicalBERT14 and BioBERT15), could also yield interesting and beneficial results.

Additionally, this approach could be built into a larger application that clinicians could use to search clinical trials based on a cancer mutation. The application could layer the ClinicalTrials.gov API and an LLM API. However, rate limiting and usage costs could be an issue when relying on these APIs in the long term.
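One way such layering could be organized is sketched below. Everything here is an assumption for illustration: the endpoint and query parameter names follow the ClinicalTrials.gov v2 API as the editor understands it and should be checked against the current documentation, the trial identifiers are placeholders, and fetch_trials and ask_llm are injected callables so that no network request or LLM provider is hard-coded.

```python
from typing import Callable, Dict, List
from urllib.parse import urlencode

# Assumed ClinicalTrials.gov v2 search endpoint; verify before use.
CTGOV_STUDIES_URL = "https://clinicaltrials.gov/api/v2/studies"


def build_search_url(gene: str, page_size: int = 10) -> str:
    """Build a search URL for trials mentioning the gene (assumed parameter names)."""
    query = urlencode({"query.term": f"{gene} cancer", "pageSize": page_size})
    return f"{CTGOV_STUDIES_URL}?{query}"


def match_trials(
    gene: str,
    fetch_trials: Callable[[str], Dict[str, str]],
    ask_llm: Callable[[str], bool],
) -> List[str]:
    """Return IDs of trials the LLM judges appropriate for the mutation.

    fetch_trials(url) -> {trial_id: eligibility_text}
    ask_llm(prompt)   -> True if the LLM answers that the patient is eligible
    """
    matches = []
    for trial_id, criteria in fetch_trials(build_search_url(gene)).items():
        prompt = (
            f"{criteria}\n\nQuestion: would a patient with a {gene} gene "
            f"mutation be eligible for the clinical trial?"
        )
        if ask_llm(prompt):
            matches.append(trial_id)
    return matches


# Usage with stand-in callables (placeholder IDs, no network access).
fake_fetch = lambda url: {
    "TRIAL-A": "No restriction on KRAS status.",
    "TRIAL-B": "Patients with KRAS mutations are excluded.",
}
fake_llm = lambda prompt: "excluded" not in prompt
print(match_trials("KRAS", fake_fetch, fake_llm))
```

Keeping both services behind plain callables also gives a natural place to add caching or throttling, which is where the rate-limit and cost concerns would be handled.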

Conclusion

Our proposed system differs from and expands on previous work in several respects. First, the proposed system does not start from patient profiles or notes, but begins with a specific gene mutation. The resulting performance of the system demonstrates that having only this information can be beneficial; however, a module that extracts information from patient profiles could easily be added to the front end of our system. Second, this system does not use a benchmark but instead uses a collection of trials that have been hand-curated by oncology residents, resulting in novel insights. Another point of impact is that the proprietary LLMs used (GPT 4.0 and Gemini 2.0) were not fine-tuned on the data and still performed well (64% for GPT 4.0 and 70% for Gemini 2.0). Future work includes fine-tuning both LLMs and using a combination of proprietary and open-source LLMs. Nevertheless, this work demonstrates the feasibility of using proprietary LLMs “off the shelf” with limited patient information to evaluate clinical trial eligibility.

Ethical Considerations

As all data was de-identified, IRB approval was not required.

Consent to Participate

As this study involved only de-identified data, informed consent from participants was not required.

Declaration of Conflicting Interests

The authors declared no potential conflicts of interest with respect to the research, authorship, and/or publication of this article.

Funding

The authors received no financial support for the research, authorship, and/or publication of this article.

ORCID iD

Lisa Gandy https://orcid.org/0000-0001-6487-8064

References

1. Thirunavukarasu AJ, Ting DSJ, Elangovan K, Gutierrez L, Tan TF, Ting DSW. Large language models in medicine. Nat Med. 2023;29(8):1930-1940.

2. Xiao C, Xu SX, Zhang K, Wang Y, Xia L. Evaluating reading comprehension exercises generated by LLMs: a showcase of ChatGPT in education applications. In: Proceedings of the 18th Workshop on Innovative Use of NLP for Building Educational Applications (BEA 2023); 2023:610-625.

3. ClinicalTrials.gov. Trends and charts on registered studies. 2024. Accessed October 3, 2025. https://clinicaltrials.gov/about-site/trends-charts

4. Bui DD, Zeng-Treitler Q. Learning regular expressions for clinical text classification. J Am Med Inform Assoc. 2014;21(5):850-857.

5. Frenz CM. Deafness mutation mining using regular expression based pattern matching. BMC Med Inform Decis Mak. 2007;7:32-36.

6. Meric-Bernstam F, Brusco L, Shaw K, et al. Feasibility of large-scale genomic testing to facilitate enrollment onto genomically matched clinical trials. J Clin Oncol. 2015;33(25):2753-2762.

7. Hamer D, Schoor P, Polak TB, Kapitan D. Improving patient pre-screening for clinical trials: assisting physicians with large language models. arXiv preprint arXiv:2304.07396. 2023.

8. Peikos G, Symeonidis S, Kasela P, Pasi G. Utilizing ChatGPT to enhance clinical trial enrollment. arXiv preprint arXiv:2306.02077. 2023.

9. Nievas M, Basu A, Wang Y, Singh H. Distilling large language models for matching patients to clinical trials. J Am Med Inform Assoc. 2024;31(9):1953-1963.

10. Gandy LM, Gumm J, Blackford AL, Fertig EJ, Diaz LA Jr. A software application for mining and presenting relevant cancer clinical trials per cancer mutation. Cancer Inform. 2017;16:1176935117711940.

11. DecisionTreeClassifier. n.d. Accessed March 13, 2025. https://scikit-learn.org/stable/modules/generated/sklearn.tree.DecisionTreeClassifier.html

12. GridSearchCV. n.d. Accessed March 13, 2025. https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.GridSearchCV.html

13. Bossuyt PM, Reitsma JB, Bruns DE, et al. STARD 2015: an updated list of essential items for reporting diagnostic accuracy studies. Radiology. 2015;277(3):826-832.

14. Huang K, Altosaar J, Ranganath R. ClinicalBERT: modeling clinical notes and predicting hospital readmission. arXiv preprint arXiv:1904.05342. 2019.

15. Lee J, Yoon W, Kim S, et al. BioBERT: a pre-trained biomedical language representation model for biomedical text mining. Bioinformatics. 2020;36(4):1234-1240.

Published In

Article first published online: February 23, 2026

Issue published: January-December 2026

Author Contributions

LG had the original study concept. FT wrote the code to interface with large language models and performed data collection. LG and FT conducted the data analysis and co-wrote the initial manuscript draft. LG prepared the final version of the initial manuscript. Both authors reviewed and approved the final manuscript.
