Introduction

Modern medicine is increasingly focusing on patients’ individual aspects and unique characteristics, defining a new field: precision medicine.1 This new approach includes the use of preclinical, clinical, and surgical tools to guarantee the best treatment for each patient. To address this need, surgery has started to evolve towards the creation of instruments that minimize the impact of anatomical variability between patients, which constitutes one of the greatest obstacles to the standardization of surgery.2 To overcome this problem, imaging techniques offer the best tool to thoroughly represent the patient's anatomy, and CT and MRI images are the most suitable instruments, given the high level of detail they offer.3 These techniques, although very important and informative, have intrinsic limits, such as the two-dimensionality of the image and the minimal contrast-enhancement difference between some organs.4 The surgeon, in fact, needs to perform a “building in mind” process to completely understand the anatomy and the spatial relationships between the intra-abdominal organs and between healthy and pathological tissues. This is why three-dimensional virtual models (3DVMs) have gained popularity over time and represent ideal tools to reach the goals set by precision medicine.5 These models are, indeed, easy to understand, rich in detailed information and, most of all, completely patient-tailored. Several publications have already highlighted the advantages of this technology,6–9 which, on the other hand, requires a high level of expertise and latest-generation software.

3D models find application in a large number of scenarios, from the pre-surgical discussion of a clinical case (eg, complex renal mass) to training for novice surgeons,10–12 to intraoperative navigation.13 But the real step forward in this setting is undoubtedly represented by the possibility of being guided by such reconstructions during surgery, performing augmented reality (AR) procedures.

Some preliminary experiences have developed and already tested this AR technology in different surgical settings, mainly for prostate and renal cancer surgery.14 Pioneering results have been achieved for robotic radical prostatectomy (AR-RARP),15 partial nephrectomy (AR-RAPN),16 and kidney transplantation (AR-RAKT) in patients with large atheromatous plaques,17 demonstrating advantages in terms of safety and/or oncologic and functional outcomes.18,19 In all these experiences, a specific software-based system was used to superimpose the 3DVMs on the endoscopic view displayed by the remote da Vinci surgical console via TilePro™ multi-input display technology (Intuitive Surgical Inc., Sunnyvale, CA, USA). Using a 3D mouse, a dedicated assistant could manually pan, zoom, and rotate the 3DVM over the operative field.

However, the constant need for an assistant to correctly overlay the 3D model on the real anatomy during the different phases of the surgical procedure significantly increased the technology-related costs, in terms of both human resources and money, a limitation that needs to be overcome to make this technology widely available.20 To advance in this field of research, we aimed to explore innovative ways to reach a fully automated HA3D™ model overlapping via different strategies. The goal of this study is to present the feasibility of two different technologies that automate the anchoring of the 3DVM to the real organ and to assess their accuracy in the overlapping process.

Patients and Methods

The current prospective observational study aimed to test the feasibility of two different automatic AR software systems and included consecutive patients with a radiological diagnosis of an organ-confined single renal mass and an indication for RAPN from January 2020 to December 2022. Patients were divided into two groups according to the time the procedure was performed (Group A from 01/2020 to 12/2021 and Group B from 01/2022 to 12/2022). The study was conducted in accordance with good clinical practice guidelines, and informed consent was obtained from the patients for the use of the CT scan images to create the 3D models. After consultation with our Ethical Committee, no specific approval was required, because the study focused only on the feasibility of the technology without any influence on intraoperative decision making or outcomes. All patients underwent abdominal four-phase contrast-enhanced computed tomography (CT) within 3 months before surgery. Exclusion criteria were the presence of anatomic abnormalities (eg, horseshoe or ectopic kidney), multiple renal masses, qualitatively inadequate preoperative imaging (eg, CT images with slice acquisition interval >3 mm or suboptimal enhancement), and imaging older than 3 months. In addition, the intraoperative presence of the bioengineer and the software developer was essential for the success of the automatic AR surgical procedure and consequently for the study purpose. Therefore, patients were excluded when either of these professionals was absent.

To perform automatic 3D-AR-guided RAPN, independently of the technological strategy tested, a team of different professionals was needed: first, the bioengineer was responsible for creating the 3D model; second, the software developer had to set up the software for the automatic superimposition; finally, the surgeon was in charge of performing the surgery using these technologies.

Bioengineers working at Medics3D (www.medics3d.com, Turin, Italy) created hyper-accuracy 3D (HA3D™) models using dedicated software starting from multiphase CT images in DICOM format. From these files, as previously described,15–17 images were segmented and the patient-specific model was built, thoroughly displaying the organ, tumor, arterial and venous branches, and the collecting system. Each model was generated in .stl format and then uploaded to a dedicated platform, to be downloaded and displayed by every authorized user.

To perform a totally automatic AR intraoperative navigation, two different strategies were tested by the software developers. The common goal was to infer the 6 degrees of freedom (6-DoF) of the kidney (3 for position and 3 for rotation), in Euclidean coordinates, from the RGB (red, green, blue) images streamed by the intraoperative endoscope. The 6-DoF were used to determine the anchor point for overlaying the 3DVM on the image, so as to augment the endoscope video stream.
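As an illustration of what a 6-DoF pose encodes, the following minimal sketch builds a 4×4 homogeneous transform from three translations and three rotation angles and applies it to a model point. It is not taken from the software described here: the function names, the Z-Y-X rotation order, and the sample pose are assumptions chosen only for the example.

```python
import math

def pose_to_matrix(tx, ty, tz, rx, ry, rz):
    """Build a 4x4 homogeneous transform from a 6-DoF pose.

    Angles are in radians. The rotation is composed as R = Rz * Ry * Rx,
    which is an assumed convention, not one stated in the study.
    """
    cx, sx = math.cos(rx), math.sin(rx)
    cy, sy = math.cos(ry), math.sin(ry)
    cz, sz = math.cos(rz), math.sin(rz)
    return [
        [cz * cy, cz * sy * sx - sz * cx, cz * sy * cx + sz * sx, tx],
        [sz * cy, sz * sy * sx + cz * cx, sz * sy * cx - cz * sx, ty],
        [-sy,     cy * sx,                cy * cx,                tz],
        [0.0,     0.0,                    0.0,                    1.0],
    ]

def apply_pose(matrix, point):
    """Rotate and translate a 3D point (x, y, z) with the given transform."""
    x, y, z = point
    return tuple(row[0] * x + row[1] * y + row[2] * z + row[3]
                 for row in matrix[:3])

# Hypothetical pose: shift 10 units along x, rotate 90 degrees about z.
pose = pose_to_matrix(10.0, 0.0, 0.0, 0.0, 0.0, math.pi / 2)
moved = apply_pose(pose, (1.0, 0.0, 0.0))
print(moved)
```

Applying the same transform to every vertex of a virtual model places it at the estimated position and orientation before it is drawn over the video frame.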

The first strategy employed computer vision (CV) technology, specifically the adaptive thresholding method, which segments an RGB image by isolating those pixels whose color falls inside a defined range. This strategy exploited the distinctive reddish shade of the kidney to isolate it from the rest of the image and allowed the use of the organ itself as a “landmark” to determine the position for the 3DVM. Rotation of the superimposed virtual model was obtained using heuristics that fitted the segmented kidney's borders to a bounding ellipse and then predicted the organ's rotation from the orientation of the ellipse's minor and major axes. These heuristics worked under some empirical assumptions concerning the range of rotations the kidney may assume intraoperatively, as it is constrained by its blood vessels and other structures. One of the main challenges was the color spectrum of the intraoperative field shown by the endoscopic camera, which consists mainly of shades of red that sometimes defeated the segmentation by thresholding.
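The idea of color-range segmentation followed by an orientation estimate can be sketched in a few lines. This is a simplified stand-in, not the IGNITE implementation: it uses a fixed color range rather than adaptive thresholding, and it derives the major-axis angle from second-order image moments (the equivalent ellipse) instead of an explicit ellipse fit. The function names, the color bounds, and the toy image are all assumptions.

```python
import math

def threshold_mask(image, lo, hi):
    """Binary mask: 1 where every RGB channel lies inside [lo, hi]."""
    return [[1 if all(l <= c <= h for c, l, h in zip(px, lo, hi)) else 0
             for px in row]
            for row in image]

def centroid_and_orientation(mask):
    """Return ((cx, cy), theta) for the foreground pixels of a mask.

    theta is the angle (radians) of the major axis of the equivalent
    ellipse, computed from central second-order moments.
    """
    pts = [(x, y) for y, row in enumerate(mask)
           for x, v in enumerate(row) if v]
    if not pts:
        return None
    n = len(pts)
    cx = sum(x for x, _ in pts) / n
    cy = sum(y for _, y in pts) / n
    mu20 = sum((x - cx) ** 2 for x, _ in pts) / n
    mu02 = sum((y - cy) ** 2 for _, y in pts) / n
    mu11 = sum((x - cx) * (y - cy) for x, y in pts) / n
    theta = 0.5 * math.atan2(2 * mu11, mu20 - mu02)
    return (cx, cy), theta

# Toy 7x5 frame: a horizontal reddish bar on a dark background.
red, dark = (180, 40, 40), (20, 20, 20)
image = [[red if (y == 2 and 1 <= x <= 5) else dark for x in range(7)]
         for y in range(5)]
mask = threshold_mask(image, lo=(120, 0, 0), hi=(255, 90, 90))
center, angle = centroid_and_orientation(mask)
print(center, angle)
```

The centroid gives the in-plane position for anchoring, and the angle gives one in-plane rotation; the remaining rotations would still need the empirical assumptions described above.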

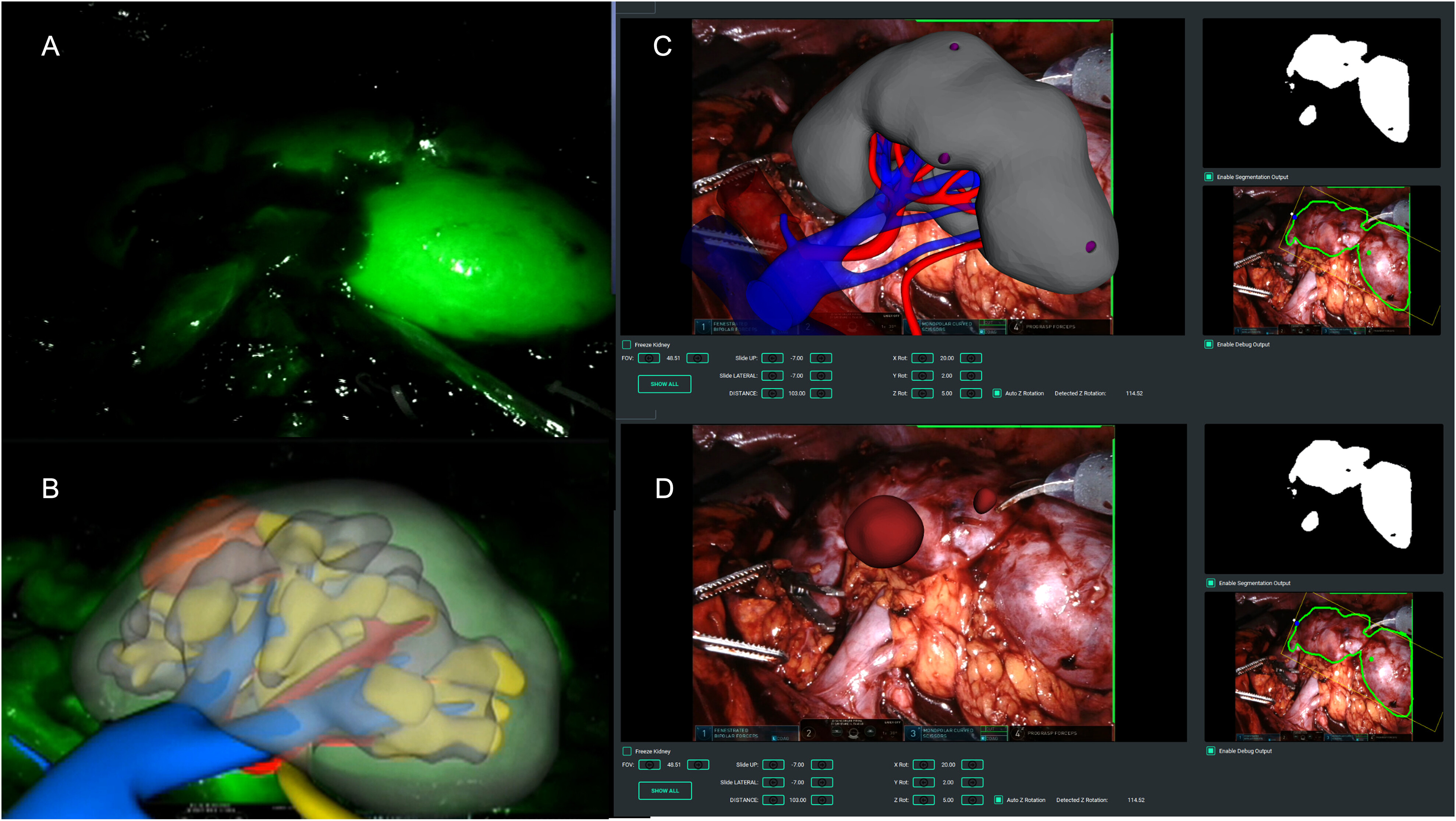

The issue of similar colors across the surgical field was overcome by super-enhancement of the target organ's color, using the NIRF Firefly® fluorescence imaging technology offered by the dedicated da Vinci camera.21 The final software (named IGNITE) was able to identify the correct position of the real organ, leveraging its bright green appearance against the dark surrounding background, and to automatically anchor the 3D virtual kidney.22

The second strategy was based on machine learning, specifically convolutional neural network (CNN) technology, which can process live images directly from the endoscope. To make this technology work, frames were extracted from prerecorded videos of robotic partial nephrectomies, so that the CNN could be trained to recognize the kidney's position and segment its tissue.23 Starting from a self-assembled set of tagged kidney images obtained from a video library of RAPNs, the software developer processed the data using ResNet-50 (ie, Residual Network) with its output layer customized to return the kidney segmentation mask. This mask is an image whose black pixels identify the background and elements not of interest, red pixels identify the kidney, and blue pixels identify the medical instruments. The resulting network, once trained, was able to take the frames from the endoscope as input and to output this segmentation mask, which was later processed by CV algorithms as in the first method. The rotation of the organ can be evaluated by analyzing the geometric properties of the kidney, with particular attention to the organ's center of mass, the orientation of its major and minor axes, and the dimension of the organ itself. Once these data had been set, the final software (named iKidney) was able to process them and superimpose the 3D model over the live endoscopic video images, transmitting the merged result to the robotic console. The CNN-based strategy was able to circumvent the need for fluorescence by providing very accurate segmentation of the kidney from the endoscope RGB images. (Note: in terms of Intersection over Union [IoU], the standard measure of segmentation CNN performance, our trained network scored between IoU = 0.937 and IoU = 0.985.)
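To make the IoU figure concrete, the sketch below computes Intersection over Union for the kidney class from two color-coded masks that follow the scheme described above (black background, red kidney, blue instruments). The exact RGB values, the function names, and the toy masks are assumptions for illustration and are not the study's data.

```python
RED, BLUE, BLACK = (255, 0, 0), (0, 0, 255), (0, 0, 0)

def kidney_pixels(mask, kidney_color=RED):
    """Collect the (x, y) coordinates labelled as kidney in a color mask."""
    return {(x, y)
            for y, row in enumerate(mask)
            for x, px in enumerate(row)
            if tuple(px) == kidney_color}

def iou(predicted, reference):
    """Intersection over Union of two pixel sets (1.0 if both are empty)."""
    union = predicted | reference
    if not union:
        return 1.0  # convention chosen here: two empty masks agree perfectly
    return len(predicted & reference) / len(union)

# Toy 3x2 masks: the prediction is shifted one column left of the reference.
predicted_mask = [[RED, RED, BLACK],
                  [RED, RED, BLUE]]
reference_mask = [[BLACK, RED, RED],
                  [BLACK, RED, RED]]

score = iou(kidney_pixels(predicted_mask), kidney_pixels(reference_mask))
print(round(score, 3))
```

An IoU of 1 means the predicted and reference kidney regions coincide exactly, so values such as those reported above indicate near-complete overlap.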

All RAPNs were performed by a highly experienced surgeon using one of the two technologies described. Regardless of the technology used, after trocar placement and robot docking, the anterior face of the kidney was exposed in order to visualize the organ.

When the CV-based strategy was used, indocyanine green (ICG, 0.1–0.5 mg/kg) was injected intravenously, so that the whole organ appeared bright green while the surrounding tissues remained dark grey or black. At this point, the registration phase (ie, the 3DVM overlay) required a precise evaluation of both the position and the rotation of the kidney: the green kidney was enclosed in a virtual box and its pixels were tested to find the most probable center of mass, which set the x and y coordinates of this point. The rotation of the organ was partially hampered by the presence of the vascular pedicle and the ureter, so the model overlay may be less precise. For this reason, fine-tuning required a professional operator to manually adjust the model's position and minimize mismatches. The model was then automatically anchored, allowing the surgeon to switch to normal color vision and perform the robotic surgery.

When the second, CNN-based strategy was used, the hindrances represented by the vessels and collecting system were excluded by the software, which automatically limited the degrees of freedom, recalculated the rotations, and produced an adjusted result. After this recalculation, the iKidney software used the information to superimpose the 3DVM on the live endoscopic images.

In the case of endophytic masses, regardless of the technology used, the surgeon marked the lesion's perimeter on the organ's surface, guided by the 3DVM, and then performed the surgery following the plane indicated by the model in an automatic AR fashion (Figure 1).

For each patient enrolled in the study, we prospectively collected demographic data including age, body mass index (BMI), comorbidities classified according to the Charlson Comorbidity Index (CCI),24 and clinical tumor size, side, location, and complexity according to the PADUA score.25 Perioperative data including management of the renal pedicle, type and duration of ischemia, the anchoring time of the 3D model, static and dynamic overlap errors, and postoperative complications graded according to the Clavien-Dindo classification26 were also collected. Finally, pathological data including TNM stage and functional outcomes (ie, serum creatinine and estimated glomerular filtration rate [eGFR] 3 months after surgery) were collected.

Statistical Analysis

Patient characteristics were compared using Fisher's exact test for categorical variables and the Mann-Whitney test for continuous variables. Results were expressed as median (interquartile range [IQR]) or mean (standard deviation [SD]) for continuous variables, and as frequency and proportion for categorical variables. Baseline and postoperative functional data (eg, serum creatinine, eGFR) were compared using paired-sample t-tests. Data were analyzed using Jamovi v.2.3. The reporting of this study conforms to STROBE guidelines.27 No power calculation was performed to estimate the sample size for the study.
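For readers unfamiliar with Fisher's exact test, the sketch below shows what it computes for a 2×2 table of categorical counts. The analyses in this study were run in Jamovi; this standalone function, its name, and the example counts are purely illustrative assumptions and do not reproduce any study data.

```python
from math import comb

def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test p-value for the 2x2 table [[a, b], [c, d]].

    Sums the hypergeometric probabilities of all tables with the same
    margins that are no more likely than the observed table.
    """
    row1, row2 = a + b, c + d
    col1 = a + c
    total = row1 + row2
    denom = comb(total, col1)

    def prob(k):
        # Probability that the top-left cell equals k given fixed margins.
        return comb(row1, k) * comb(row2, col1 - k) / denom

    p_observed = prob(a)
    k_min = max(0, col1 - row2)
    k_max = min(row1, col1)
    # Small relative tolerance guards against floating-point ties.
    return sum(prob(k) for k in range(k_min, k_max + 1)
               if prob(k) <= p_observed * (1 + 1e-9))

# Hypothetical counts: an event in 3 of 4 patients in one group
# versus 1 of 4 in the other.
p_value = fisher_exact_two_sided(3, 1, 1, 3)
print(round(p_value, 4))
```

With groups this small the test cannot reach conventional significance, which is one reason exact tests are preferred over chi-square approximations in small cohorts.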

Discussion

Among the new ancillary technologies created to guide the surgeon intraoperatively during robotic procedures,21,28,29 augmented reality currently plays an increasingly interesting role.5 The main advantage of this technology is the possibility of avoiding the “building in mind” process necessary to understand two-dimensional cross-sectional imaging; in addition, the surgeon can be constantly assisted during the intervention by a virtual model reproducing the patient-specific anatomy.15,16 Up to now, AR has been used intraoperatively to allow the co-registration of 3D models over the real anatomy, but the process was complex and totally manual, requiring the presence of an expert operator. In addition, this process may lose precision depending on the operator's experience (eg, misunderstanding of the kidney's rotation) and because of discrepancies with the real anatomy.20 The real advance in this field was therefore represented by the possibility of being guided by such reconstructions with an independent and totally automatic anchoring system, with the aim of performing pure automatic AR procedures,30–32 potentially overcoming the current limitations given by the imprecise knowledge of the different anatomical structures of interest when performing RAPN. Even if the anatomy of the kidney and renal pedicle is properly studied preoperatively, the intraoperative location of such structures, particularly the vessels, is not always clear, leading many surgeons to adopt surgical approaches in which there is no need to identify, isolate, dissect, and clamp the renal pedicle. This is the case with preoperative arterial embolization and off-clamp approaches, in which the enucleation is conducted without any renal pedicle management.33,34 However, even in these cases, automatic AR technology can help by giving the surgeon, especially one still on the learning curve, constant and accurate knowledge of the location of the arterial branches, so that he or she can confidently embark on an off-clamp approach, knowing that a switch to selective or global clamping is possible if needed.

With the current study, we present our preliminary experience of automatic AR-RAPN aided by two different types of technology, based on new artificial intelligence strategies that leverage image detection and processing as well as deep learning. These artificial intelligence tools have spread widely in recent years, being able to analyze a huge amount of data in a short time, shortening the diagnostic workup and data processing, as for COVID-19 at the time of the pandemic.35,36 Focusing on a selection of patients' characteristics and symptoms, deep learning-based software was able to score the risk of COVID-19 infection.35 Similarly, some deep learning algorithms were able to identify interstitial pneumonia after analysis of a huge number of CT scan images,36 while others contributed to nutritional monitoring in medical cohorts by automatically analyzing the food habits of a selected population.37 Leveraging the potential shown by this type of study, we tested deep learning strategies applied to intraoperative images during RAPN, to make the target organ identifiable by the software and to allow the anchoring of the 3DVM in a totally automatic manner.

The first strategy, based on computer vision (CV) algorithms, requires the identification of intraoperative anatomical landmarks serving as reference points. In our previous experience, we applied this technology during robot-assisted radical prostatectomy (RARP) using the bladder catheter as a reference,38 while during RAPN we decided to use the whole dissected kidney as the intraoperative landmark to be identified by the software. To make it easier for the software to detect, it was also super-enhanced by the use of ICG.

The automatic ICG-guided AR technology was able to anchor the 3DVM to the real kidney without human intervention in all cases, with a mean registration time of 7 s. In particular, the IGNITE software correctly estimated the position of the kidney and its orientation along the three spatial axes and kept the model overlapped even during camera movements.22 However, an assistant was required to fine-tune the overlay of the 3DVM, focusing in particular on the rotation of the organ in the abdominal cavity. In addition, ICG may have enhanced some microvascular features, potentially leading to overlapping errors.

This first strategy encountered some technical limitations, mainly due to specific intraoperative factors such as light variations, endoscope movements, and ICG-related color differences reflecting vascular heterogeneity.

To overcome these issues, a new software version based on a convolutional neural network (CNN) was created.23 Thanks to this technology, each pixel belonging to the kidney could be independently identified, avoiding the need for specific landmarks. By manually extracting and tagging still frames from recorded robotic surgery procedures, it is possible to determine the rotation, position, and scale assumed by the organ. The tagged images are then used as a training set for deep learning algorithms able to assist the intraoperative 3D organ registration.

Thanks to the CNN, it was possible to detect the kidney from its intrinsic image characteristics (ie, pixels) rather than its ICG-based appearance, significantly improving organ detection and making the intraoperative ICG injection unnecessary. With this second technology, the identification of the kidney's border was more precise, increasing the registration success during preliminary computational tests. In fact, when comparing the two strategies in terms of co-registration outcomes, the latter required a longer time but never failed to anchor the virtual model to the real organ (Table 3).

However, this second technology showed some limitations, such as an occasional lack of precision in anchoring the model mask to the real kidney, probably due to co-registration errors of the 3DVM along its three main axes. Nevertheless, the tests performed on this second technology showed that the iKidney software was able to guarantee a visually accurate 3DVM overlay during surgery (as assessed by the surgeons performing the procedure), provided that at least one of the axes of the kidney mask was fully visible.

Since a fully automatic method is not yet available, minimal human assistance was still required to set the origin of the axes during the initial registration phase (ie, fixing the rotation and/or correcting the translation).

Another issue that may decrease the accuracy of the CNN technology is the movement of the robotic instruments during the 3DVM co-registration process. De Backer et al39 recently published an intriguing study specifically addressing this current limitation of AR, describing an algorithm based on deep learning networks able to detect in real time all “nonorganic” items (eg, instruments) in the surgical field during RAPN and robot-assisted kidney transplantation.

All this considered, one open question remains: how many and which technologies can be integrated to create the “ultimate” artificial intelligence software, able to perform automatic co-registration of a virtual model over the real anatomy in a live surgical setting with a high degree of precision? The growing body of literature continues to provide new evidence in this field, analyzing its many potential applications.40

There are several limitations in this study. The software is currently at an embryonic and experimental stage and can only be used for a well-defined part of the surgical procedure: it needs the target organ to be stationary in the surgical field, with no instruments (eg, robotic scissors or clamps) obstructing the overlapping process. In addition, at present, many professionals are needed, with a large commitment of human resources. However, with continued development, these limitations are expected to diminish as the automatic co-registration process is standardized.

Future research in this field will aim to improve the implementation of these algorithms in order to make the co-registration process faster and more efficient, without any need for human assistance. To make this possible, not only is a larger amount of data needed, but a translational process able to integrate all the artificial intelligence software is also encouraged, to manage the complexity of such a multifaceted, developing technological tool.