Introduction

Alarm sequences in telecommunication network infrastructure are critical information sources for identifying performance degradation, faults, and recovery events across the network and within its distributed components. Radio Access Network (RAN) base stations and other infrastructure units emit these alarms, which are crucial for operational monitoring. In most cases, however, alarms are treated in isolation or with limited temporal context. This siloed handling constrains the predictive and diagnostic utility of alarm data, particularly in high-density or fault-prone environments. Recent works in time series analysis, pattern mining,

1 and probabilistic modelling

2 offer promising opportunities to transform these alarm logs into interpretable and actionable insights.

3 However, most existing approaches rely on frequent pattern extraction, sequence classification, or anomaly detection without integrating prediction and causality inference.

4 The quantification of alarm resilience is seldom addressed, and even when it is, it is not done within a unified framework.

This work introduces RAPTOR (Resilience-Aware Prediction and Tracking of Operational Risks from Alarm Information Flows), a data-driven pipeline that models alarm sequences generated from RAN base stations across telecommunication networks. By combining temporal clustering (via Dynamic Time Warping), Markov transition modelling, and resilience inference, the proposed framework uncovers the temporal and causal structure among alarms. Further, based on the identified causality, the framework outlines a method for forecasting future alarm events using interpretable probabilistic models and quantifies the resilience or fragility of specific alarms based on their propagation and resolution behaviours. This work hypothesises that it is possible to stratify patterns in alarm co-activation, transition complexity, and risk potential in each base station. This is done by analysing alarm dynamics across base stations with varying levels of alarm activities.

The findings demonstrate that when the temporal behaviours of the alarms in each base station are encoded, even lightweight statistical models can yield accurate, explainable predictions and serve as a foundation for network resilience engineering. The core issue with telecommunication system alarms is that, despite abundant alarm data, it is challenging to extract actionable insights that quantify alarm behaviour and capture alarm interactions. Temporal irregularity in alarm occurrence and misalignment in the alarm logs are significant obstacles: multiple underlying faults can trigger the same alarm, and alarms arrive unpredictably at uneven time intervals. Consequently, a robust alignment technique is required to compare and interpret alarm patterns effectively, as fixed-window or synchronous models fail to handle this variability.

Further, because alarm logs lack explicit causal labels, many alarms may appear as infrequent, isolated events, requiring approaches to discover the hidden causality. Sparse alarm transitions also reduce the discoverability of alarm causality sequences and their meaningful interpretation, which limits the use of approaches such as supervised learning due to minimal ground truth information. Approaches such as those using deep learning

4 operate as black boxes. The factors of alarm transitions, causality, persistence, escalation, and recovery potential are mostly missing from these approaches, thereby limiting operational understanding and decision-making across the network infrastructure. The complexity of network infrastructure and operational methods at the base station level gives rise to many distinct alarm types, with significant variations in alarm activation patterns across base stations. This high dimensionality and heterogeneity across base stations require sensitive and contextual models that can adapt to localised behaviour and operating conditions.

Considering the inherent challenges of real-world alarm logs, the RAPTOR framework proposes a resilience-aware prediction and tracking of operational risks from information flows in the form of alarm transitions, reusable across heterogeneous base stations. The following three research questions form the underlying core of this framework:

RQ1 Temporal Causality: How can temporal similarity and sequential alignment techniques be used to uncover latent causal structures in alarm sequences across heterogeneous telecommunication base stations?

RQ2 Predictive Modelling: To what extent can first-order Markov models accurately forecast the next alarm events in time-ordered sequences, and how does this vary across base stations with differing activity levels?

RQ3 Resilience Quantification: How can the resilience of individual alarms be quantified using transition-based metrics, and what insights do these measures provide about fault persistence, escalation risk, and system recovery?

Considering these three research questions, RAPTOR proposes a unified and interpretable framework for analysing, forecasting, and interpreting alarm dynamics in telecommunication base stations. Unlike prior approaches that focus narrowly on correlation mining,

5,6 or black-box prediction,

3 the proposed method integrates temporal alignment, probabilistic modelling, and resilience assessment into a coherent pipeline. This approach can help network managers and maintenance engineers schedule and plan maintenance of critical telecommunications infrastructure by providing early warning, causality tracing, root-cause insights, and alarm interpretability.

Related work

Telecommunication base station alarms have always served as critical metrics for service disruption diagnosis and management. Several efforts have been made to understand the behaviour of alarm systems through the analysis of causal and temporal dependencies across the network. Early efforts in telecom alarm management relied on expert knowledge and rule-based methods to identify the correlation between alarms and their possible causes. Brugnoni et al.

7 proposed a real-time fault diagnosis system for the Italian telecom network using a heuristic framework combining alarm pattern identification, fault hypotheses selection, and investigatory explanation. Jakobson and Weissman

5 presented the idea of alarm correlation and defined fault propagation as an acyclic graph. In this graph, the edges represent the causally related alarms. These early works demonstrated the utility of alarm grouping and filtering based on known dependency rules. However, these efforts were limited due to the challenges arising from human knowledge acquisition and increased network complexity and size.

Subsequent efforts include the study conducted by Bouloutas et al.,

8 which used protocol models to explore and assess alarm faults. The study assumed predefined network models and did not consider data-driven insights from alarm logs, limiting generalisability. Subsequent studies attempted to explore the application of data-mining approaches for analysing recurrent temporal and correlation patterns among the alarms. A notable method is the Telecommunication Network Alarm Sequence Analyser (TASA), proposed by Klemettinen et al.

9 TASA treats alarm logs as event sequences and uses episode mining methods to identify recurring alarm sequences.

Another branch of work has focussed on measuring similarity and building networks of alarms based on historical co-occurrence patterns. Lin et al.

10 argue that directly computing pairwise similarity (e.g. via Euclidean distance or Dynamic Time Warping) on alarm time series can be misleading, because alarms may have complex positive or negative correlations. They propose a shuffling-based similarity index that measures the likelihood of the observed overlap of two alarm sequences compared to random chance, which is then used to construct device-to-device alarm correlation networks for the functional grouping of network elements that often experience concurrent alarms. Fournier-Viger et al.

6 model the telecom network as a dynamic graph of nodes (network elements) and links, and then extract alarm correlation rules that describe how alarms spread through that graph.

Beyond descriptive correlation, statistical and machine learning models were used to predict impending alarms or faults based on observed alarm sequences. Salfner et al.

11 developed a Semi-Markov “Similar Events Prediction” model that treats each alarm type as a state and uses sojourn times to estimate the probability of a failure within a specified window; it proved effective in live telecom systems but suffered from state-space explosion as network size grew. Salaun et al.

12 introduced the DIG-DAG structure to compactly encode all possible alarm chains in a log, and a querying mechanism to extract predictive patterns from this structure. Building on this, Desbouvries et al.

13 analytically compared recurrent neural networks (RNNs) with Hidden Markov Models (HMMs) for modelling alarm time series. While HMMs rely on a fixed number of states, RNNs (especially LSTM networks) can, in principle, capture longer and more complex sequences of alarm dependencies. Li et al.

3 present a data-driven alarm prognosis model for cellular base stations that uses an ensemble of deep learning classifiers to tackle the heterogeneity and class imbalance in alarm data by extracting a rich set of features (e.g. alarm counts, durations, timestamps) and training multiple learners whose outputs are combined.

Telecommunication networks are also characterised by alarm storms, in which a single fault triggers compounding alarm activity. Alarm storms complicate network operations and create the need to quantify alarm resilience. In essence, it becomes important to understand the propensity of an alarm to escalate into other alarms or to self-resolve. Recent work has explored this avenue by modelling alarm escalation patterns, recurrence, and absorption behaviours. Abele et al.

14 presented the idea of Root Cause Alarms to be the instigators of an alarm storm and suggested the early detection of these alarms to reduce cascading effects. Li et al.

15 developed an unsupervised association mining approach to quantify each alarm’s propagation tendency in a telecom network. The approach first clusters alarms, discards duplicates, and then identifies the root causes with an accuracy of 91%. Wang et al.

16 proposed the Alarm Behaviour Analysis and Discovery (AABD) framework to capture the flapping and parent-child dependencies across a 2G–4G network. The dependencies are used to derive per-type flapping and escalation metrics. Zhao et al.

17 developed a time-decay factor to identify recent alarms influencing resilience. Despite these advancements, most studies focussing on resilience assessments remain retrospective and are rarely coupled with real-time alarm prediction. The existing approaches do not sufficiently capture the critical factors for proactive risk mitigation, that is, the dynamics between escalation, suppression, and retriggering of alarms.

While prior work has made significant advances in temporal pattern mining, event prediction, and resilience evaluation, these areas have evolved mainly in parallel. Existing systems typically lack a unifying framework that connects prediction, interpretation, and resilience quantification in a data-driven yet operationally meaningful way. RAPTOR addresses this prevailing gap by unifying three capabilities that existing alarm-analysis tools treat in isolation: it captures the temporal causality that underlies alarm cascades, generates resilience-aware forecasts of forthcoming alarms, and quantifies alarm behaviour through metrics such as absorption time, recurrence propensity, and escalation likelihood.

Methodological framework

The RAPTOR framework evolved from an iterative, data-driven research process grounded in the previous “Alarm Webs” framework.

1 Alarm Webs demonstrated that it is possible to uncover co-activation patterns across a RAN base station’s temporally aligned alarm sequences. However, when large-scale RAN data involves multiple base stations, three limitations become apparent: (a) the absence of predictive capabilities, (b) the lack of a probabilistic interpretation of alarm transitions, and (c) the inability to quantify alarm stability or fragility. RAPTOR addresses these gaps through successive design cycles using real operational RAN base-station alarm data, evolving into a unified pipeline for causality discovery, forecasting, and resilience assessment of RAN alarms.

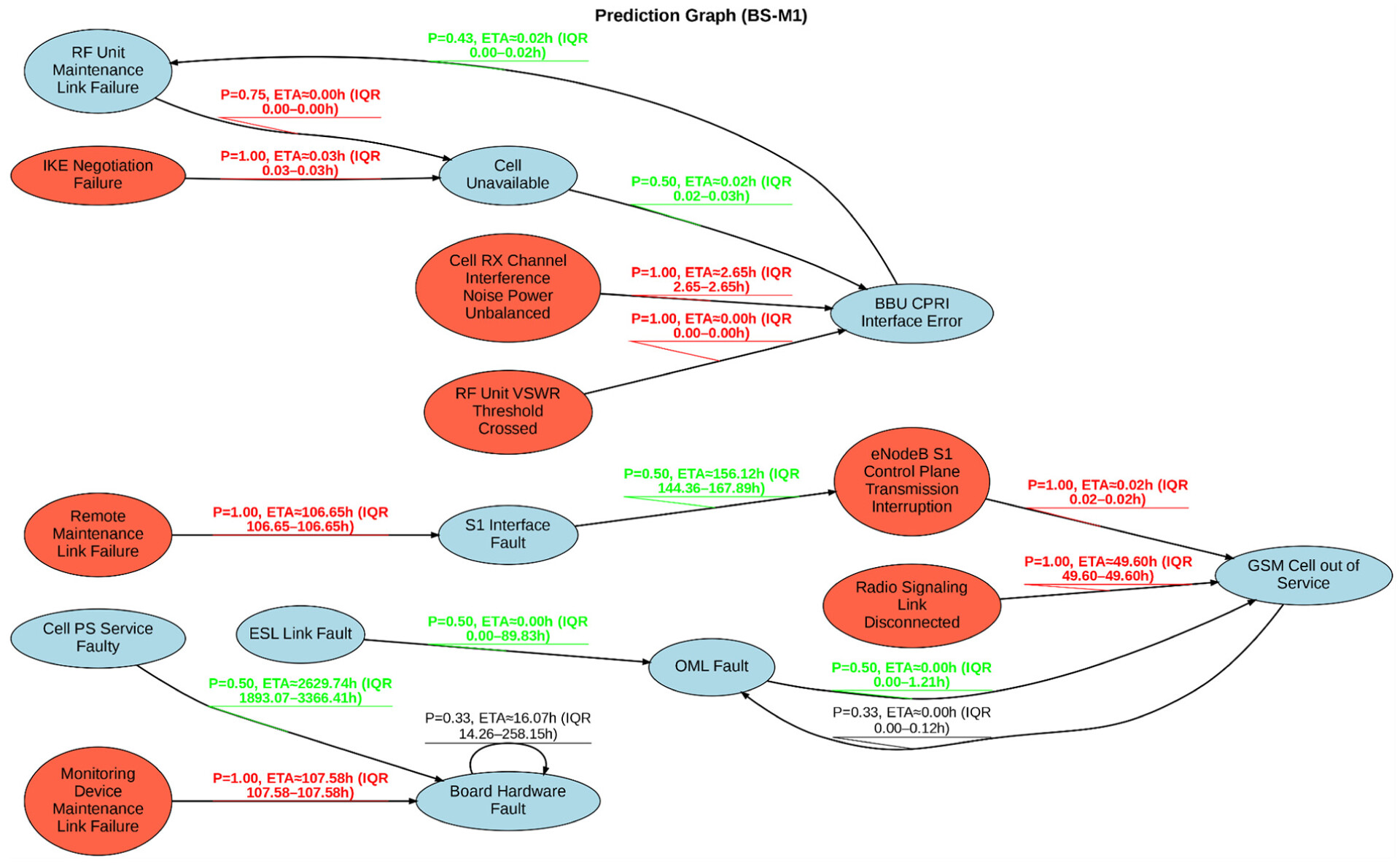

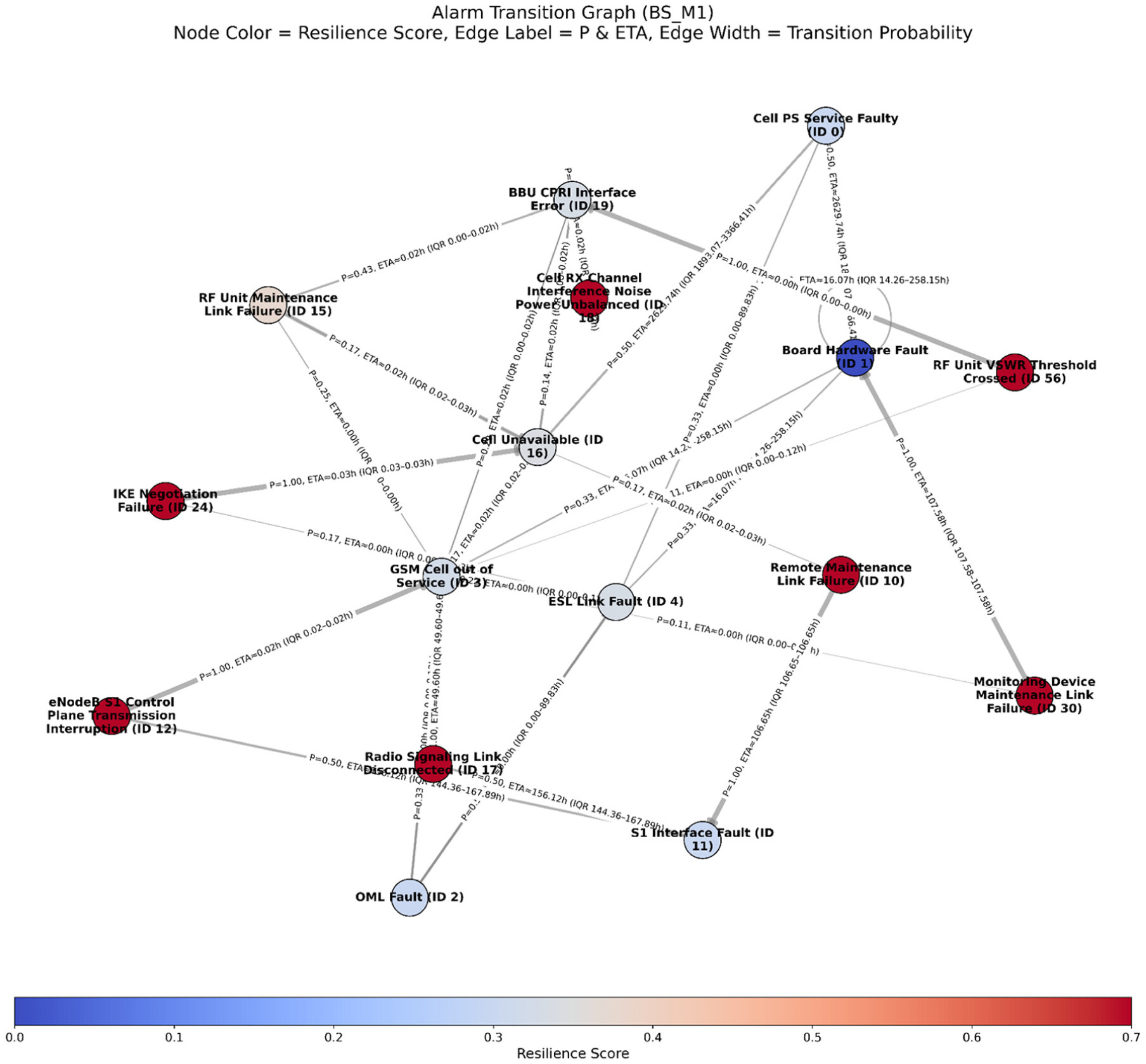

Figure 1 outlines the proposed RAPTOR framework. This framework is designed to interpret alarm behaviour across base stations by integrating temporal clustering, Markov modelling, and resilience analytics of the alarms. The raw alarm logs from RAN base stations are modelled as temporally structured sequences, which are then used to identify co-activated alarm patterns, model probabilistic transitions, and quantify network resilience to enable prediction, interpretability, and early detection of cascading risks in telecommunication networks from alarm logs alone.

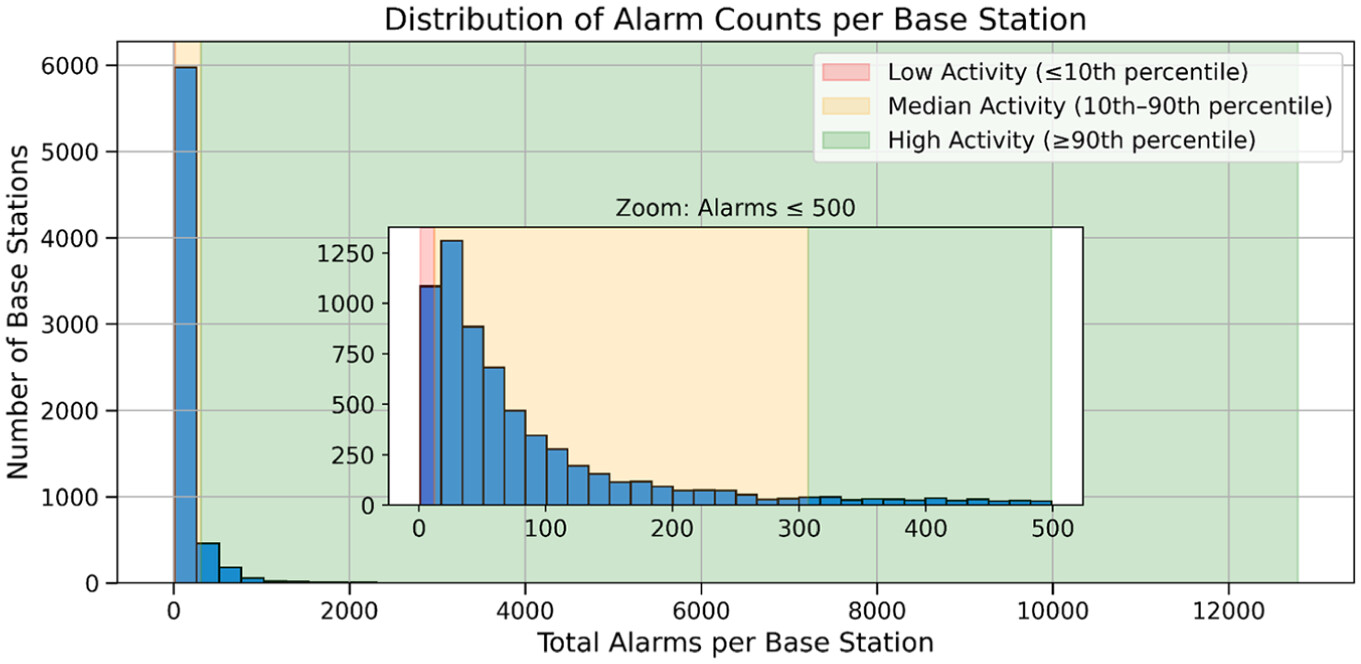

The alarm log dataset from a leading UK telecommunications network operator, spanning 2019–2021, was used for this framework. Each alarm has 13 attributes that capture the severity, type and base-station ID, location, timestamps for occurrence/clearance/acknowledgement, cleared and acknowledged status flags, log and equipment serials, and the base station’s maintenance status. These fields support temporal sequencing, spatial analysis, and station-level context across approximately 12,000 base stations. RAPTOR uses this comprehensive schema to model the alarm propagation within RANs (and not between RANs), and extends this to next-alarm prediction, and resilience assessment.

Figure 2 illustrates the distribution of alarm occurrences across the dataset.

Data processing and encoding

In the data logs for the RAN base station alarms, each base station generates a continuous, time-ordered sequence of alarm events. To ensure the reliability of temporal event ordering, records with missing information are removed, and timestamp values are converted to a standard format. The alarms are treated as a sequence of transitions from one alarm to the next by associating each alarm entry with the time of occurrence and the base station ID where it was triggered. Unique numerical identifiers are assigned to each alarm type to enable consistent referencing across the dataset. These sequences also include a “No Alarm” state denoted by ϕ in this paper. This state is treated as a first-class alarm type and is not synthetically introduced. A “No Alarm” label is added only when an alarm represents the final event in a sequence (which is analogous to a leaf node in a causal graph representing the temporal chain of alarm-triggering events). A transition of the form $A_i \rightarrow \phi$ represents the empirical resolution of an alarm into a stable state.

For each base station, a station-specific analysis is performed using the ordered sequence of alarms extracted. These sequences are then used to create a temporal activation profile for every alarm type (within each base station), capturing the time intervals between consecutive activations (in hours). For each alarm type within a base station, a cumulative temporal activation profile is constructed. This profile encodes the elapsed time since first activation (i.e. $t_k - t_1$ for the $k$-th activation occurring at time $t_k$) and is used as input to DTW. It represents the global temporal evolution of the alarm’s activity, rather than capturing only its local inter-arrival gaps. The complete set of these alarm profiles (a profile is valid only if its alarm appears at least twice) constitutes the alarm activity dictionary for each base station.

Algorithm 1 summarises the process.
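As an illustration of the encoding step described above, the following is a minimal sketch rather than a reproduction of Algorithm 1. It assumes that the events of a single base station are available as (timestamp, alarm identifier) pairs; the function name `build_activation_profiles` and the sample data are hypothetical.

```python
from collections import defaultdict
from datetime import datetime


def build_activation_profiles(events):
    """Build the ordered alarm sequence and cumulative activation profiles.

    events: iterable of (timestamp, alarm_id) pairs for ONE base station
    (an assumed input layout, not the dataset's actual schema).
    """
    ordered = sorted(events, key=lambda e: e[0])

    # Collect activation timestamps per alarm type, in time order.
    stamps_by_alarm = defaultdict(list)
    for ts, alarm in ordered:
        stamps_by_alarm[alarm].append(ts)

    profiles = {}
    for alarm, stamps in stamps_by_alarm.items():
        if len(stamps) < 2:
            continue  # a valid profile needs at least two activations
        first = stamps[0]
        # Elapsed hours since the first activation of this alarm type.
        profiles[alarm] = [(ts - first).total_seconds() / 3600.0 for ts in stamps]

    sequence = [alarm for _, alarm in ordered]
    return sequence, profiles


events = [
    (datetime(2020, 1, 1, 0, 0), 1),
    (datetime(2020, 1, 1, 2, 0), 2),
    (datetime(2020, 1, 1, 3, 0), 1),
    (datetime(2020, 1, 1, 9, 0), 1),
    (datetime(2020, 1, 2, 0, 0), 3),
]
sequence, profiles = build_activation_profiles(events)
```

In this toy input, only alarm 1 occurs at least twice, so it is the only entry of the resulting alarm activity dictionary.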

Similarity estimation and temporal clustering

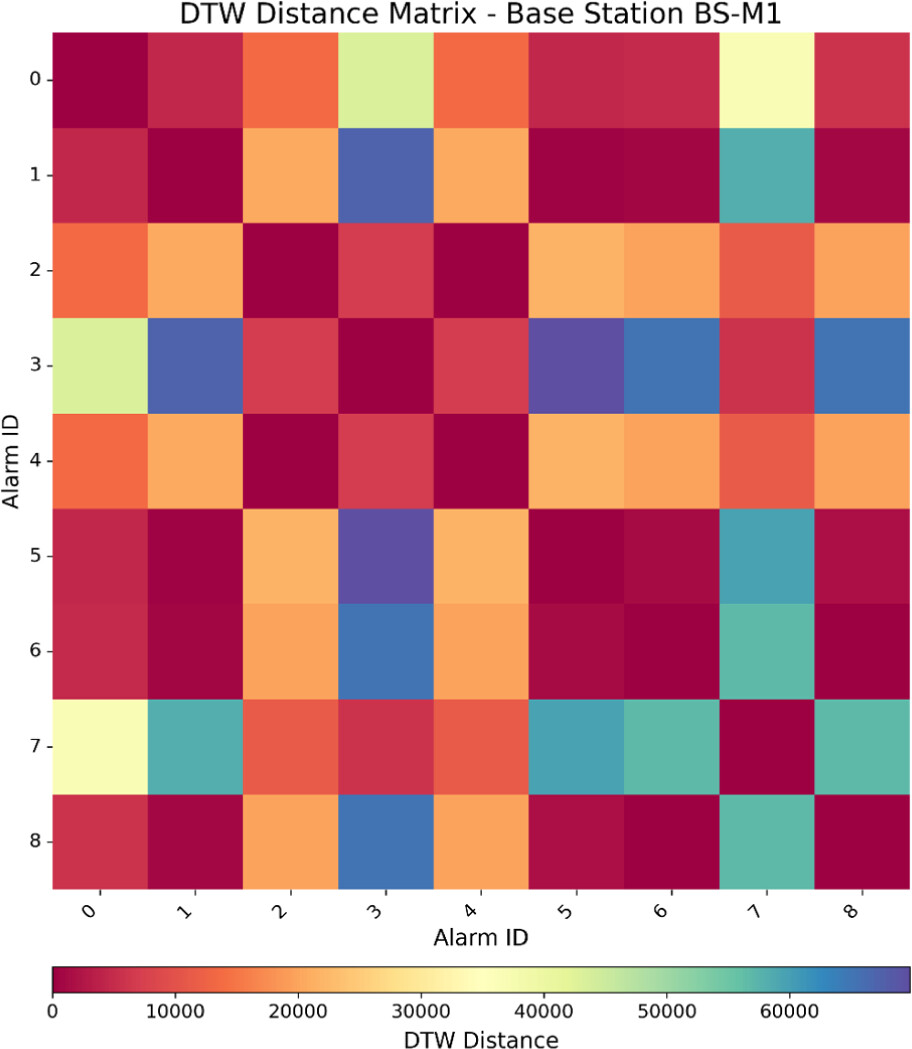

The quantification of similarity between each base station’s temporal alarm activation profiles is implemented using Dynamic Time Warping (DTW). This results in a symmetric matrix containing pairwise distances representing the temporal relationships among all valid alarms (a DTW matrix is illustrated as a heatmap in Figure 3). This matrix provides a basis for clustering alarms by considering the comparable activation behaviour of temporal sequences. Agglomerative hierarchical clustering is applied on the condensed DTW distance matrix to merge alarms with minimal temporal dissimilarity, thereby creating an initial behavioural profile of the alarms. The resulting dendrogram, as shown in Figure 4, denotes functional or causal proximity of the alarms after grouping these alarms into clusters. The choice of DTW allows for the analysis of sequences with varying lengths and non-linear time shifts as compared to rigid metrics like Euclidean or Manhattan distance.

18,19 Moreover, compared to other plausible approaches in similar problem domains, such as Longest Common Subsequence (LCSS), Smith-Waterman (SW), and Needleman-Wunsch (NW) algorithms, DTW offers better trade-offs between computation efficiency and temporal structure-preserving accuracy.
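The pairwise DTW computation can be sketched as follows. This is a textbook dynamic-programming formulation with an absolute-difference local cost and no warping window, which are assumptions; the paper does not state which DTW variant or library was used. The names `dtw_distance` and `dtw_matrix` and the sample profiles are hypothetical.

```python
def dtw_distance(a, b):
    """Classic O(len(a) * len(b)) DTW with absolute-difference local cost."""
    n, m = len(a), len(b)
    inf = float("inf")
    cost = [[inf] * (m + 1) for _ in range(n + 1)]
    cost[0][0] = 0.0
    for i in range(1, n + 1):
        for j in range(1, m + 1):
            d = abs(a[i - 1] - b[j - 1])
            # Extend the cheapest of the three admissible predecessor cells.
            cost[i][j] = d + min(cost[i - 1][j], cost[i][j - 1], cost[i - 1][j - 1])
    return cost[n][m]


def dtw_matrix(profiles):
    """Return sorted alarm ids and the symmetric pairwise DTW distance matrix."""
    keys = sorted(profiles)
    dist = [[dtw_distance(profiles[p], profiles[q]) for q in keys] for p in keys]
    return keys, dist


# Hypothetical cumulative activation profiles (elapsed hours), unequal lengths.
profiles = {
    1: [0.0, 3.0, 9.0],
    2: [0.0, 3.0, 3.5, 9.0],
    3: [0.0, 20.0, 40.0],
}
keys, dist = dtw_matrix(profiles)
```

Alarms 1 and 2 end up much closer to each other than either is to alarm 3, even though their profiles differ in length. For the clustering step, the square matrix could be converted with SciPy's `scipy.spatial.distance.squareform` and passed to `scipy.cluster.hierarchy.linkage`; the linkage criterion would be a further assumption, since it is not specified here.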

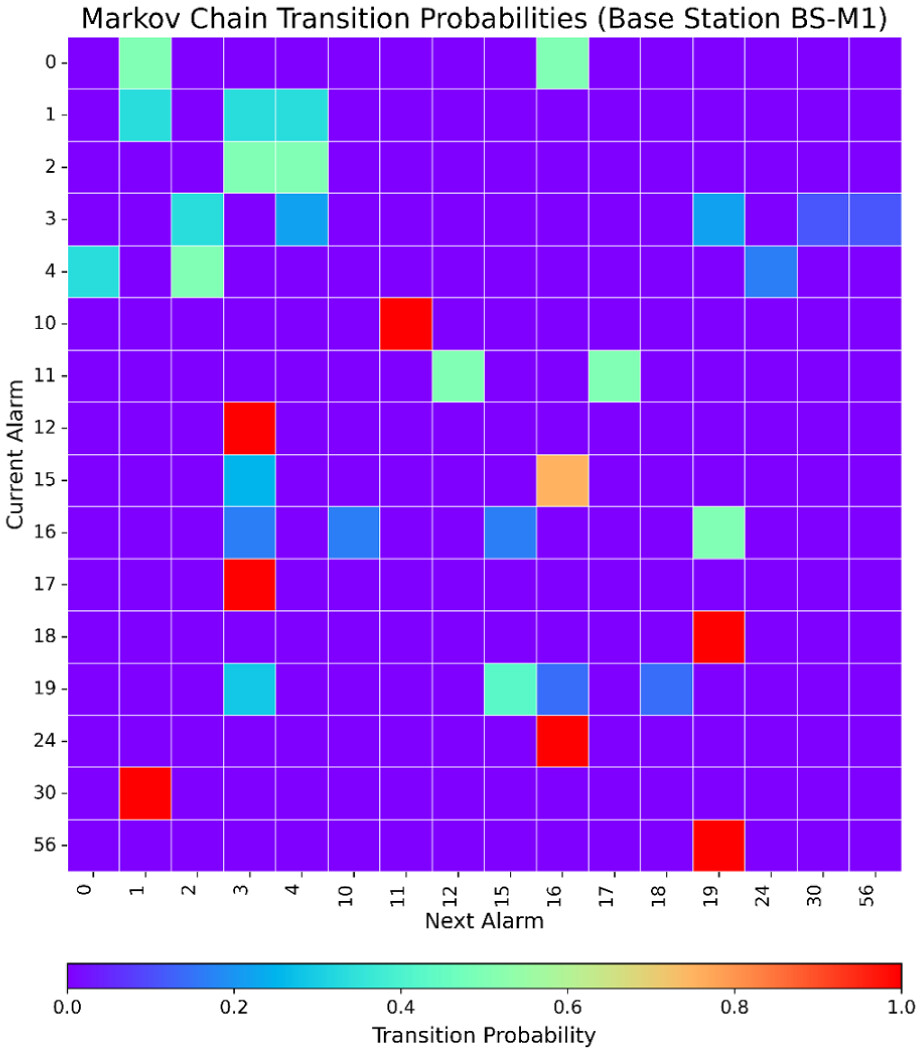

18,20

Markov chain modelling of alarm transitions

The probability that an alarm transitions to the next alarm based on its current state is modelled using a first-order Markov chain. The first-order Markov chain modelling allows for an efficient and interpretable framework for predictions. Markov chain models have been widely accepted to provide a robust modelling of system degradation and fault evolution, even from short alarm sub-sequences.

21–23 Moreover, empirical evidence suggests that Markov chains can outperform higher-order models on systems with repeated behaviours.

24,25 Simultaneously, it also enables smoother classification decisions, thereby reducing false positives.

26 Algorithm 2 outlines this process. For each base station, the ordered sequence of alarms S (from Algorithm 1) is used to record every observed transition between consecutive alarms. A count matrix keeps track of these transitions and records how often each alarm is followed by another. The raw counts are converted to transition probabilities by normalising each row of the count matrix. The resulting probabilistic transition matrix represents the likelihood of each possible next alarm and forms the basis of the Markov model.
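The counting and row-normalisation steps can be sketched as below. This is an illustrative sketch and not Algorithm 2 itself: it stores the matrix sparsely as nested dictionaries, and the label `PHI` for the “No Alarm” state, the function name, and the sample sequence are hypothetical.

```python
from collections import Counter, defaultdict

PHI = "NO_ALARM"  # hypothetical label standing in for the "No Alarm" state


def transition_matrix(sequence):
    """Estimate first-order transition probabilities from one ordered sequence."""
    counts = defaultdict(Counter)
    # Record every pair of consecutive alarms.
    for current, nxt in zip(sequence, sequence[1:]):
        counts[current][nxt] += 1

    # Row-normalise the counts so that each row sums to one.
    probs = {}
    for current, row in counts.items():
        total = sum(row.values())
        probs[current] = {nxt: c / total for nxt, c in row.items()}
    return probs


# Toy sequence; the final alarm resolves into the "No Alarm" state.
sequence = ["A", "B", "A", "B", "A", "C", PHI]
P = transition_matrix(sequence)
```

Here alarm A is followed by B twice and by C once, so its row holds probabilities 2/3 and 1/3. States that never appear as a source, such as `PHI` in this toy sequence, have no row.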

Forecasting and evaluation

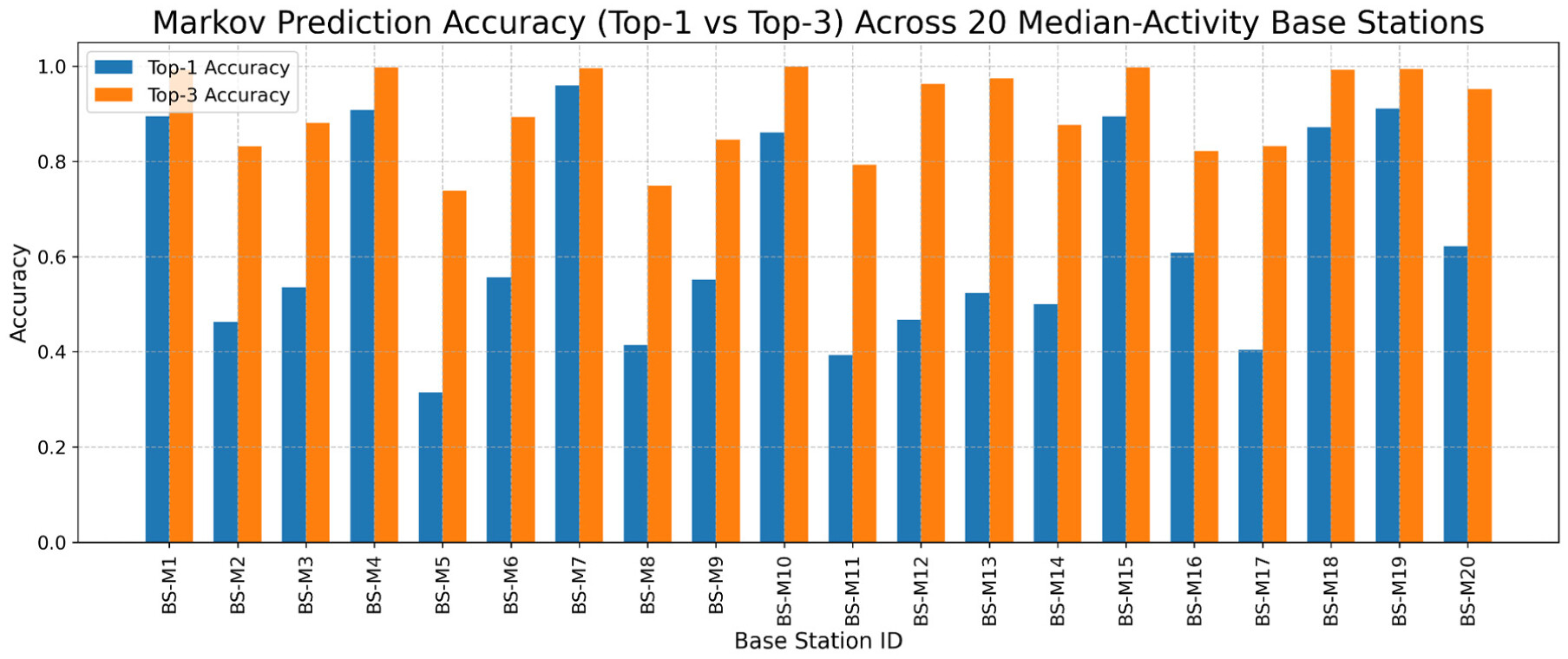

This section outlines how the forecasting of the alarm evolution and causality is handled. Based on the Markov transition matrix, the most likely alarms to occur next are predicted and arranged in descending order of their probability. The top-N alarms with the highest probabilities of occurrence, given that an alarm has already occurred, are selected as the model’s forecast for that alarm in a base station. The model’s prediction accuracy is evaluated by comparing predictions to the actual alarm transitions in each sequence of alarms.

Definition 1. Top-1 Accuracy. Measures how often the most probable next alarm predicted by the model matches the actual next alarm observed in the sequence. It is computed as:

Definition 2. Top-N Accuracy. Measures whether the actual next alarm appears among the N most probable alarms predicted for the current state. It is given by:
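The expressions for Definitions 1 and 2 are not reproduced in this text. A standard form consistent with the verbal definitions is given below as an assumption, not as the authors' exact notation. Here $S = (s_1, \dots, s_T)$ denotes an ordered alarm sequence, $P(a \mid s_t)$ the Markov transition probability, $\mathbb{1}[\cdot]$ the indicator function, and $\mathrm{Top}_N(s_t)$ the set of the $N$ most probable successors of $s_t$; all of these symbols are introduced here for illustration.

```latex
\mathrm{Acc}_{\text{Top-1}}
  = \frac{1}{T-1} \sum_{t=1}^{T-1}
    \mathbb{1}\!\left[ \arg\max_{a} P(a \mid s_t) = s_{t+1} \right],
\qquad
\mathrm{Acc}_{\text{Top-}N}
  = \frac{1}{T-1} \sum_{t=1}^{T-1}
    \mathbb{1}\!\left[ s_{t+1} \in \mathrm{Top}_N(s_t) \right]
```

With $N = 1$ the second expression reduces to the first, provided ties in the transition probabilities are broken consistently.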

To evaluate the model’s predictive performance, two accuracy metrics are defined: Top-1 and Top-N accuracy. The Top-1 accuracy measures how often the model’s most probable prediction matches the actual next alarm (Definition 1). Similarly, the Top-N accuracy extends this metric by treating the model’s prediction as correct if the actual next alarm is among the N most probable subsequent alarms (Definition 2). Both metrics are averaged over all the transitions to provide an overall accuracy score for each base station. To assess generalisation and to graphically represent the causality, this analysis focuses on the base stations with median alarm activity, comparing their transition matrices and predictive accuracies. The same analysis can be applied equally to high- or low-activity base stations. This cross-station evaluation is essential for determining whether the learned Markov structure consistently captures alarm progression patterns across different network contexts.
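The evaluation can be sketched as follows, assuming the transition matrix is stored as nested dictionaries. Whether the accuracies are computed on held-out transitions or on the fitting data is not stated here, so the sketch simply scores any supplied sequence. The function names, the tie-breaking rule, and the sample values are hypothetical.

```python
def top_n(P, current, n):
    """Return the n most probable successors of `current`, highest first."""
    row = P.get(current, {})
    # Ties are broken alphabetically so that the ranking is deterministic.
    ranked = sorted(row.items(), key=lambda kv: (-kv[1], str(kv[0])))
    return [alarm for alarm, _ in ranked[:n]]


def top_n_accuracy(P, sequence, n=1):
    """Fraction of observed transitions whose true successor is in the top n."""
    pairs = list(zip(sequence, sequence[1:]))
    if not pairs:
        return 0.0
    hits = sum(1 for current, nxt in pairs if nxt in top_n(P, current, n))
    return hits / len(pairs)


# Hypothetical transition matrix and evaluation sequence.
P = {
    "A": {"B": 2 / 3, "C": 1 / 3},
    "B": {"A": 1.0},
    "C": {"PHI": 1.0},
}
sequence = ["A", "B", "A", "C", "PHI"]
top1 = top_n_accuracy(P, sequence, n=1)
top2 = top_n_accuracy(P, sequence, n=2)
```

In this example, the transition from A to C is missed by the Top-1 forecast (which predicts B) but is captured once the two most probable successors are considered.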

Alarm-level resilience analysis

In the context of alarm networks (be it within a base station or across interconnected systems), resilience refers to an alarm’s ability to withstand, contain, or recover from cascading fault propagation. A resilient alarm rarely triggers secondary alarms, does not persist for long, and tends to transition towards a stable “No Alarm” state. In contrast, an alarm with low resilience is more likely to propagate faults, recur frequently, or remain unresolved for extended durations. Such low-resilience alarms increase the fragility of the base station and, by extension, of the wider network. Propositions 1–3 provide three metrics for evaluating the resilience characteristics of alarms by exploiting the transition probability matrix.

Proposition 1. Cascade Entropy. It represents the uncertainty or spread in how an alarm can evolve into other alarms (measures the diversity of transitions originating from a given alarm):

A higher entropy value indicates broader propagation potential and a lower ability to contain cascading behaviour.

Proposition 2. Self-loop Probability. Quantifies the likelihood of an alarm repeating itself without resolving or transitioning to another state:

A higher self-loop probability implies persistence or unresolved fault conditions, thereby indicating reduced resilience.

Proposition 3. Absorption Probability. Measures the likelihood that an alarm transitions into a “No Alarm” state, representing recovery or containment (a higher absorption probability corresponds to faster resolution and stronger resilience):
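The expressions for Propositions 1–3 are not reproduced in this text. Under the assumption that $P_{ij}$ denotes the probability of a transition from alarm $A_i$ to alarm $A_j$ in the Markov transition matrix, and that $\phi$ indexes the “No Alarm” state, forms consistent with the verbal descriptions would be as follows. The symbols $H$, $S$, and $B$ are introduced here for illustration, and the logarithm base is left unspecified.

```latex
H(A_i) = -\sum_{j} P_{ij} \log P_{ij},
\qquad
S(A_i) = P_{ii},
\qquad
B(A_i) = P_{i\phi}
```

The entropy term uses the usual convention $0 \log 0 = 0$, so unobserved transitions do not contribute to $H(A_i)$.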

A composite resilience score is defined as a weighted aggregation of the min-max normalised values of the three individual metrics to combine these measures. It can be represented as:

Here, the three terms are the normalised cascade entropy, self-loop probability, and absorption probability for alarm $A_i$, computed across all alarms in the set. A higher composite score indicates greater resilience (i.e. the alarm is less likely to propagate, less prone to repetition, and more likely to resolve). The three weights specify the relative importance of the metrics and can be tuned according to operational priorities. In this work, the weights are set to prioritise alarms that demonstrate containment and recovery over those that propagate. These weights can be experimentally tuned based on expert feedback and requirements analysis with network infrastructure stakeholders (managers and maintenance engineers).
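A possible computation of the three metrics and of a composite score is sketched below. Both the combination rule (entropy and self-loop probability enter as one minus their normalised value, absorption enters directly) and the weight values are assumptions made for illustration, since neither is reproduced in this text. The label `PHI`, the function names, and the sample matrix are likewise hypothetical.

```python
import math

PHI = "NO_ALARM"  # hypothetical label for the "No Alarm" state


def resilience_metrics(P):
    """Cascade entropy (bits), self-loop and absorption probability per alarm."""
    metrics = {}
    for alarm, row in P.items():
        entropy = -sum(p * math.log2(p) for p in row.values() if p > 0)
        metrics[alarm] = {
            "entropy": entropy,
            "self_loop": row.get(alarm, 0.0),
            "absorption": row.get(PHI, 0.0),
        }
    return metrics


def min_max(values):
    """Min-max normalise a dict of floats; constant inputs map to 0.0."""
    lo, hi = min(values.values()), max(values.values())
    if hi == lo:
        return {k: 0.0 for k in values}
    return {k: (v - lo) / (hi - lo) for k, v in values.items()}


def resilience_scores(P, weights=(0.3, 0.3, 0.4)):
    """Composite score; the weights and the combination rule are ASSUMED."""
    m = resilience_metrics(P)
    h = min_max({a: v["entropy"] for a, v in m.items()})
    s = min_max({a: v["self_loop"] for a, v in m.items()})
    b = min_max({a: v["absorption"] for a, v in m.items()})
    w_h, w_s, w_b = weights
    # Low entropy, low self-loop and high absorption all raise the score.
    return {a: w_h * (1 - h[a]) + w_s * (1 - s[a]) + w_b * b[a] for a in m}


P = {
    "A": {"A": 0.5, "B": 0.25, "C": 0.25},  # persistent and spreading
    "B": {PHI: 1.0},                         # always resolves
    "C": {"A": 0.5, PHI: 0.5},               # sometimes escalates
}
scores = resilience_scores(P)
```

Under these assumed weights, alarm B (which always resolves) ranks as the most resilient and alarm A (which persists and spreads) as the least, matching the qualitative interpretation of the three metrics.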

Limitations and future research

The RAPTOR framework offers sufficient generalisability and versatility to be effective across domains that involve sequential, time-dependent signals such as alarms and sensor readings. This claim is supported by prior evidence. Comparable frameworks using similar datasets have been successfully applied in a wide range of domains, including relay anomaly detection in power systems,

26,27 DDoS attack prediction,

23,28 real-time fire detection in sensor networks,

19,29 healthcare support trajectory analysis,

30 and alarm flood identification in chemical process industries.

21,22 That being said, the RAPTOR framework inherits limitations from its individual components. DTW is known to suffer from over-compression and over-stretching, where it maps multiple points to one and can thereby lose critical signal features.

18 The first-order Markov model assumes a short memory and ignores long-range dependencies and causal effects.

24 Moreover, it struggles with novelty detection and may misclassify unknown states into existing states if not provided with sufficiently large and diverse samples.

31 Consequently, the resilience scoring inherits these limitations and may misrepresent alarm resilience transitions in complex evolving sequences. There are multiple avenues to address these limitations, and they suggest clear directions for future research. DTW can be extended using an adaptive penalty function to mitigate pathological matching and improve similarity accuracy. Alarm transitions can also be modelled using higher-order dynamic Markov chains to capture complex trends and integrate anomaly substitution strategies to prevent anomalies from infecting future predictions. Unknown states and hidden correlations can be addressed through the addition of explicit unknown states and multivariate joint sequences. Future extensions of this work will also include modelling multi-alarm transitions, integrating network topology for spatial inference, or combining with root cause analytics such as Viterbi-based fault isolation to develop fully autonomous alarm intelligence systems.

Conclusions

This paper presents a comprehensive, interpretable, and scalable framework, RAPTOR, for alarm sequence analysis in RAN base stations. The core domain challenges of temporal variability, causality inference, sparse and heterogeneous data, and limited interpretability of alarms in telecommunication network operations have been addressed through this work. RAPTOR addressed RQ1 (Temporal Causality) by making use of DTW-based clustering and transition graphs, which revealed latent time-aligned alarm dependencies, and also identified station-specific alarm co-activation trends. Further, RQ2 (Predictive Modelling) was addressed by constructing Markov transition matrices. The Top-1 and Top-3 prediction accuracy metrics evaluated the effectiveness of this approach and were benchmarked across base stations with different activity levels. Finally, RQ3 (Resilience Quantification) was addressed by defining resilience scores based on cascade entropy, self-loop probability, and the alarm absorption likelihood within and across base stations.

Empirical evaluation on a real-world dataset demonstrated that the framework robustly adapts to varying alarm densities and produces interpretable predictions and resilience insights. Although the evaluation in this work focussed on medium-activity base stations, the model also performs well for low- and high-activity base stations, which exhibit simple deterministic alarm chains (low-activity base stations) and complex but structured stochastic dynamics (high-activity base stations), respectively. This work contributes to telecommunication network resilience by connecting alarm behaviour to system health inference. RAPTOR provides diagnostic value beyond prediction by quantifying alarm persistence, its cascading potential, and its self-resolution tendency. Future extensions of this work will include modelling multi-alarm transitions, integrating network topology for spatial inference, or combining with root cause analytics to develop fully autonomous alarm intelligence systems.