Large language models for sustainable assessment and feedback in higher education: Towards a Pedagogical and Technological Framework

Abstract

Nowadays, there is growing attention to enhancing the quality of teaching, learning and assessment processes. As a recent EU report underlines, assessment and feedback remain a problematic area with regard to the training of education professionals and their adoption of new practices. Traditional summative assessment practices are still predominantly used in European countries, contrary to the recommendations of the Bologna Process guidelines. These guidelines promote alternative assessment practices that seem crucial for engaging students and equipping them with lifelong learning skills, including through the use of technology. The literature shows that a series of sustainability problems arise when these requirements meet real-world teaching, particularly when academic instructors have to assess large classes. With the fast advancement of Large Language Models (LLMs) and their increasing availability, affordability and capability, part of the solution to these problems might be at hand. LLMs can process large amounts of text, summarise it and give feedback on it according to predetermined criteria. The insights from that analysis can be used both to give feedback to the student and to help the instructor assess the text. Within a proper pedagogical and technological framework, LLMs can relieve instructors of some time-related sustainability issues, and thus of having to rely solely on multiple-choice tests and similar formats. For this reason, as a first step, we are designing and validating a theoretical framework and a teaching model for fostering the use of LLMs in assessment practice, together with the approaches that are likely to be most beneficial.

1 Introduction

As a UNICEF definition states:

“AI refers to machine-based systems that can, given a set of human-defined objectives, make predictions, recommendations, or decisions that influence real or virtual environments. AI systems interact with us and act on our environment, either directly or indirectly. Often, they appear to operate autonomously, and can adapt their behaviour by learning about the context” [60].

Turning to education, the field of Artificial Intelligence in Education (AIED) has seen success over the past 25 years in terms of technological advancements, theoretical contributions and impact on education [6, 62]. Instead of merely automating the instruction of students sitting in front of computers, AI could help open up teaching and learning opportunities that would otherwise be difficult to achieve, question conventional pedagogies, or help teachers become more successful. Other AIED technologies currently track student progress and give tailored feedback to determine whether the student has mastered the topic in question. AIED technologies built to support collaborative learning might gather similar information, and intelligent essay assessment tools can potentially draw conclusions about a student's knowledge. All of this information and more could be gathered during a student's time in formal educational settings (the learning sciences have long recognised the value of students engaging in constructive assessment activities), along with information about the student's participation in non-formal learning (such as learning a musical instrument, a craft, or other skills) and informal learning (such as language learning or enculturation by immersion) [20]. The potential of AI in educational settings, as well as the need for AI literacy, places educators at the forefront of these new and exciting breakthroughs, which were previously confined to obscure computer science laboratories. At the same time, teachers and administrators need clear perspectives on the potential of AI in education and, eventually, need to incorporate this ground-breaking technology into their practice [21].
To characterise the AIED concept more precisely, Holmes and colleagues [20] created the AIED taxonomy (Table 1), which helps categorise AIED tools and applications into three distinct but intersecting categories: (1) student-focussed, (2) teacher-focussed, and (3) institution-focussed AIED.

| Student-focussed AIED | Teacher-focussed AIED | Institution-focussed AIED |
|---|---|---|
| Intelligent tutoring systems (ITS) | Plagiarism detection | Admissions (e.g., student selection) |
| AI-assisted Apps (e.g., maths, text-to-speech, language learning) | Smart curation of learning materials | Course-planning, scheduling, timetabling |
| AI-assisted simulations (e.g., games-based learning, VR, AR) | Classroom monitoring | School security |
| Automatic essay writing (AEW) | Automatic summative assessment | Identifying dropouts and students at risk |
| Chatbots | AI Teaching Assistant (including assessment assistant) | e-Proctoring |
| Automatic formative assessment (AFA) | Classroom orchestration | |
| Learning network orchestrators | | |
| Dialogue-based tutoring systems (DBTS) | | |
| Exploratory learning environments (ELE) | | |
| AI-assisted lifelong learning assistant | | |

The aim of this paper is to create and validate a model for supporting the thoughtful design and implementation of AI in authentic assessment processes, informed by the existing scientific literature. The model aims to guide educators in Higher Education in exploring the power of AI in teaching, learning and assessment practice, triangulating the actions of the three main protagonists of the formative process (academics, students and AI).

2 Theoretical background

Higher education aims to provide meaningful, relevant courses in which graduates can learn to work and live in an increasingly digital society [45]. In today's education system, students need to be supported in becoming effective lifelong learners who are prepared for the assessment tasks they will encounter in their lives, but they also need to become lifelong assessors, with assessment skills acquired through continuous work. This can be achieved through the implementation of Assessment for Learning, understood as an approach that emphasises the assessment process as an "essential moment of the educational experience, characterised by situations in which learners are enabled to analyse and understand the processes in which they are involved and can thus participate in decisions about their learning goals by becoming increasingly aware of their progress" [52, 16 p.56].

Learners as lifelong assessors must be able to:

2.1 AI and education: what about sustainable and authentic assessment?

During their learning process, students need to gain assessment expertise, that is, the competence required to understand assessment criteria effectively and to use the feedback received to close the gap and improve their own learning [51]. They will be supported in this process by teachers who, through continuous assessment and judgements about students' learning products, will develop more effective standards of judgement for defining the expected competence of the students themselves [16 p.76, 43]. But how can the development of students' assessment expertise really be supported? As Sadler pointed out [51], it is necessary to involve students in direct and authentic assessment experiences, supporting them in acquiring the concept of quality and training them to make complex judgements according to multiple criteria. Which principles should be applied to create sustainable assessment contexts and then scaffold authentic assessment experiences? Boud [4] drew up nine useful principles for reflecting on and designing sustainable assessment and feedback practices. According to Boud, clear assessment criteria should be shared with students in a timely manner; it is also crucial that students are seen as individuals who can achieve success, and assessment processes should help make students confident of their success. In this sense, it seems useful to consider separating feedback processes from the awarding of grades. The focus on learning during the assessment process must take priority over the focus on performance; it therefore seems important to support the development of self-assessment competencies and to encourage reflective peer assessment practices.
A further fundamental aspect is the completion of the feedback loop as a tool for reviewing student work; finally, Boud emphasises the importance of introducing a review process for assessment practices through the implementation of formative assessment processes.

In terms of assessment and feedback in connection with AI systems, Swiecki and colleagues affirmed that

“AI-based techniques have been developed to fully or partially automate parts of the traditional assessment practice. AI can generate assessment tasks, find appropriate peers to grade work, and automatically score student work. These techniques offload tasks from humans to AI and help to make assessment practices more feasible to maintain” [56, p.2].

The value of AI for assessment and feedback lies in the fact that, while traditional assessment practices may provide only a partial overview of students' performance, several AI techniques, owing to their characteristics, can offer a wider view of the learning process and of student progress. With regard to the authenticity and sustainability of assessment, AI systems can help collect, represent and assess data in a more comprehensive way: authentic assessment processes can be highly articulated in terms of tasks and overall design, so AI can help academics monitor the learning process with a view to assessing student progress [41, 56]. Authentic assessment requires students to “use the same competencies, or combinations of knowledge, skills, and attitudes, that they need to apply in the criterion situation in professional life” [17 p.69]. Authenticity has been recognised as a fundamental element of assessment design that encourages learning. Authentic assessment tries to reproduce the activities and performance criteria often encountered in the workplace, and has been shown to have a favourable influence on student learning, autonomy, motivation, self-regulation and metacognition, qualities that are significantly associated with employability [64]. Some international authors even suggest that authentic assessment tasks are necessary for reaching an expert level of problem-solving. Likewise, strengthening the authenticity of an assessment can have a positive effect on student learning and motivation [17, 53].

Finally, this study follows the lines of research set out by UNESCO [38], which in recent years has been among the most influential and active institutions reflecting on the implications of AI in society. UNESCO has provided the following guidelines for AI in assessment:

•

Testing and implementing artificial intelligence technologies is crucial for supporting the assessment of various dimensions of competencies and outcomes.

•

Caution is essential when adopting automated assessment with responses to rule-based closed questions.

•

Employing formative assessment leveraged by artificial intelligence as an integrated function of Learning Management Systems (LMS) is key to analysing student learning data with increased accuracy and efficiency and reducing human biases.

•

Progressive assessments based on artificial intelligence are imperative to provide regular updates to teachers, students, and parents.

•

Examining and evaluating the use of facial recognition and other artificial intelligence for user authentication and monitoring in remote online assessments is paramount.

2.2 Language models and transformers

Language Models (LMs) are defined as “computational models that have the capability to understand and generate human language. LMs have the transformative ability to predict the likelihood of word sequences or generate new text based on a given input” [6, p.39]. A language model is considered “large” (LLM) when it is scaled up in terms of the computational resources required, the number of parameters and the size of the training dataset [19, 27], and arguably in terms of its capabilities.
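To make the idea of predicting “the likelihood of word sequences” concrete, the sketch below implements a toy bigram model rather than an LLM. It rests on simplifying assumptions: sentences arrive already split into words, each word is assumed to depend only on the previous one, and probabilities are plain maximum-likelihood counts with no smoothing, so any unseen word pair yields a probability of zero. The function names are hypothetical. An LLM estimates the same kind of conditional probability, but over much longer contexts and with a very large number of learned parameters.

```python
from collections import Counter

START, END = "<s>", "</s>"  # sentence boundary markers


def train_bigram(corpus):
    """Count context words and adjacent word pairs in a list of tokenised sentences."""
    contexts, pairs = Counter(), Counter()
    for sentence in corpus:
        tokens = [START] + sentence + [END]
        contexts.update(tokens[:-1])                # every token that precedes another
        pairs.update(zip(tokens[:-1], tokens[1:]))  # adjacent (previous, current) pairs
    return contexts, pairs


def sequence_probability(sentence, contexts, pairs):
    """P(sentence) as the product of P(word | previous word), estimated from counts."""
    tokens = [START] + sentence + [END]
    prob = 1.0
    for prev, curr in zip(tokens[:-1], tokens[1:]):
        if contexts[prev] == 0:  # previous word never seen: no estimate possible
            return 0.0
        prob *= pairs[(prev, curr)] / contexts[prev]
    return prob


if __name__ == "__main__":
    corpus = [["feedback", "helps", "learning"], ["feedback", "helps", "students"]]
    contexts, pairs = train_bigram(corpus)
    print(sequence_probability(["feedback", "helps", "learning"], contexts, pairs))  # 0.5
    print(sequence_probability(["helps", "feedback"], contexts, pairs))              # 0.0
```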

Nowadays, the most common LLMs use the transformer architecture: “a model architecture eschewing recurrence and instead relying entirely on an attention mechanism to draw global dependencies between input and output [...] The Transformer is the first transduction model relying entirely on self-attention to compute representations of its input and output without using sequence aligned RNNs or convolution” [63 p.2]. As Kasneci and colleagues summarise, the transformer architecture introduced “the self-attention mechanism to determine the relevance of different parts of the input when generating predictions. This allows the model to better understand the relationships between words in a sentence, regardless of their position” [29 p.1, 63].

These models are able to learn, infer context and produce text by mapping relationships (such as influence and dependence) between the elements of a sequence, even when those elements are far apart (for example, between non-consecutive words in a sentence). Better parallelisation, reduced training time and better benchmark results on several tasks, compared with the previously prevalent recurrent and convolutional neural networks [63], have been crucial to the architecture's success.
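The self-attention idea described above can be sketched as the scaled dot-product attention of the original transformer paper [63], softmax(QKᵀ/√d_k)V, in which every position is scored against every other position regardless of how far apart they are. This is a minimal illustration under strong simplifying assumptions: the query, key and value vectors are all taken to be the input vectors themselves (a real transformer learns separate projection matrices for each), there is a single attention head, and positional information is omitted. The function names are hypothetical.

```python
import math


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def self_attention(x):
    """Scaled dot-product self-attention with identity projections (Q = K = V = x).

    x is a list of token vectors (one list of floats per token).
    Returns (outputs, weights), where weights[i][j] is how much token i attends to token j.
    """
    d_k = len(x[0])
    outputs, weights = [], []
    for q in x:
        # Score this query against every key in the sequence, whatever its position.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in x]
        w = softmax(scores)
        weights.append(w)
        # The output is the attention-weighted sum of the value vectors.
        outputs.append([sum(w[j] * x[j][d] for j in range(len(x))) for d in range(d_k)])
    return outputs, weights


if __name__ == "__main__":
    # Tokens 0 and 2 have identical vectors although they are not adjacent.
    tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
    _, weights = self_attention(tokens)
    # Token 0 attends equally to itself and to token 2, and less to token 1.
    print([round(w, 3) for w in weights[0]])
```

Because the weights depend only on vector content, distance in the sequence plays no role here, which is why real transformers add positional information to the input.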

2.3 The role of large language models

Over the past few years, Large Language Models (LLMs) have become increasingly prevalent in society and in educational settings. These AI-powered models can generate, analyse and summarise text, as well as engage in dialogic interactions with humans [29]. One of the best-known examples of LLMs is OpenAI's ChatGPT (where GPT stands for Generative Pre-trained Transformer), which is based on the GPT-3.5 and GPT-4 models. Other notable LLMs include Anthropic's Claude (1, 2 and 3), Microsoft Copilot (another GPT-4-based model), Mistral AI's Mistral Large, and Google Gemini. While these models are extremely powerful, there are concerns about data privacy and the consistency of their results [7]. However, other options are available. With the release of open-source and open-access models such as Meta's Llama (currently in its third iteration), Google Gemma, TII's Falcon (by the Technology Innovation Institute in Abu Dhabi) and Mistral AI's Mixtral 8x7B, and with the growth of platforms like Hugging Face, which acts as both a repository and a framework, there are many possibilities for running highly capable LLMs locally. These models can be customised, fine-tuned, or even trained specifically for one's use case, allowing for greater flexibility and control [36].

To better understand the possibilities offered by these models, we list, drawing on the work of various authors, a series of LLM capabilities that are crucial for education:

•

Generate: LLMs can generate human-like text. This can be used to provide detailed explanations, create content, or even generate potential essay or report structures [57].

•

Summarise: LLMs can summarise long pieces of text. This can help provide concise summaries of lengthy student submissions. The summary can focus on specific parameters in the text, providing information on exactly the aspects that the teacher wants to assess [57].

•

Posing and Answering Questions: LLMs can understand a piece of text and answer questions about it, as well as ask questions about it if required. This can be used to create interactive feedback and learning experiences [57].

•

Translate: LLMs can translate text from one language to another. This can be useful in multilingual educational settings and for adapting content to include students who speak other languages. It also adds to the overall sustainability of the teacher's work in such situations [57].

•

Classify: LLMs can classify text into predefined categories. This can be used for assisted grading or categorising student feedback [57].

•

Detect Plagiarism: By comparing the similarity between different pieces of text, LLMs can help detect potential cases of plagiarism, both between students and between students and the source material [22].

•

Measure Semantic Similarity: LLMs can measure the semantic similarity between two pieces of text. This can be used to match student queries with relevant answers or resources and help the teacher in the assessment of the student’s work [69].

•

Generate Feedback: Based on the assessment of a student's work, LLMs can generate personalised feedback. This is likely to work even better if the LLM has access to the teacher's notes on the assignment [54].

•

Assess Knowledge: LLMs can be used to assess a student's understanding of a topic based on their written submissions, especially if properly trained on correct assignments and given an assessment rubric to refer to [57].
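Several of the capabilities above, such as measuring semantic similarity and flagging possible plagiarism, reduce to comparing vectors that represent texts. In an LLM-based system those vectors would typically be embeddings produced by a model; in the minimal sketch below, plain word-count vectors stand in for embeddings so that it runs without external libraries, which means it only captures lexical overlap, not meaning. Cosine similarity is computed for every pair of submissions, and pairs above a threshold are flagged. The 0.8 threshold and all function names are hypothetical, and a flag is a prompt for the instructor to look more closely, not evidence of plagiarism.

```python
import math
import re
from collections import Counter


def bag_of_words(text):
    """Lower-cased word counts: a crude stand-in for an LLM embedding."""
    return Counter(re.findall(r"[a-z']+", text.lower()))


def cosine_similarity(a, b):
    """Cosine of the angle between two sparse count vectors (0 = no overlap, 1 = same direction)."""
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def flag_similar_pairs(submissions, threshold=0.8):
    """Return (id_a, id_b, score) for every pair of submissions at or above the threshold."""
    ids = sorted(submissions)
    vectors = {i: bag_of_words(submissions[i]) for i in ids}
    flagged = []
    for x in range(len(ids)):
        for y in range(x + 1, len(ids)):
            score = cosine_similarity(vectors[ids[x]], vectors[ids[y]])
            if score >= threshold:
                flagged.append((ids[x], ids[y], round(score, 3)))
    return flagged


if __name__ == "__main__":
    submissions = {
        "s1": "Feedback closes the gap between current and desired performance.",
        "s2": "Feedback closes the gap between current and desired performance!",
        "s3": "Transformers rely on self-attention.",
    }
    print(flag_similar_pairs(submissions))  # [('s1', 's2', 1.0)]
```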

LLMs are able to analyse massive amounts of text, aggregate it, and then offer feedback based on previously established standards [57]. The outcomes of that analysis can be used to provide feedback to the student as well as to assist the instructor in evaluating the text. With the right pedagogical and technical framework, LLMs can support teachers with some of the time-related sustainability difficulties, and thus free them from having to rely solely on multiple-choice tests and similar formats. In detail, Kasneci and colleagues [29] identify the following opportunities for teachers and students regarding the implementation of AI in the university teaching and learning context:

•

“For university students, large language models can assist in the research and writing tasks, as well as in the development of critical thinking and problem-solving skills. These models can be used to generate summaries and outlines of texts, which can help students to quickly understand the main points of a text and to organise their thoughts for writing. Additionally, large language models can also assist in the development of research skills by providing students with information and resources on a particular topic and hinting at unexplored aspects and current research topics, which can help them to better understand and analyse the material."

•

“For personalised learning, teachers can use large language models to create personalised learning experiences for their students. These models can analyse student’s writing and responses, and provide tailored feedback and suggest materials that align with the student’s specific learning needs. Such support can save teachers’ time and effort in creating personalised materials and feedback, and also allow them to focus on other aspects of teaching, such as creating engaging and interactive lessons” [29 pp.2-3].

With specific regard to assessment and feedback practice, the relationship with LLMs can be summarised in four points:

1.

2.

3.

Objectivity: LLMs can help develop more transparent, objective and fair assessment processes that adhere to the established criteria [5].
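Criteria-based feedback of the kind described in this section depends on giving the model explicit criteria. The sketch below shows one possible way to assemble a rubric, optional teacher notes and a student submission into a single prompt. The `Criterion` class, the `build_feedback_prompt` function and the prompt wording are hypothetical, and sending the prompt to a hosted or local LLM is deliberately left out because it depends on the model chosen. Asking for per-criterion feedback without an overall grade follows Boud's suggestion, discussed in Section 2.1, of separating feedback from the awarding of grades.

```python
from dataclasses import dataclass


@dataclass
class Criterion:
    """One rubric criterion (hypothetical structure for illustration)."""
    name: str
    description: str
    max_points: int


def build_feedback_prompt(assignment, rubric, submission, teacher_notes=""):
    """Assemble a criteria-based feedback prompt; calling an LLM with it is out of scope."""
    lines = [
        "You are assisting a university instructor.",
        f"Assignment: {assignment}",
        "Assess the submission ONLY against these criteria:",
    ]
    for i, c in enumerate(rubric, start=1):
        lines.append(f"{i}. {c.name} (max {c.max_points} points): {c.description}")
    if teacher_notes:  # optional instructor guidance for this assignment
        lines.append(f"Instructor notes: {teacher_notes}")
    lines += [
        "For each criterion, quote the relevant passage, explain the judgement,",
        "and suggest one concrete improvement. Do not award an overall grade.",
        "Submission:",
        submission,
    ]
    return "\n".join(lines)


if __name__ == "__main__":
    rubric = [
        Criterion("Argument", "Claims are supported by evidence.", 10),
        Criterion("Structure", "Ideas follow a logical order.", 5),
    ]
    print(build_feedback_prompt("Essay on assessment", rubric, "My essay text.",
                                teacher_notes="Focus on the use of sources."))
```

Keeping the rubric in a structured form, rather than as free text, makes it easier to reuse the same criteria across a whole cohort, which is where the sustainability gain discussed above would come from.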

2.3.1 Challenges of using large language models in educational settings

A meta-analysis highlighted specific areas of inquiry, and related subcategories, concerning the challenges to the adoption of LLMs in education [70], including practical challenges (Technology readiness, Performance, Replicability) and ethical challenges (Transparency, Privacy, Equality, Beneficence):

•

More experimental work is needed to build evidence regarding "improvements to teaching, learning and administrative processes in authentic educational practices" [70, p.100].

•

A lack of replicability emerged from the studies analysed. This could become a barrier to the adoption of the proposed LLMs and also limits the generalisability of the results.

•

The use of LLMs could potentially lead to the sharing and exposure of private and personal data of the individuals involved in the educational process.

•

In terms of equality, the languages supported by LLMs could pose a limitation, and the cost of training models for specific languages could hinder equal access to and use of these learning resources.

•

Inherent biases introduced during the training and fine-tuning process could present a challenge when adopting LLMs in teaching, learning and assessment processes.

2.4 AI in the constructivist loop

This paper introduces the idea of "AI in the Constructivist Loop", a framework that integrates AI into the processes of teaching, learning and acquisition of knowledge and competences. At the core of this discussion are four concepts: technological mediators, augmented intelligence, supermind, and AI as a boundary object. This framework is based on the theories of Vygotsky [65] and Jonassen [25] regarding technological mediation, further developed through Malone’s supermind [34] and the practical use of AI as a boundary object within Activity Theory.

Constructivist theory, which views learning as a process of constructing knowledge in context rather than passively acquiring it, is also based on the idea that humans have developed various technologies (artifacts, tools and instruments) to enhance their physical and cognitive capabilities. Language is one of those tools, arguably one of the most important. The creation of AIs, and especially LLMs, fits with the idea of using such tools to expand cognitive capability. These tools serve as technological mediators (or mediating artifacts) between humans and the environment (including not only the physical environment but also abstract objects and objectives, the community and desired outcomes in general). Vygotsky's Zone of Proximal Development (ZPD) is the area between what learners can do autonomously and what they cannot do at all [65]: it comprises the things that learners can do with some assistance or support and that, eventually, they will become able to do unaided. Tools such as LLMs can help expand learners' capacity to navigate the ZPD and consolidate knowledge and skills by offering support, fostering critical thinking skills and providing appropriate challenges and assistance. Such technological mediators can therefore be considered “mindtools” [25], acting as aids and scaffolding elements and thereby bridging the gap between abstract theoretical ideas and practical understanding. This paradigm shift moves from learning technology, to learning through technology, to learning with technology.

The concept of augmented intelligence is well established. Initially referred to as “intelligence amplification”, it encapsulates the idea of merging human and machine intelligence to enhance, rather than replace, human intellect. In its early days, in the 1950s, the idea was still to use it as an amplifying tool [2]; in pedagogical terms, this phase corresponded to learning “through” technology, in the same way as one uses a ladder to reach a higher spot. The current paradigm of learning “with” technology, influenced by the constructionist ideas of Papert & Harel [47], is closer to today's view of augmented intelligence. It is a human-centred partnership model applicable to decision-making, research, learning and planning, in which the human is in the loop with the AI [12, 71].

The human-in-the-loop concept is an extensive area of research at the intersection of computer science, cognitive science and psychology [68]. It can be considered a semi-supervised human-computer interaction learning method [40] that aims both to achieve the accuracy of machine learning and to assist human learning. It harnesses the efficiency and accuracy of AI in decision-making through scene recognition, high-quality human annotation and active learning.
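Applied to assessment, a human-in-the-loop workflow might let the AI suggest scores while the instructor reviews the cases the model is least sure about, with the instructor's judgement always taking precedence. The minimal sketch below assumes that each AI suggestion comes with a calibrated confidence value between 0 and 1, an assumption that real systems do not always satisfy; the 0.75 threshold, the `Suggestion` type and the function names are hypothetical. Ordering items from least to most confident mirrors uncertainty sampling, a common active-learning strategy.

```python
from typing import NamedTuple


class Suggestion(NamedTuple):
    """An AI-suggested score for one submission, with the model's confidence (0 to 1)."""
    submission_id: str
    suggested_score: float
    confidence: float


def route_for_review(suggestions, confidence_threshold=0.75):
    """Split suggestions into (needs_review, provisionally_accepted).

    Both lists are ordered from least to most confident, so the instructor
    sees the most uncertain cases first (uncertainty sampling).
    """
    ordered = sorted(suggestions, key=lambda s: s.confidence)
    needs_review = [s for s in ordered if s.confidence < confidence_threshold]
    provisionally_accepted = [s for s in ordered if s.confidence >= confidence_threshold]
    return needs_review, provisionally_accepted


def finalise_scores(suggestions, instructor_scores):
    """The instructor's score always overrides the AI suggestion for the same submission."""
    final = {s.submission_id: s.suggested_score for s in suggestions}
    final.update(instructor_scores)
    return final


if __name__ == "__main__":
    batch = [Suggestion("a", 7.0, 0.9), Suggestion("b", 5.0, 0.4), Suggestion("c", 6.0, 0.6)]
    review, accepted = route_for_review(batch)
    print([s.submission_id for s in review])    # ['b', 'c']
    print(finalise_scores(batch, {"b": 6.5}))   # {'a': 7.0, 'b': 6.5, 'c': 6.0}
```

In a full active-learning loop, the instructor's corrected scores would also become high-quality annotations for recalibrating the model; that step is not shown here.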

In this augmented environment, Malone's [35] idea of the supermind becomes especially pertinent. Superminds are not necessarily AI-blended; rather, the idea is that human organisations that act together with intelligent behaviour are superminds. For example, hierarchies, markets, communities and democracies fall within that definition. However, focussing on the possible contribution of AI, a hybrid supermind would be a blend of human and machine intelligence that leverages the strengths of both to achieve superior results. In educational settings, this translates into collaborative networks in which students, educators and AI solve problems and construct knowledge together. These networks can not only improve learning outcomes but also foster a distributed cognitive system that aligns with the constructivist belief that knowledge is socially and contextually constructed.

AI's role in this educational approach can be viewed in practical terms through the lens of the Activity Theory framework [13, 28]. In this context, AI can be seen as a boundary object [23], that is, a bridge connecting individuals and groups within the education community, such as students, teachers and policymakers. Its role in facilitating discussion and knowledge sharing reflects the idea that learning is a social process influenced by diverse viewpoints.

The "AI in the constructivist loop" framework thus presents an approach to incorporating AI in education. By treating AI as a tool for collaboration, embracing augmented intelligence, tapping into collective intelligence and recognising AI's role as a connecting factor, this framework supports and enhances constructivist teaching methods. It not only paves the way for personalised and interactive learning experiences, but also lays the groundwork for future investigations into how AI can further transform educational practices.

3 Research methods

3.1 Objectives and research question

Given the growing interest in, and preliminary insights offered by, the international literature on the intersection of Artificial Intelligence (AI) and education (AIED), this study seeks to contribute by developing and validating a comprehensive model for integrating AI technologies into educational assessment in higher education contexts. This endeavour follows the action research cycle and is grounded in evidence-based design drawing on both the literature and practice. The validation approach is validation through comparison, which involves juxtaposing the outcomes, processes and perceptions associated with implementing the new model against those derived both from more traditional or previously established methods and from other implementations of the new model. Through a systematic comparison, researchers can identify the relative strengths and weaknesses of the new model and its impact on critical issues such as student learning outcomes, engagement and satisfaction.

This study and model design work is based on one main research question:

•

In what ways can university educators leverage AI tools to enhance the effectiveness, sustainability, and authenticity of assessment practices?

3.2 Model design process

The designed model takes into account the existing literature on Assessment for Learning, Authentic Assessment and Sustainable Assessment [4, 52]. Starting from this evidence in the literature, we are developing a model for adopting AI in assessment processes in the Higher Education context. The model considers the role that AI plays in the assessment and feedback practices of academics and students within the virtuous cycle of the learning spiral (Table 2).

| Means and ends | Social and physical aspects | Learning, cognition, and articulation | Development |
|---|---|---|---|
| Design | | | |
| Identification of goals and subgoals of the target actions (target goals) | Integration of AI with other tools and resources | Support for mutual transformations between actions and operations, facilitating scaffolding and zone of proximal development (ZPD) for learners through personalised feedback | Anticipated changes in the environment and the level of activity they influence, including the roles and responsibilities of academics and students |
| Example: Defining learning outcomes for a new AI-based educational tool. | Example: Ensuring the AI tool works seamlessly with existing learning management systems. | Example: AI assisting in transitioning routine tasks to automated processes. | Example: Predicting changes in teaching roles due to AI integration. |
| Resolution of conflicts between various goals using AI | Integration of target technology with social rules and norms | Self-monitoring and reflection through externalization supported by AI | Transformation of existing activities into future activities supported with the system |
| Example: Balancing different educational objectives with AI capabilities. | Example: Aligning AI usage with institutional policies. | Example: Using AI tools to provide feedback for self-assessment. | Example: Adapting curriculum planning to include AI-driven methodologies. |
| Evaluation Phase 1 | | | |
| Criteria for success or failure of achieving target goals using AI | Analysis of social and physical integration of AI | Assessment of internalization and externalization processes supported by AI, including metacognitive skills and self-reflection | Evaluation of the impact of AI implementation on the structure of target actions |
| Example: Setting benchmarks for AI effectiveness in learning outcomes. | Example: Assessing the integration of AI-MAAS feedback mechanisms within existing learning management systems. | Example: Measuring the impact of AI on students’ metacognitive abilities. | Example: Assessing changes in teaching strategies due to AI. |
| Identification and resolution of goal conflicts | Review of integration of AI with tools and resources | Support for problem articulation and help request in case of breakdowns | Dynamics of potential conflicts between target actions and higher-level goals |
| Example: Addressing discrepancies between desired and actual AI performance. | Example: Continuous monitoring of AI compatibility with other educational technologies. | Example: AI providing troubleshooting assistance to users. | Example: Reconciling immediate teaching tasks with long-term educational goals. |
| Evaluation Phase 2 | | | |
| Re-evaluation of AI’s role in achieving goals | Further checks on integration of AI with the environment, including the broader educational ecosystem | Continuation of support assessment for internal and external transformations | Continued developmental evaluation of the AI-supported processes |
| Example: Periodically reassessing AI’s contribution to educational objectives. | Example: Ensuring AI remains compatible with evolving educational standards. | Example: Ongoing evaluation of how AI aids cognitive development. | Example: Continuous refinement of AI-MAAS algorithms based on student interaction data and evolving pedagogical needs. |
| Re-evaluation of conflict resolution using AI | Continued alignment of AI with tools and social rules | Further support for internalization and externalization processes | Ongoing evaluation of developmental transformations |
| Example: Updating conflict management strategies as AI capabilities improve. | Example: Ensuring AI compliance with new institutional policies. | Example: Enhancing AI features to better support learning processes. | Example: Continuous assessment of AI’s impact on educational practices. |
| Use | | | |
| Final evaluation of AI impact on goal attainment, including sustainability and scalability of the AI-MAAS model | Final integration of AI with the physical and social environment | Long-term support for learning and cognition processes using AI | Final evaluation of developmental transformations induced by AI |
| Example: Assessing the long-term viability of AI in the educational setting. | Example: Ensuring AI tools are fully embedded in everyday educational activities. | Example: Providing ongoing AI-driven learning support. | Example: Reviewing the overall impact of AI on institutional growth and development. |
| Long-term resolution of goal conflicts using AI | Sustained integration of AI with tools and social norms | Ongoing support for learning, cognition, and articulation using AI | Continuous development and transformation with AI support |
| Example: Addressing persistent issues in goal alignment through AI adjustments. | Example: Ensuring continuous adherence to evolving educational standards. | Example: AI facilitating lifelong learning and skill development. | Example: Regularly updating educational practices based on AI insights. |

The model itself will be assessed in four phases, drawing on the Activity Theory checklist proposed by Kaptelinin and colleagues [28]:

•

Design: the checklist will be used to evaluate the design process itself.

•

Evaluation Phase 1: as an initial validation of the proposed model, a comparison was initiated with the existing scientific literature, reports and official documents on the topic at an international level.

•

Evaluation Phase 2: the second phase will evaluate how the use of the model itself is proposed, again based on the validated checklists.

•

Use: in the final phase, a specific checklist will be used to evaluate the model and its impact on the teaching, learning and assessment processes.

Each checklist covers four areas:

1.

Means and ends: the extent to which the technology facilitates and constrains the attainment of users’ goals, and the impact of the technology on provoking or resolving conflicts between different goals.

2.

Social and physical aspects of the environment: integration of target technology with requirements, tools, resources, and social rules of the environment.

3.

Learning, cognition, and articulation: internal versus external components of activity and support of their mutual transformations with target technology.

4.

Development: developmental transformation of the foregoing components as a whole [28].

The model will be composed of two layers of adoption:

•

AI Mediated Summative Assessment: a layer focussed on assessment processes connected to Technology Enhanced Assessment practices, that is, on the potential of AI to make assessment and feedback timely, customised and informed by AI data [66].

3.3 A comparative method of model validation

As a first validation step for the model, called AI-MAAS (AI-Mediated Assessment Academics and Students), we compared it with the existing scientific literature, reports and official documents on the topic at an international level. For a general overview, including policies and institutional guidance, the following works are particularly relevant:

•

UNESCO - Guidance for generative AI in education and research [37]: the guide intends to assist governments in implementing urgent steps, planning long-term policies, and developing human capability to ensure a human-centred perspective of these new technologies.

•

GOV UK, Department for Education - Generative AI in education [59]: the Department opened a discussion with the sector by launching a Call for Evidence on the use of GenAI in education. The areas explored include experiences of using GenAI in education, opportunities for GenAI in education, concerns and risks of GenAI in education, and enabling use and future predictions.

•

JRC - The Impact of Artificial Intelligence on Learning, Teaching, and Education [58]: it describes the state of artificial intelligence (AI) and its prospective applications in learning, teaching, and education. It lays the groundwork for well-informed policy-oriented work, research, and forward-thinking initiatives that address the potential and difficulties presented by recent AI advancements.

•

U.S. Department of Education, Office of Educational Technology - Artificial Intelligence and the Future of Teaching and Learning: Insights and Recommendations, Washington, DC [61]: the report discusses the evident need for knowledge exchange and policy development for using AI systems in educational contexts.

Within the recent literature on the role and potential of AI in Education, an important study is that of Kamalov and colleagues [26], who explored the impacts of integrating AI into educational systems in terms of benefits, challenges and applications in four spheres: (1) Personalised Learning, (2) Intelligent Tutoring Systems (ITS), (3) Assessment Automation, and (4) Teacher–Student Collaboration. They offer an overview of the practical implications of introducing AI, arguing that “by personalising learning experiences, automating administrative responsibilities, and delivering real-time feedback, AI is revolutionising the educational landscape, bridging gaps, and encouraging a more inclusive and effective learning environment. Given the importance of integrating AI in education, there is a need to reflect on its implications” [26, p. 1]. The third area, “Assessment Automation”, allows us to explore the benefits and challenges of implementing AI in assessment processes and to reflect on the concrete implications for the structure and application of our model. Other studies also address the application of AI to assessment. Swiecki and colleagues [56], analysing the potential and possible applications of AI systems, state that “AI-based techniques have been developed to fully or partially automate parts of the traditional assessment practice. AI can generate assessment tasks, find appropriate peers to grade work, and automatically score student work. These techniques offload tasks from humans to AI and help to make assessment practices more feasible to maintain”.
In their work, they analysed automated assessment construction, AI-assisted peer assessment, writing analytics, the role of electronic assessment platforms, stealth assessment, latent knowledge estimation, and the implications for learning processes. They also discussed the potential of AI to scaffold the transition from inauthentic to authentic and from antiquated to modern assessment, together with the challenges that arise where AI intersects with assessment design and with the experiences of both academics and students. These two papers provided a general, critical and in-depth analysis of the implications of AI for assessment, and thus support the validation process in terms of principles and scientific evidence. At the more concrete level of AI applications in assessment, our model is also supported by several international reports, such as:

•

“Assessment ideas for an AI-enabled world”, JISC [24]: the report offers practical examples of how to make the most of AI in assessment, categorising the assessment process itself and focusing on specific related domains (1. Authenticity; 2. Challenge; 3. Product; 4. Learning; 5. Staff demand; 6. Lifelong Learning).

•

“101 creative ideas to use AI in education, A crowdsourced collection” by Nerantzi and colleagues [42]: a collection of general ideas for designing and introducing AI in the educational process, including specific assessment practices in the Higher Education context, which promotes different approaches to using AI to enhance the experience of both students and university teachers.

These works, already established and recognised by the academic community, serve as comparative models of design, implementation and reflection for the AI-MAAS model. After a further process of formal validation through a Delphi study and direct classroom experimentation, the model could become a valid tool to support the development of innovative and contemporary assessment processes.

4 Results and discussions

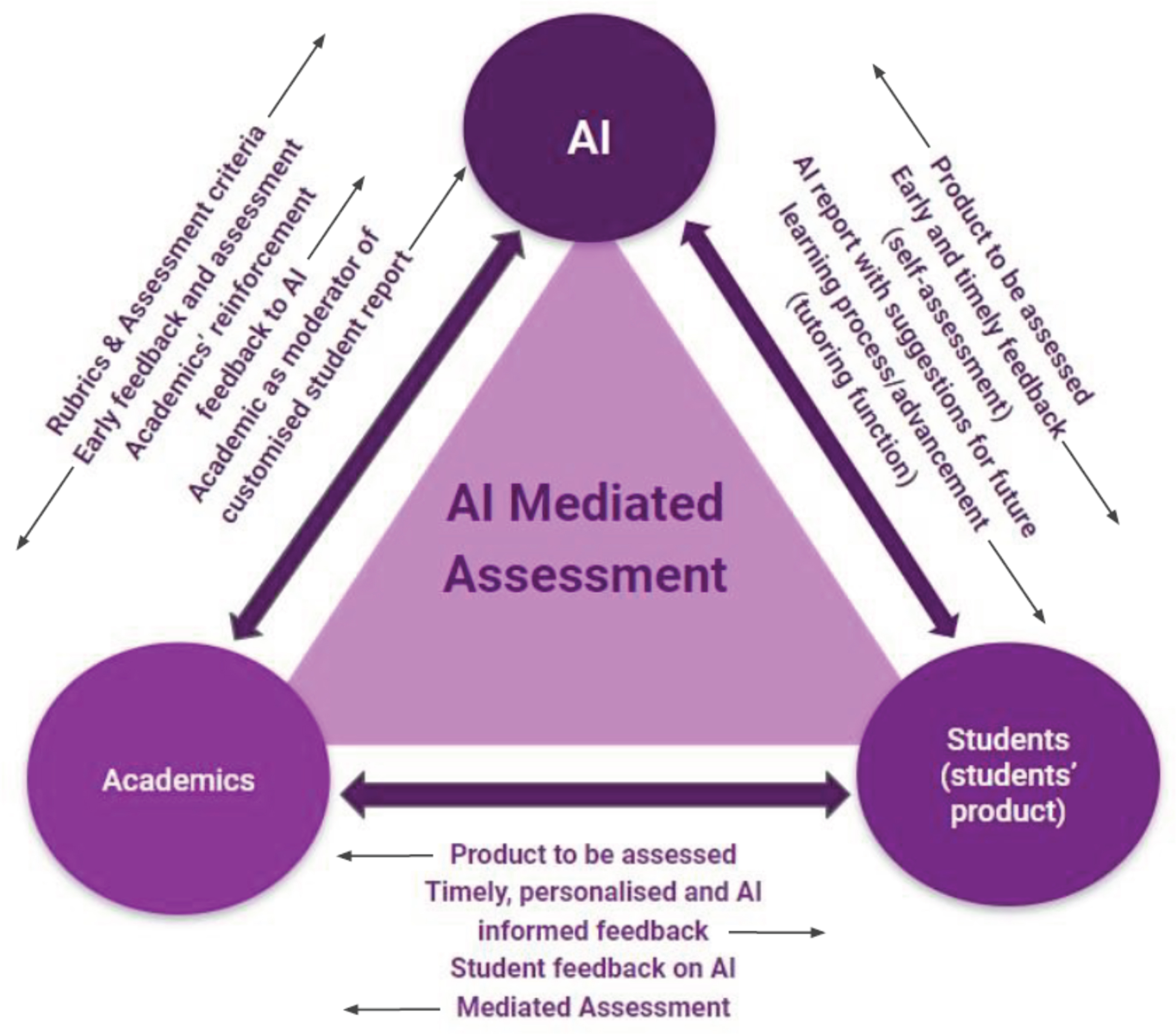

Starting from JISC [66] and UNESCO guidelines [38], we developed our model called AI-MAAS (AI-Mediated Assessment Academics and Students), composed of two different layers of application and interpretation: one focused on the implementation of AI-Enhanced Summative processes (AI-MAAS/S) and one on AI-Enhanced Formative Assessment processes (AI-MAAS/F). The model revolves around three focal points, AI, teachers and students, and their cyclic and balanced intersection, following Vygotskij and Leont’ev’s model of artefact mediation [9]. The AI Mediated Summative Assessment layer (Fig. 1) describes the three elements and the connections between them as follows:

•

AI:

◦

Constructive role: AI can help teachers construct and deliver early feedback and assessment. In turn, academics can define and share rubrics and assessment criteria to scaffold the assessment process.

◦

Feedback mechanism: academics play a key role as actors who can give reinforcement feedback to the AI system itself, in order to continuously improve the jointly developed evaluation processes.

◦

Evaluation and Reporting: the relationship between AI and students is characterised by students submitting the products to be assessed and AI producing specific reports that contain suggestions for improving learning. AI thus acts as a tutor that shares early and timely feedback, supported by the academics’ expertise.

•

Academics:

◦

Expertise provision: academics act as experts who build and share tailored information to support AI actions.

◦

Feedback management: academics as professionals who are able to manage timely, personalised and AI-informed feedback.

•

Students (and students’ products):

◦

Product creation: students as crucial actors able to build specific products to be assessed thanks to the collaboration between academics and AI.

◦

Guidance role: students as important elements in guiding the AI Mediated Assessment processes with focussed feedback.

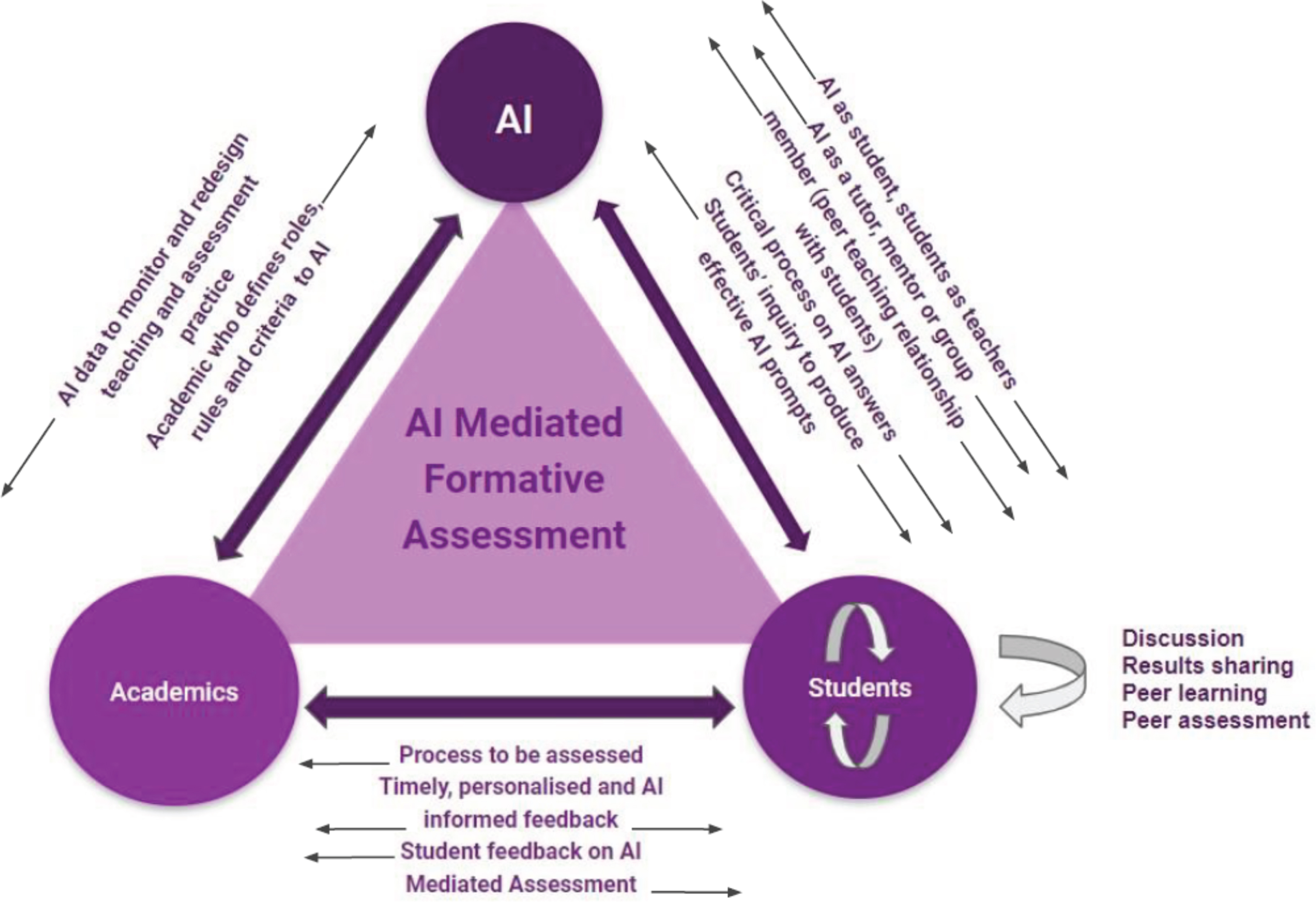

The AI Mediated Formative Assessment layer (Fig. 2) describes the three elements and the connections between them as follows:

•

AI:

◦

Constructive role: the relationship between AI and academics is set up with a dynamic process of exchange in terms of expertise, resources and tasks.

◦

Feedback mechanism: the data produced by AI can be fundamental for monitoring and redesigning academics’ teaching and assessment practice.

◦

Evaluation and Reporting: AI can play the role of a student, and students can act as teachers who support and prompt the AI; at the same time, the AI can play the role of tutor, mentor or group member (a peer teaching relationship with students).

•

Academics:

◦

Expertise Provision: in connection with AI, academics define roles, rules and criteria for the AI itself.

◦

Feedback management: in terms of relationships with students, academics pay attention to the assessment of the whole learning process, giving timely, personalised and AI-informed feedback.

•

Students (and students’ products):

◦

Constructive role: students can think critically about AI answers, stimulating deep and complex reflective processes through specific inquiries that produce effective insights for the AI. At the same time, students can discuss together the results produced by academics and AI, activating peer learning and assessment processes.

◦

Guidance role: students can generate feedback on AI Mediated Assessment itself.

As previously mentioned, the model will be assessed and validated using evidence from comparison, literature and practice, and following the Activity Theory checklist [28] during the design, validation and experimentation processes.

4.1 Practical implementations of the AI-MAAS Model—Summative Layer (AI-MAAS/S)

AI can serve as a pivotal mediating artefact in summative assessment, connecting academics, students and the assessment of students’ work. When academics define specific parameters, such as rubrics and assessment criteria, to guide it, AI has the potential to make feedback and assessment more efficient and relevant. This model underscores the interaction between academics and AI in crafting early feedback mechanisms and refining assessment processes, including academics’ contributions to fine-tuning the AI for continuous improvement.

The relationship between AI and students is centred on the assessment of students’ products, where AI plays a crucial role in providing early and timely feedback. This facilitates a more dynamic and responsive assessment cycle, allowing for self-assessment, adjustments and improvements in students’ work based on AI-generated insights. This adds formative value to summative assessment.

Moreover, the academic-student interaction is enriched through mediated assessment, where AI supports the creation of assessment materials and step-by-step evaluation processes.

The synergy among these components (academics’ expertise in defining assessment criteria, AI’s capacity to process and assess student products, and the mediated interaction between academics and students) creates a robust framework for summative assessment. This model is intended not only to accelerate the feedback loop but also to align assessments more closely with learning objectives and student needs, ultimately enhancing the educational experience and learning outcomes.
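As an illustration of how academics’ rubrics and criteria might be embedded in the instructions given to a general-purpose LLM, the following Python sketch assembles a rubric-guided assessment prompt. The `Criterion` structure, the function name `build_assessment_prompt` and the prompt wording are hypothetical assumptions, not the instruments used in the studies discussed in this section; sending the prompt to a model is deliberately left out, since that depends on the provider’s own client library.

```python
from dataclasses import dataclass, field


@dataclass
class Criterion:
    """One rubric criterion defined by the academic (hypothetical structure)."""
    name: str
    description: str
    levels: dict[int, str] = field(default_factory=dict)  # score -> level descriptor


def build_assessment_prompt(criteria: list[Criterion], student_text: str) -> str:
    """Assemble a rubric-guided prompt; the wording is an illustrative assumption."""
    lines = [
        "You are assisting a university instructor with assessment.",
        "Assess the student's work strictly against the rubric below.",
        "For each criterion, give a score and a short, actionable comment.",
        "",
        "RUBRIC:",
    ]
    for criterion in criteria:
        lines.append(f"- {criterion.name}: {criterion.description}")
        # List performance levels from lowest to highest score.
        for score in sorted(criterion.levels):
            lines.append(f"    {score} = {criterion.levels[score]}")
    lines += ["", "STUDENT WORK:", student_text.strip()]
    return "\n".join(lines)


# Hypothetical rubric with a single criterion, for illustration only.
rubric = [
    Criterion(
        name="Clarity of objectives",
        description="The learning objectives are explicit and measurable.",
        levels={1: "absent", 2: "partially stated", 3: "clear and measurable"},
    )
]
prompt = build_assessment_prompt(rubric, "  My intervention aims to ...  ")
print(prompt)
```

Keeping the rubric as structured data rather than free text makes it straightforward for academics to revise criteria between iterations and to apply the same criteria to both human and AI assessment.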

Three studies are presented here as research evidence supporting the structure of the AI-MAAS/S model. The first was specifically designed to support and validate the model, while the second and third are consistent with it and are included as further evidence.

Among the various experiments being conducted to validate and support the AI-MAAS model, one of the most critical for the summative layer concerns the capability of LLMs to assess students’ written products [1]. This study investigates which of the most advanced models can follow an assessment rubric and evaluate as closely as possible to human expert evaluators. The products evaluated were written designs of educational interventions created by 88 students of a support teacher specialisation programme. Following a rubric is of great importance for coherent assessment, especially when assessing authentic assignments. This study involves the following axes and elements of the AI-MAAS model:

•

Academics ←→ AI: Rubrics and Assessment Criteria, Early feedback and assessment

•

AI ←→ Students: Product to be assessed

•

Academics ←→ Students: Mediated Assessment

The study found that OpenAI’s ChatGPT-4 and Anthropic’s Claude 2 were the closest match to expert human assessment. Moreover, on several occasions they were closer to one human evaluator than the other human evaluator was. The average accuracy relative to human evaluation was 80% for ChatGPT-4 and 79% for Claude 2, the same level of agreement that the two human expert evaluators had with each other.

Only on the most complex criteria were the human evaluators more consistent with each other than the LLMs. Criteria such as “quality of assessment design” are something that generalist LLMs cannot yet assess properly. This emphasises the importance of human oversight to ensure the reliability and educational alignment of LLMs.
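The agreement figures above depend on how “accuracy” is operationalised, which is not detailed here. The sketch below assumes one simple definition, the share of rubric criteria on which two raters give the same score, optionally within a tolerance; the function name `agreement` and all score lists are invented for illustration and are not data from the study. Chance-corrected indices such as Cohen’s kappa are a common alternative.

```python
def agreement(scores_a: list[int], scores_b: list[int], tolerance: int = 0) -> float:
    """Share of criteria on which two raters' scores differ by at most `tolerance`."""
    if len(scores_a) != len(scores_b) or not scores_a:
        raise ValueError("need two non-empty score lists of equal length")
    matches = sum(abs(a - b) <= tolerance for a, b in zip(scores_a, scores_b))
    return matches / len(scores_a)


# Hypothetical scores for one student product on ten criteria (0-3 scale).
human_1 = [3, 2, 2, 1, 3, 0, 2, 3, 1, 2]
human_2 = [3, 2, 1, 1, 3, 1, 2, 3, 1, 2]
llm = [3, 2, 2, 1, 2, 0, 2, 3, 2, 2]

print(agreement(llm, human_1))               # 0.8
print(agreement(human_1, human_2))           # 0.8
print(agreement(llm, human_2))               # 0.6
print(agreement(llm, human_2, tolerance=1))  # 1.0
```

Computing the LLM’s agreement with each human rater alongside the agreement between the two humans gives the kind of comparison described in the study: the human-human figure acts as a reference level for judging the LLM.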

Even better results on this front were achieved by Martin and colleagues [36], who worked on summative assessment starting from two observations: the need to assign reasoning, conceptualisation and processing tasks, and the fact that grading large quantities of open-ended responses and tasks often proves unsustainable. Their work involves the following axes:

•

Academics ←→ AI: Rubrics and Assessment Criteria, Early feedback and assessment, Academics’ Feedback to AI

•

AI ←→ Students: Product to be assessed

They employed machine learning (ML) to create and refine a holistic scoring rubric for evaluating students’ argument complexity when judging the plausibility of competing chemical reactions. In this case, a close match between human scores and the LLM scores was achieved, with an accuracy between 85% and 90%.

Martin and colleagues used a complex approach that went well beyond prompting a standard chat-based LLM. They employed HDBSCAN (Hierarchical Density-Based Spatial Clustering of Applications with Noise) to cluster student topics, mapped the results onto structures, and manually assigned scores to all topics based on the rubric, creating a labelled dataset. They then compared the performance of several pre-trained language models based on Bidirectional Encoder Representations from Transformers [11] (BERT, RoBERTa, SciBERT) in classifying topics into the 20 rubric categories, and found that BERT Large Uncased performed best. Lastly, they trained a deep neural network classifier on the labelled dataset to automate the scoring of new topics based on the rubric, and validated the model using techniques such as generating artificial topics.

This excellent result was produced by models trained on a specific task and population. It should therefore be noted that the procedure, in its current form, cannot be expected to be used by teachers who are not specialised in machine learning.
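To give a concrete sense of the final step of Martin and colleagues’ pipeline, where a classifier trained on the labelled dataset assigns new student topics to rubric categories, the sketch below uses a deliberately simple stand-in: bag-of-words vectors and a nearest-centroid classifier based on cosine similarity, in place of the BERT embeddings and deep neural network used in the study. The category labels and example texts are hypothetical, and no claim is made about how this toy approach would perform on real data.

```python
import math
from collections import Counter


def vectorise(text: str) -> Counter:
    """Bag-of-words term counts: a crude stand-in for BERT embeddings."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def train_centroids(labelled: list[tuple[str, str]]) -> dict[str, Counter]:
    """Accumulate term counts per rubric category from (text, category) pairs.

    Summed counts point in the same direction as the mean vector, which is all
    that cosine similarity depends on, so no division by class size is needed.
    """
    centroids: dict[str, Counter] = {}
    for text, category in labelled:
        centroids.setdefault(category, Counter()).update(vectorise(text))
    return centroids


def classify(text: str, centroids: dict[str, Counter]) -> str:
    """Assign the rubric category whose centroid is most similar to the text."""
    vector = vectorise(text)
    return max(centroids, key=lambda category: cosine(vector, centroids[category]))


# Hypothetical labelled topics; the study used 20 rubric categories, not 2.
labelled = [
    ("the reaction happens because the product is more stable", "causal"),
    ("more stable product so the reaction is favoured", "causal"),
    ("the reaction happens", "descriptive"),
    ("a reaction occurs", "descriptive"),
]
centroids = train_centroids(labelled)
print(classify("the product is more stable so it forms", centroids))  # causal
print(classify("a reaction occurs here", centroids))  # descriptive
```

The structure (label a dataset against the rubric, learn one representation per category, score new responses by similarity) is what carries over; the representation and classifier are where the study’s specialised models do the heavy lifting.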

A third example, from Koraishi [31], addresses the use of ChatGPT for teaching and assessing English as a Foreign Language (EFL) classes. His practice spans the following AI-MAAS axes:

•

Academics ←→ AI: Early feedback and assessment, step-by-step AI-supported assessment, AI-supported creation of assessment materials

•

AI ←→ Students: Product to be assessed, Early and timely feedback (placement assessment), AI Report for improvements

•

Academics ←→ Students: Mediated Assessment

In this article, various practical applications of ChatGPT are presented and discussed, particularly concerning assessment and feedback. The use cases include LLM support for creating tests, generating feedback, personalising assessments and producing reports. The author makes careful use of the model’s strongest affordances, which lie in the English language domain. This author also notes, however, that the entire process should be supervised rather than relying solely on LLM evaluation.

4.2 Practical Implementations of the AI-MAAS Model—Formative Layer (AI-MAAS/F)

AI could be used for formative assessment by drawing on the virtuous connection between academics, students and AI itself. If academics introduce specific parameters to guide the AI through the formative assessment experience, it could be possible to create a complex monitoring system that maximises students’ learning experience. Starting from the direct action of university professors, who act as experts in defining roles, criteria and rules for the AI, it is possible to develop a system that helps customise the collection of AI-produced data in order to monitor and redesign the teaching, learning and assessment process. Students, for their part, could take part in prompting the AI in collaboration with academics: they could co-construct rules and criteria to guide the formative assessment process, improving the timely feedback the AI produces on their work. This process might be a valid way to strengthen the connection between the three actors of educational practice, while fostering comparison and self-assessment mechanisms in both students and teachers. AI can then act as a student and students as teachers, sharing in the construction and delivery of assessment and feedback prompts. These practices can empower assessment practice, not only through the outputs produced by the AI, but also by making the assessment moments of university teachers and students sustainable and cooperative. At the same time, these mechanisms can foster critical evaluative competences in learners and accompany academics in critically revising their practice and their own parameters of judgement, making assessment participatory, shared and effective.
These actions belong to the formative assessment domain of the model and establish a complete connection between the various elements of the model and of the educational process in general. Formative assessment is a tool for monitoring and progressively supporting the learning experience. This approach can support students along the way, while promoting cross-cutting competences and integrating into teaching design important Higher Education concepts such as student partnership [10], and therefore a holistic, student-centred approach to teaching, learning and assessment [67].

4.2.1 AI formative feedback

The study by Liao and colleagues [33] focuses specifically on the AI ←→ Students axis of the AI-MAAS/F model, and in particular on the elements of AI as a tutor and formative feedback. The research introduces an AI-enabled visual report tool designed to produce data-driven, accessible reports aimed at enhancing educational outcomes. The design is quasi-experimental, with a pre-test and a post-test, and includes an experimental group and a control group of 62 and 63 students respectively, enrolled in a biology class in a higher secondary school. Overall, the AI-enabled reports were found to improve learning achievement and foster self-regulated learning over time. On the other hand, they also increased students’ test anxiety. The study nonetheless shows that such tools have the potential to boost self-efficacy in situations where conventional teacher feedback is hardly sustainable. The AI-generated reports proved as effective as the teachers’ reports, with the added advantage of significantly enhancing students’ confidence in their abilities. The experimental group scored higher than the control group in the final test, even though it started from a lower pre-test score. The evidence from this study suggests that such tools are particularly suited to large classes, where their benefits for learning achievement and self-regulated learning can be maximised. By leveraging data-driven insights, these tools can complement and, in some cases, enhance the feedback provided by human instructors, offering a scalable solution for personalised education. This research underscores the importance of integrating technology into educational strategies, aiming not only to improve academic outcomes but also to empower students in their learning processes.

4.2.2 AI as a peer in the educational design process

This section presents a not yet published study that provides research evidence supporting the structure of the AI-MAAS/F model. The experiment was one of the studies explicitly conducted to validate the model.

The example comes from an experiment in a course for primary and secondary school teachers specialising in supporting students with specific learning needs and/or disabilities at different levels of education. The class consisted of 102 teachers attending the “instructional design with the use of ICT for inclusion” course, whose lessons were characterised by strongly interactive and practical teaching activities.

The experiment took place during the completion of an authentic task regarding the design of an inclusive teaching activity using ICT. The goal was for most participants to achieve specific competence-based learning outcomes. In this context, AI was intended to serve as a peer, instrumental in fostering processes of cognitive dissonance, critical thinking and self-assessment.

Participants were divided into groups of four or five, who were asked in an initial phase of group work to: 1. select the most appropriate technological tool to design their educational intervention and 2. design the intervention itself, which would then be presented in plenary at the end of the design process. Half of the groups could use ChatGPT-3.5 for the first task and the other half for the second. Students were invited to interact with ChatGPT as if it were a peer and an active group member, a practice that falls within the following axes of AI-MAAS/F:

•

Academics ←→ AI: Academics define roles, rules and criteria for the AI

•

AI ←→ Students: AI as group member; critical process on AI answers; students’ inquiry to produce effective AI prompts

•

Academics ←→ Students: Academics define roles, rules and criteria on how to employ AI; students’ feedback on AI
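The grouping in this experiment (102 participants in groups of four or five, with half of the groups using ChatGPT-3.5 for the tool-selection task and the other half for the design task) can be reproduced with a short procedure. How groups and conditions were actually formed is not reported, so the seeded random assignment below, like the function names, participant identifiers and condition labels, is a hypothetical assumption of the sketch.

```python
import math
import random


def make_groups(participants: list[str], max_size: int = 5, seed: int = 0) -> list[list[str]]:
    """Shuffle participants, then deal them round-robin into ceil(n / max_size) groups.

    Group sizes differ by at most one; with n = 102 and max_size = 5 this gives
    21 groups of four or five. For some small n, groups may fall below four.
    """
    rng = random.Random(seed)
    pool = list(participants)
    rng.shuffle(pool)
    n_groups = math.ceil(len(pool) / max_size)
    groups: list[list[str]] = [[] for _ in range(n_groups)]
    for i, person in enumerate(pool):
        groups[i % n_groups].append(person)
    return groups


def assign_conditions(n_groups: int, seed: int = 0) -> dict[int, str]:
    """Randomly give half of the groups AI access for task 1, the rest for task 2.

    With an odd number of groups, the second condition receives the extra group.
    """
    rng = random.Random(seed)
    order = list(range(n_groups))
    rng.shuffle(order)
    half = n_groups // 2
    return {
        group: "AI for task 1 (tool selection)" if rank < half else "AI for task 2 (design)"
        for rank, group in enumerate(order)
    }


participants = [f"P{i:03d}" for i in range(1, 103)]  # hypothetical identifiers
groups = make_groups(participants)
conditions = assign_conditions(len(groups))
print(len(groups), sorted({len(g) for g in groups}))  # 21 [4, 5]
```

Dealing shuffled participants round-robin, rather than cutting the list into fixed-size chunks, keeps every group at four or five members for this class size instead of leaving one small remainder group.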

This experiment yielded notable results and feedback for supporting and tuning the AI-MAAS/F model, especially regarding the approaches students may adopt when relating to an AI in a group (an AI-in-the-loop). The main approach was to consult the “AI member” in the brainstorming phase and, sometimes, in a final summarising phase. Several groups also gave it the role of “Prioritiser”, focusing the work on the most important issues. On the other hand, the AI member was relegated to these roles only: in most groups it was not kept up to date, step by step, with the group discussion. Participants’ feedback highlights that several groups initially relied on ChatGPT as a subject-matter expert, or an “oracle”, but, thanks to discussion with the other members, applied critical thinking to the AI’s opinions and came up with better solutions with the help of the human group members.

This experience helped promote the use of AI tools as more than an “oracle”, giving the groups the possibility of integrating these systems into the creative, authentic process through a reflective design flow. At the same time, it scaffolded specific competences in students and activated processes of comparison between their work and the responses given by the AI tools, scaffolding self-assessment and inner feedback processes [44].

5 Conclusions and future research actions

Given the opportunities connected to the use of AI in education from the perspectives of both students and teachers [29], it is important to understand how best to include these new opportunities to enhance teaching, learning and assessment processes in Higher Education contexts. For this purpose, our research is situated in an academic environment that must cope with constant renewal of approaches and strategies in order to deal with major change at the design, organisational and conceptual levels. With respect to assessment and feedback from the perspective of assessment for learning, sustainability and authenticity [4, 52], it is important to reflect on and design specific formative and practical actions to support students and teachers in implementing AI systems as powerful agents supporting the progress of the educational system.

The studies analysed to validate the model appear to support its different layers (Summative and Formative), as well as its axes and modes of educational interaction between academics, students and AI. Given this supporting evidence, we recommend putting the model into practice in different contexts. The authors would welcome any feedback.

As for future research, the planned actions include validating the AI-MAAS model through a Delphi study, followed by experimentation with academics using the model and a subsequent phase of impact analysis and efficacy assessment. The Delphi study will involve 30 experts in the field of Education, with a specific focus on Assessment and AI in the Higher Education context. The Delphi survey will collect the opinions of this group of experts across multiple discussion rounds on a specific topic, with the rounds administered sequentially through dedicated software. This structured group communication process should bring out convergence or divergence of opinions, producing a more dynamic and accurate collection of data [3, 14]. Furthermore, the use of the AI-MAAS model will be tested in several other authentic educational settings, through an iterative redesign of the learning and assessment experience following the principles of action research. The Delphi process will be divided into the following steps:

1.

Defining and recruiting experts.

2.

Developing Delphi questionnaire and online implementation (e-Delphi).

3.

Round 1: first collection of the data from the experts, based on the questionnaire’s prompts.

4.

Analysis and design of Round 2: the results are analysed, focusing on the issues on which consensus has not been reached. Feedback on the results allows the experts to compare their initial opinions with the group result. Additional rounds are organised until an acceptable level of consensus, free of open issues or controversies, is achieved [8].
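Step 4 requires an operational definition of an “acceptable level of consensus”, which is not specified here. One criterion often used in Delphi studies is an interquartile range (IQR) of at most 1 on a Likert-type item; the sketch below assumes that rule and a 5-point scale, computes the group feedback (median and IQR) returned to the experts, and lists the items to carry into the next round. The function names, item names and ratings are hypothetical.

```python
from statistics import median, quantiles


def iqr(ratings: list[int]) -> float:
    """Interquartile range using the 'inclusive' quartile method."""
    q1, _, q3 = quantiles(ratings, n=4, method="inclusive")
    return q3 - q1


def group_feedback(ratings_by_item: dict[str, list[int]]) -> dict[str, tuple[float, float]]:
    """Median and IQR per item, returned to the experts before the next round."""
    return {item: (median(r), iqr(r)) for item, r in ratings_by_item.items()}


def items_for_next_round(ratings_by_item: dict[str, list[int]], max_iqr: float = 1.0) -> list[str]:
    """Items whose ratings have not yet reached consensus (IQR above the threshold)."""
    return [item for item, r in ratings_by_item.items() if iqr(r) > max_iqr]


# Hypothetical Round 1 ratings from eight experts on a 5-point agreement scale.
round_1 = {
    "Q1": [5, 4, 5, 4, 4, 5, 4, 5],
    "Q2": [2, 5, 3, 4, 1, 5, 2, 4],
}
print(group_feedback(round_1))        # {'Q1': (4.5, 1.0), 'Q2': (3.5, 2.25)}
print(items_for_next_round(round_1))  # ['Q2']
```

Other consensus rules (for example, a minimum percentage of experts rating an item 4 or 5) can be substituted in `items_for_next_round` without changing the rest of the round logic.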

Acknowledgments

The authors wish to express their gratitude to Prof. Anna Serbati of the University of Trento for her insightful and valuable advice, which contributed to the success of this work.

Conflict of interest

The authors declare that they have no conflict of interest.

References

1. Agostini D., Are large language models capable of assessing students’ written products? Research Trends in Humanities Education & Philosophy 11 (2024), 38–60.

2. Ashby W.R., An introduction to cybernetics, Chapman & Hall, London, 1956.

3. Beiderbeck D., Frevel N., von der Gracht H.A., Schmidt S.L. and Schweitzer V.M., Preparing, conducting, and analyzing Delphi surveys: Cross-disciplinary practices, new directions, and advancements, MethodsX 8 (2021), 101401.

4. Boud D., Sustainable assessment: rethinking assessment for the learning society, Studies in Continuing Education 22(2) (2000), 151–167.

5. Chai F., Ma J., Wang Y., Zhu J. and Han T., Grading by AI makes me feel fairer? How different evaluators affect college students’ perception of fairness, Frontiers in Psychology 15 (2024), 1221177.

6. Chang Y., Wang X., Wang J., Wu Y., Yang L., Zhu K. and Xie X., A survey on evaluation of large language models, ACM Transactions on Intelligent Systems and Technology 15(3) (2024), 1–45.

7. Chen L., Zaharia M. and Zou J., How is ChatGPT’s behavior changing over time? arXiv preprint arXiv:2307.09009 (2023).

8. Chuenjitwongsa S., How to conduct a Delphi study, Medical Education (2017).

9. Cong-Lem N., Vygotsky’s, Leontiev’s and Engeström’s cultural-historical (activity) theories: Overview, clarifications and implications, Integrative Psychological and Behavioral Science 56(4) (2022), 1091–1112.

10. Cook-Sather A., Bovill C. and Felten P., Engaging students as partners in learning and teaching: A guide for faculty, John Wiley & Sons, Hoboken, NJ, 2014.

11. Devlin J., Chang M.W., Lee K. and Toutanova K., BERT: Pre-training of deep bidirectional transformers for language understanding, arXiv preprint arXiv:1810.04805 (2018).

12. Engelbart D.C., Augmenting human intellect: A conceptual framework, SRI Summary Report AFOSR-3223 (1962).

13. Engeström Y., Activity theory and expansive design, in: Theories and practice of interaction design, S. Bagnara and G. Crampton Smith, eds., Lawrence Erlbaum Associates, Mahwah, NJ, 2006, pp. 3–23.

14. Gnatzy T., Warth J., von der Gracht H. and Darkow I.L., Validating an innovative real-time Delphi approach - A methodological comparison between real-time and conventional Delphi studies, Technological Forecasting and Social Change 78(9) (2011), 1681–1694.

15. González-Calatayud V., Prendes-Espinosa P. and Roig-Vila R., Artificial intelligence for student assessment: A systematic review, Applied Sciences 11(12) (2021), 5467.

16. Grion V. and Serbati A., Valutazione sostenibile e feedback nei contesti universitari. Prospettive emergenti, ricerche e pratiche, PensaMultimedia, Lecce, 2019.

17. Gulikers J.T., Bastiaens T.J. and Kirschner P.A., A five-dimensional framework for authentic assessment, Educational Technology Research and Development 52(3) (2004), 67–86.

18. Herrington J. and Herrington A., Authentic assessment and multimedia: How university students respond to a model of authentic assessment, Higher Education Research & Development 17(3) (1998), 305–322.

19. Hoffmann J., Borgeaud S., Mensch A., Buchatskaya E., Cai T., Rutherford E. and Sifre L., An empirical analysis of compute-optimal large language model training, in: Advances in Neural Information Processing Systems, vol. 35, Curran Associates, Inc., Red Hook, NY, 2022, pp. 30016–30030.

20. Holmes W., Bialik M. and Fadel C., Artificial intelligence in education: Promises and implications for teaching & learning, The Center for Curriculum Redesign, Boston, MA, 2019.

21. Holmes W. and Tuomi I., State of the art and practice in AI in education, European Journal of Education 57(4) (2022), 542–570.

22. Huang B., Chen C. and Shu K., Can large language models identify authorship? arXiv preprint arXiv:2403.08213 (2024).

23. Huvila I., Anderson T.D., Jansen E.H., McKenzie P. and Worrall A., Boundary objects in information science, Journal of the Association for Information Science and Technology 68(8) (2017), 1807–1822.

24. JISC, Assessment ideas for an AI-enabled world, 2023. https://repository.jisc.ac.uk/9234/1/assessment-ideas-foran-ai-enabled-word.pptx.

25. Jonassen D.H., Carr C. and Yueh H.-P., Computers as mindtools for engaging learners in critical thinking, TechTrends 43 (1998), 24–32.

26. Kamalov F., Santandreu Calonge D. and Gurrib I., New era of artificial intelligence in education: Towards a sustainable multifaceted revolution, Sustainability 15(16) (2023), 12451.

27. Kaplan J., McCandlish S., Henighan T., Brown T.B., Chess B., Child R., Gray S., Radford A., Wu J. and Amodei D., Scaling laws for neural language models, arXiv preprint arXiv:2001.08361 (2020).

28. Kaptelinin V. and Nardi B., Acting with Technology: Activity Theory and Interaction Design, MIT Press, Cambridge, MA, 2006.

29. Kasneci E., Sessler K., Küchemann S., Bannert M., Dementieva D., Fischer F., Gasser U., Groh G., Günnemann S., Hüllermeier E., Krusche S., Kutyniok G., Michaeli T., Nerdel C., Pfeffer J., Poquet O., Sailer M., Schmidt A., Seidel T., Stadler M., Weller J., Kuhn J. and Kasneci G., ChatGPT for good? On opportunities and challenges of large language models for education, Learning and Individual Differences 103 (2023), 102274.

30. Koedinger K.R. and Corbett A., Cognitive tutors: Technology bringing learning sciences to the classroom, in: The Cambridge handbook of the learning sciences, R.K. Sawyer, ed., Cambridge University Press, New York, NY, 2006, pp. 61–77.

31. Koraishi O., Teaching English in the age of AI: Embracing ChatGPT to optimize EFL materials and assessment, Language Education and Technology 3(1) (2023).

32. Krugmann J.O. and Hartmann J., Sentiment analysis in the age of generative AI, Customer Needs and Solutions 11(1) (2024), 3.

33. Liao X., Zhang X., Wang Z. and Luo H., Design and implementation of an AI-enabled visual report tool as formative assessment to promote learning achievement and self-regulated learning: An experimental study, British Journal of Educational Technology (2024).

34. Malone T.W., How human-computer ‘superminds’ are redefining the future of work, MIT Sloan Management Review 59(4) (2018), 34–41.

35. Malone T.W., Superminds: The surprising power of people and computers thinking together, Little, Brown Spark, New York, NY, 2018.

36. Martin P.P., Kranz D., Wulff P. and Graulich N., Exploring new depths: Applying machine learning for the analysis of student argumentation in chemistry, Journal of Research in Science Teaching (2023), 1–36.

37. Miao F. and Holmes W., Guidance for generative AI in education and research, 2023. https://doi.org/10.54675/EWZM9535.

38. Miao F., Holmes W., Huang R. and Zhang H., AI and education: A guidance for policymakers, UNESCO Publishing, Paris, 2021.

39. Mollick E.R. and Mollick L., Assigning AI: Seven approaches for students, with prompts, 2023. https://doi.org/10.2139/ssrn.4475995.

40. Monarch R.M., Human-in-the-Loop Machine Learning: Active Learning and Annotation for Human-centered AI, Simon and Schuster, New York, NY, 2021.

41. Murphy V., Fox J., Freeman S. and Hughes N., “Keeping it Real”: A review of the benefits, challenges and steps towards implementing authentic assessment, All Ireland Journal of Higher Education 9(3) (2017).

42. Nerantzi C., Abegglen S., Karatsiori M. and Martínez-Arboleda A. (Eds.), 101 creative ideas to use AI in education, A crowdsourced collection, 2023. https://doi.org/10.5281/zenodo.8355454.

43. Nicol D.J. and Macfarlane-Dick D., Formative assessment and self-regulated learning: A model and seven principles of good feedback practice, Studies in Higher Education 31(2) (2006), 199–218.

44. Nicol D., Resituating feedback from the reactive to the proactive, in: Feedback in higher and professional education, D. Boud and E. Molloy, eds., Routledge, London, 2012, pp. 34–49.

45. Nieminen J.H., Bearman M. and Ajjawi R., Designing the digital in authentic assessment: is it fit for purpose? Assessment & Evaluation in Higher Education 48(4) (2023), 529–543.

46. OpenAI, Teaching with AI, 2023. https://openai.com/blog/teaching-with-ai.

47. Papert S. and Harel I., Situating constructionism, in: Constructionism, S. Papert and I. Harel, eds., Ablex Publishing Corporation, Norwood, NJ, 1991.

48. Roll I. and Wylie R., Evolution and revolution in artificial intelligence in education, International Journal of Artificial Intelligence in Education 26 (2016), 582–599.

49. Rouse W.B. and Spohrer J.C., Automating versus augmenting intelligence, Journal of Enterprise Transformation 8(1–2) (2018), 1–21.

50. Sadiku M.N. and Musa S.M., Augmented intelligence, in: A Primer on Multiple Intelligences, S.M. Musa, ed., Springer, Cham, 2021, pp. 191–199.

51. Sadler D.R., Formative assessment: Revisiting the territory, Assessment in Education 5(1) (1998), 77–84.

52. Sambell K., McDowell L. and Montgomery C., Assessment for learning in higher education, Routledge, London, 2013.

53. Sambell K., McDowell L. and Brown S., “But is it fair?”: An exploratory study of student perceptions of the consequential validity of assessments, Studies in Educational Evaluation 23(4) (1997), 349–371.

54. Scarlatos A., Smith D., Woodhead S. and Lan A., Improving the validity of automatically generated feedback via reinforcement learning, arXiv preprint arXiv:2403.01304 (2024).

55. Sun X., Li X., Zhang S., Wang S., Wu F., Li J. and Wang G., Sentiment analysis through LLM negotiations, arXiv preprint arXiv:2311.01876 (2023).

56. Swiecki Z., Khosravi H., Chen G., Martinez-Maldonado R., Lodge J.M., Milligan S., Selwyn N. and Gašević D., Assessment in the age of artificial intelligence, Computers and Education: Artificial Intelligence 3 (2022), 100075.

57. Tamkin A., Brundage M., Clark J. and Ganguli D., Understanding the capabilities, limitations and societal impact of large language models, arXiv preprint arXiv:2102.02503 (2021).

58. Tuomi I., The impact of artificial intelligence on learning, teaching and education, Publications Office of the European Union, Luxembourg, 2018.

59. UK Department for Education, Generative artificial intelligence (AI) in education, 2023. https://www.gov.uk/government/publications/generativeartificial-intelligence-ai-in-education/generative-artificialintelligence-ai-in-education.

60. UNICEF, Policy guidance on AI for children, 2021. https://www.unicef.org/globalinsight/media/2356/file/UNICEFGlobal-Insight-policy-guidance-AI-children-2.0-2021.pdf.

61. U.S. Department of Education, Office of Educational Technology, Artificial intelligence and the future of teaching and learning: Insights and recommendations, Washington, DC, 2023.

62. VanLehn K., The behavior of tutoring systems, International Journal of Artificial Intelligence in Education 16(3) (2006), 227–265.

63. Vaswani A., Shazeer N., Parmar N., Uszkoreit J., Jones L., Gomez A.N., Kaiser L. and Polosukhin I., Attention is all you need, in: Advances in Neural Information Processing Systems, vol. 30, Curran Associates, Inc., Red Hook, NY, 2017, pp. 5998–6008.

64. Villarroel V., Bloxham S., Bruna D., Bruna C. and Herrera-Seda C., Authentic assessment: Creating a blueprint for course design, Assessment & Evaluation in Higher Education 43(5) (2018), 840–854.

65. Vygotsky L.S., Mind in Society: The Development of Higher Psychological Processes, Harvard University Press, Cambridge, MA, 1978.

66. Webb M., A Generative AI primer, JISC, 2023. https://nationalcentreforai.jiscinvolve.org/wp/2023/05/11/generativeai-primer/#3-1.

67. Wright G.B., Student-centered learning in higher education, International Journal of Teaching and Learning in Higher Education 23(1) (2011), 92–97.

68. Wu X., Xiao L., Sun Y., Zhang J., Ma T. and He L., A survey of human-in-the-loop for machine learning, Future Generation Computer Systems 135 (2022), 364–381.

69. Xu S., Wu Z., Zhao H., Shu P., Liu Z., Liao W. and Li X., Reasoning before comparison: LLM-enhanced semantic similarity metrics for domain specialized text analysis, arXiv preprint arXiv:2402.11398 (2024).

70. Yan L., Sha L., Zhao L., Li Y., Martinez-Maldonado R., Chen G. and Gašević D., Practical and ethical challenges of large language models in education: A systematic scoping review, British Journal of Educational Technology 55(1) (2024), 90–112.

71. Zheng N.N., Liu Z.Y., Ren P.J., Ma Y.Q., Chen S.T., Yu S.Y. and Wang F.Y., Hybrid-augmented intelligence: collaboration and cognition, Frontiers of Information Technology & Electronic Engineering 18(2) (2017), 153–179.

Article first published online: July 16, 2024

Issue published: July 31, 2024
