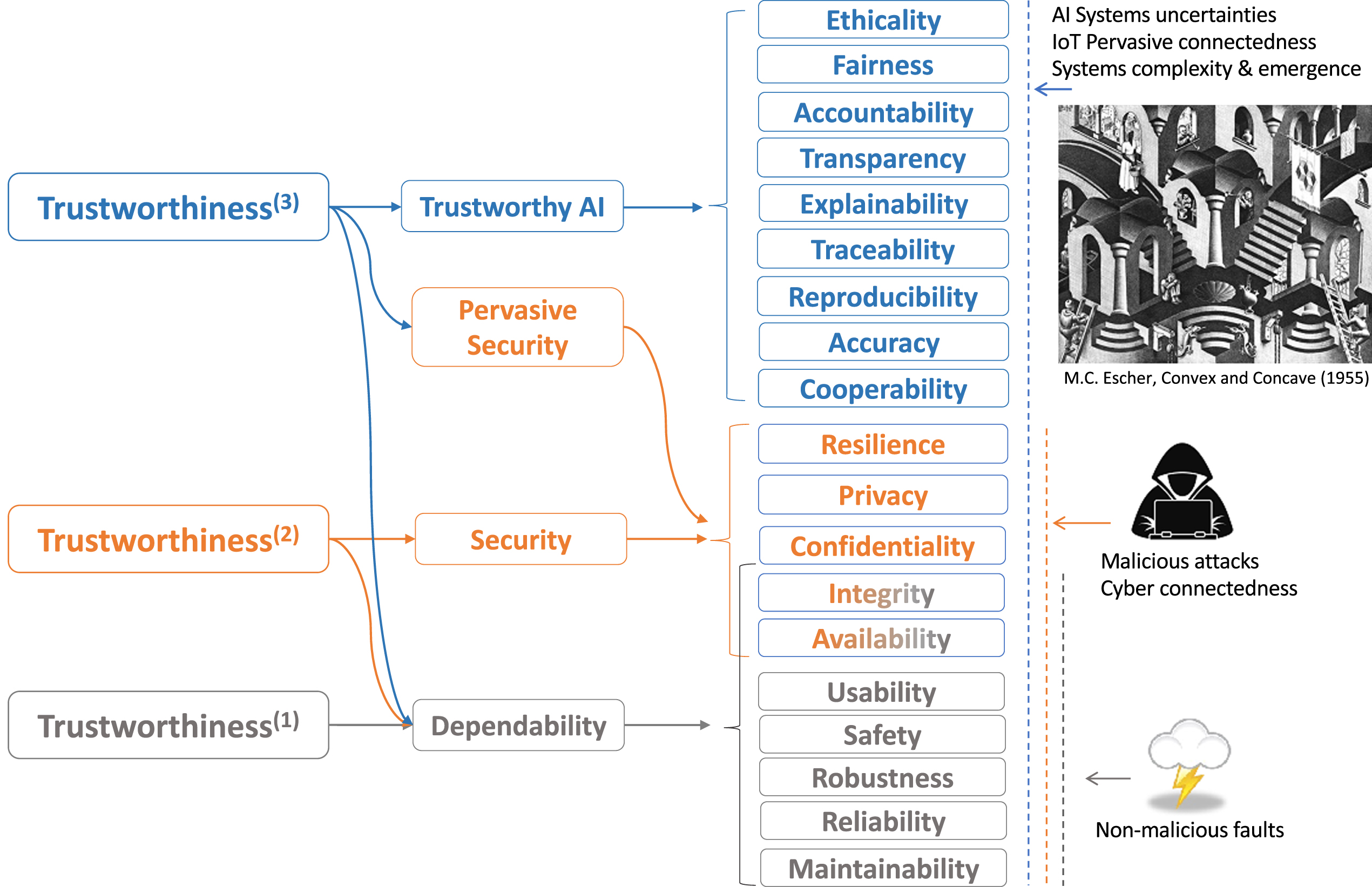

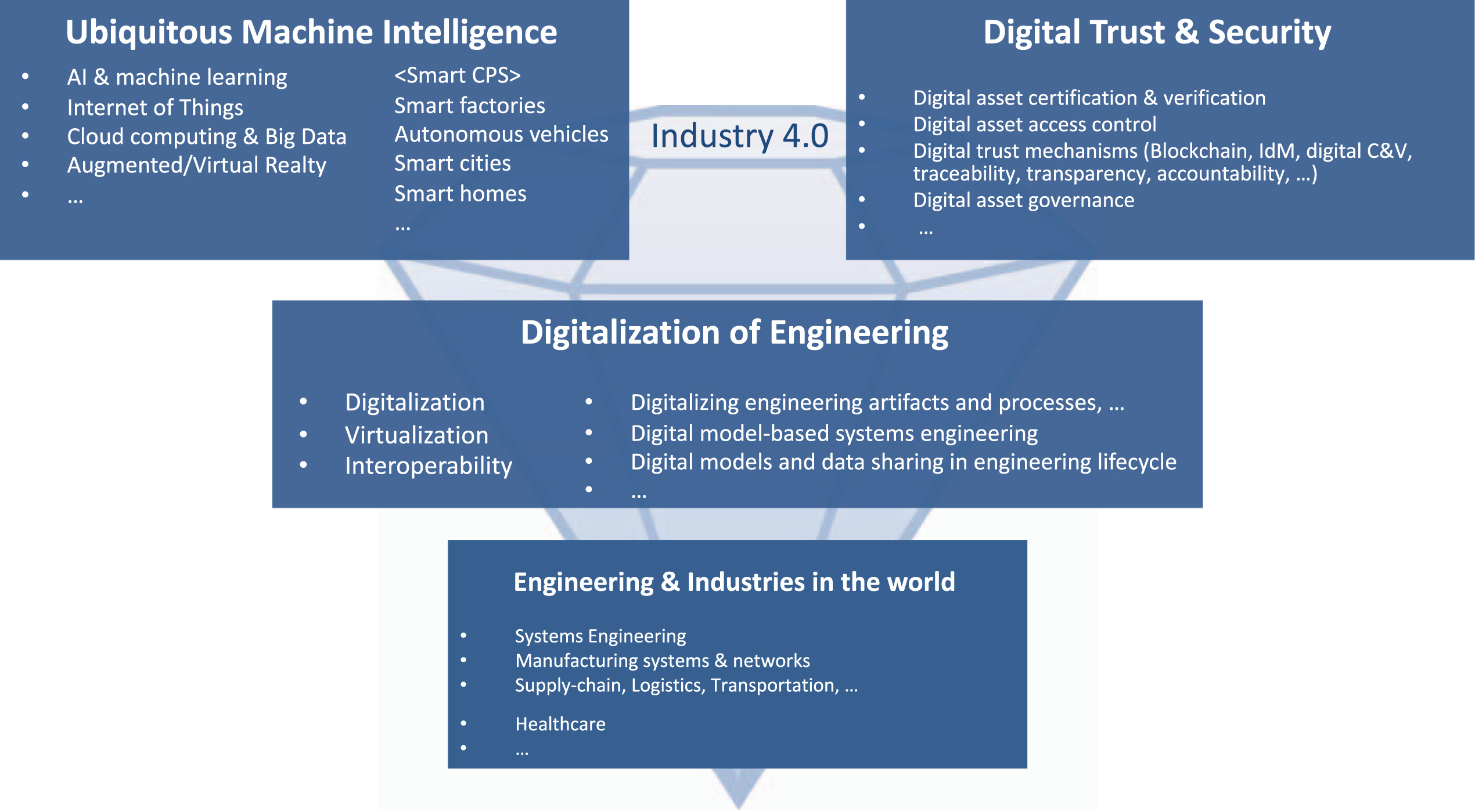

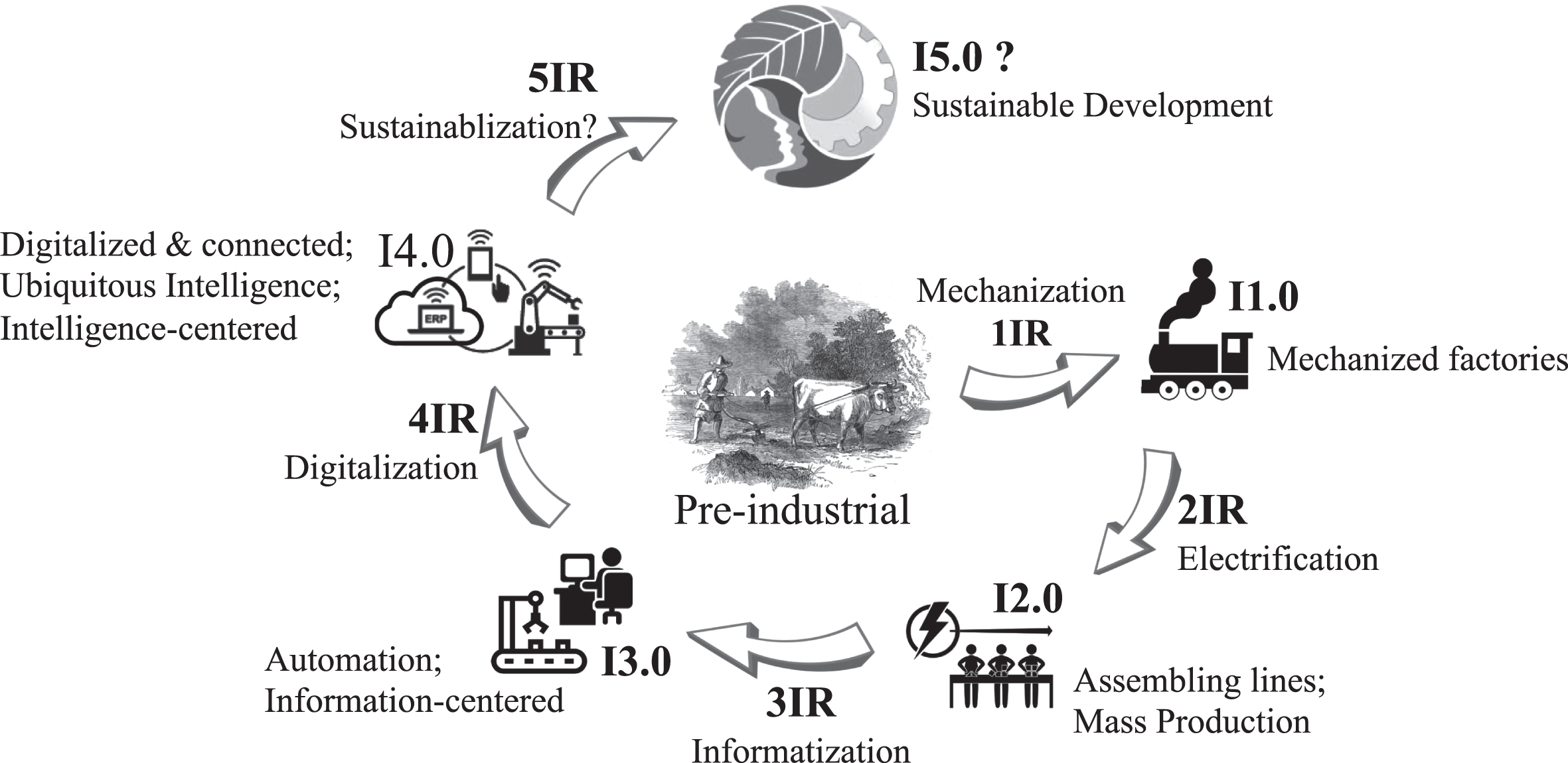

The disruptive digital technologies of the 4IR not only offer transformative opportunities but also bring uncertainties, and they have triggered broad concern about, and research on, system trustworthiness. The focus of these concerns has shifted and expanded to cover the new issues raised by the technologies used in each generation of engineering systems. This section discusses the trustworthiness concerns of Industry 4.0 systems, first examining the evolution of the trustworthiness of engineering systems and then focusing on trustworthy AI systems, the pivotal component of Industry 4.0 systems.

4.2 Trustworthy AI

In the last decade, AI has achieved remarkable advances. To name just a few, AlphaGo (Silver et al., 2018), which uses reinforcement learning based on deep neural networks, defeated the world's number one player of Go, a game regarded as the most challenging board game for computers to win. AlphaGo’s achievement marks a milestone for machine intelligence on the way towards superhuman intelligence on specific tasks. Waymo One, a commercial taxi service operating level 4 autonomous vehicles, has been offered on the streets of a city (Waymo One, 2020), although it is still a pilot project and has not yet been broadly adopted. This service marks a new level of integrated intelligent capabilities of an AI system on a type of complex task that previously only humans could do. Another remarkable advance is Generative Adversarial Networks (GANs) (Creswell et al., 2018; Goodfellow et al., 2020). A GAN consists of a pair of deep neural networks, a generator and a discriminator; together, they can generate realistic images and other content as requested (see examples at https://thispersondoesnotexist.com/). GANs lead to many potential applications but also open the door to deepfakes.

For many decades, the AI community has focused on extending the capabilities of machine intelligence. Although today’s advances in AI are still domain-specific (narrow AI) rather than general AI, the remarkable achievements, disruptive impacts, and fast-growing applications of AI systems have triggered concerns about, and research on, trustworthy AI systems. The concerns come mainly from two perspectives: (1) Ethical AI, which focuses on social effects and ethical considerations, that is, the profound effects AI systems have on individuals, groups, and society, and which advocates using AI to benefit humans as a central principle; and (2) Reliable AI, which focuses on technical performance, or dependability, that is, the competency of AI systems on technical matters. Across both perspectives, there is a broad range of expected trustworthiness properties of AI systems.

Ethical AI is an emerging interdisciplinary research field that brings together researchers from a wide range of areas, including computer science, philosophy, sociology, anthropology, public policy, and law. Thought leaders and researchers have conducted pioneering work in the field. The Association for the Advancement of Artificial Intelligence (AAAI) organized a panel of leading AI researchers that conducted the “Asilomar Study of Long-Term AI Futures” in 2008. The study explored a wide range of topics on the societal impacts and guidance of AI research (AAAI Presidential Panel on Long-Term AI Future, 2009). It assessed concerns and perspectives about disruptive outcomes of superhuman intelligence, explored ethical and legal issues associated with autonomous systems, and identified near-term AI research challenges and opportunities, including enhancing people’s privacy, enhancing human-AI collaboration and interaction, making ML and reasoning transparent to people, and preventing the use of AI for malevolent purposes. Continuing the effort to guide AI research for good, the AI100 2016 report (Stone et al., 2016) observed that AI research is “shifting from simply building systems that are intelligent to building intelligent systems that are human-aware and trustworthy.” The report presents the collective views of a panel of experts on the opportunities and challenges of AI, together with policy recommendations, in eight selected AI application domains affecting people’s lives in a typical North American city in 2030. The panel addressed the challenges of how to build safe and reliable systems; gain public trust concerning safety, security, and privacy; make AI systems behave ethically and overcome bias; and have AI systems interact smoothly with humans.

In the long term, ethical AI concerns the fundamental relations between AI systems and humans. There are concerns about a “technological singularity” or “intelligence explosion,” partially reflected in the dystopias depicted in fiction. Experts in the AI field believe those radical outcomes remain fictional and are not immediate threats; nevertheless, some thought leaders suggest that we “avoid strong assumptions regarding upper limits on future AI capabilities” (Future of Life Institute, 2017). Given AI’s profound impacts on human society, it is necessary to review the purposes, uses, and impacts of AI systems and to create principles and regulations that guide AI research and development so as to avoid harming humans and human society.

The 23 Asilomar principles (Future of Life Institute, 2017) and the EU HLEG ethical AI guide (EU AI HLEG, 2019) reflect a broad range of concerns about ethical issues of AI systems. The EU AI HLEG defined trustworthy AI as having three components: lawful AI, ethical AI, and robust AI. The guide proposed four ethical principles: (1) Respect for human autonomy; (2) Prevention of harm; (3) Fairness; (4) Explicability, covering transparency, auditability, traceability, and explainability. On the basis of these four principles, the guide further proposed a list of seven requirements: (1) Human agency and oversight; (2) Technical robustness and safety, including security, accuracy, reliability, and reproducibility; (3) Privacy and data governance; (4) Transparency, including traceability, explainability, and communication; (5) Diversity, non-discrimination and fairness; (6) Societal and environmental wellbeing; (7) Accountability. The EU guide also discussed technical and non-technical methods for implementing the requirements and proposed a list of questions for assessing the trustworthiness of AI systems.

The ethics of AI studies what is right and wrong regarding the purposes and uses of AI, based on the profound effects AI systems have on humans and human society. The central principle of ethical AI is to use AI to benefit humans and human society. In the upper part of Fig. 5, ethicality is the extent to which an AI system complies with this principle.

As noted in the AI100 2021 report (Littman et al., 2021), “AI systems and humans have complementary strengths;” thus, “combined, they can accomplish more than either alone.” Technically, how to team humans and AI systems effectively remains a challenge. Given the fundamental ethical principles of trustworthy AI (EU AI HLEG, 2019), human-AI teaming is undoubtedly the right direction, not only for maximizing capability and performance but also for ethical reasons.

Cooperability is about the extent to which an AI system facilitates and supports human-machine teaming for complex problem-solving, including the channels or methods to enhance interactions and collaborations between humans and machines.

Cooperability covers controllability; the latter is the ability of an AI system to allow humans to monitor and control it.

Fairness is about whether an AI system treats people of different groups fairly with regard to race, gender, age, cultural background, and other attributes. Fairness has received much attention in recent years as AI systems have started to be used in life-changing scenarios, such as hiring decisions, financial credit evaluation, and judicial decisions (Mehrabi et al., 2021). Fairness is a highly challenging topic for complex societal reasons. Technically, fairness can be treated as the absence of bias. Recent deep learning progress, as reflected by GANs, can be used to create realistic samples that balance the data used in ML.
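To make the idea of treating fairness as the absence of bias concrete, the following minimal Python sketch computes a demographic parity gap: the difference between the highest and lowest rates of positive decisions across groups. The choice of this particular metric, the function names, and the toy hiring data are illustrative assumptions and do not come from the cited work; other fairness metrics may be more appropriate in a given application.

```python
from collections import defaultdict


def selection_rates(decisions, groups):
    """Return the fraction of positive decisions (1) for each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for decision, group in zip(decisions, groups):
        totals[group] += 1
        positives[group] += int(decision)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_gap(decisions, groups):
    """Largest difference in selection rate between any two groups.

    A gap of 0.0 means all groups receive positive decisions at the same rate.
    """
    rates = selection_rates(decisions, groups)
    return max(rates.values()) - min(rates.values())


# Hypothetical hiring decisions (1 = hired) for two illustrative groups.
decisions = [1, 0, 1, 1, 0, 0, 1, 0]
groups = ["a", "a", "a", "a", "b", "b", "b", "b"]

rates = selection_rates(decisions, groups)
gap = demographic_parity_gap(decisions, groups)
print(rates, gap)
```

A large gap signals that the decisions, or the data they were learned from, may be unbalanced across groups; it does not by itself establish the cause.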

Accountability is the availability and integrity of the identity of an entity that performed an operation in the AI system of concern. Put simply, it is about who (human operators or autonomous components in an AI system) did what and when, and who bears the responsibility for it. In the event of a security incident, an accident, an error, or a system failure, accountability helps to identify the causes, make responsibility clear, and avoid future mistakes.
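One possible way to keep a "who did what and when" record whose integrity can be checked is a hash-chained log, sketched below in Python. This is an illustrative assumption rather than a method proposed in the text: the record fields, actor names, and helper functions are hypothetical, and a real system would also need authenticated identities and protected storage.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder hash that precedes the first record


def _digest(body):
    """SHA-256 over a canonical JSON encoding of the record body."""
    encoded = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(encoded).hexdigest()


def append_record(log, actor, action, timestamp):
    """Append a record that is linked to the hash of the previous record."""
    prev_hash = log[-1]["hash"] if log else GENESIS
    body = {"actor": actor, "action": action,
            "timestamp": timestamp, "prev": prev_hash}
    log.append({**body, "hash": _digest(body)})


def verify(log):
    """Return True if no record has been altered, removed, or reordered."""
    prev_hash = GENESIS
    for record in log:
        body = {k: record[k] for k in ("actor", "action", "timestamp", "prev")}
        if record["prev"] != prev_hash or record["hash"] != _digest(body):
            return False
        prev_hash = record["hash"]
    return True


# Hypothetical entries: one human operator and one autonomous component.
log = []
append_record(log, "operator:alice", "approved model v2", "2024-01-01T10:00:00Z")
append_record(log, "component:planner", "issued stop command", "2024-01-01T10:05:00Z")
print(verify(log))
```

Because each record includes the hash of its predecessor, changing the actor or action in an earlier record makes verification fail for that record and breaks the chain for every later one.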

Transparency is the extent to which the operation of an AI system is visible to its various stakeholders, such as operators, business partners, auditors, and users. Transparency is a basis for ensuring ethicality, fairness, and privacy, and for facilitating controllability and cooperability.

Explainability is the ability to explain the outcomes and processes of an AI system to humans. The major challenge is that, in ML, neural networks use low-level encodings for feature representation, which are inherently hard to explain in terms of high-level knowledge. The very large number of parameters and the complex structures of deep neural networks make interpretation harder still.

Traceability is about the ability to collect and document the provenance of the data used and produced, the models used and trained, and the operating processes of an AI system. Traceability is the basis for transparency and supports explainability.

Reproducibility is about whether a model instance can be rebuilt with the same AI algorithm and data and whether an experiment or, more generally, a process running with an AI model can be reproduced. Reproducibility is essential to science and engineering.
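As a small illustration of rebuilding a model instance from the same algorithm and data, the Python sketch below fixes the source of randomness with an explicit seed. The toy training routine, the data, and the seed value are hypothetical stand-ins; in practice, reproducibility also depends on factors such as library versions and hardware that this sketch does not cover.

```python
import random


def train_toy_model(data, seed):
    """Fit y = w * x by stochastic gradient descent with a seeded RNG.

    Using a local random.Random(seed) keeps the run independent of any
    global random state, so the same seed and data give the same weight.
    """
    rng = random.Random(seed)
    w = rng.uniform(-1.0, 1.0)              # random initial weight
    for _ in range(200):
        x, y = rng.choice(data)             # randomly sampled training example
        w -= 0.01 * 2.0 * (w * x - y) * x   # gradient step on squared error
    return w


# Hypothetical training data generated by y = 2x.
data = [(1.0, 2.0), (2.0, 4.0), (3.0, 6.0)]

w_first = train_toy_model(data, seed=42)
w_second = train_toy_model(data, seed=42)
print(w_first == w_second)
```

Recording the seed alongside the data and algorithm version is also one of the pieces of provenance that traceability is meant to capture.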

Reliable AI reflects people’s concern about the technical performance of systems as more and more AI systems are used in, or embedded in, large engineering systems in the 4IR. Naturally, this concern brings attention partially back to the more classical trustworthiness properties of engineering systems, but with a focus on AI systems or AI components and their impact on the larger systems. This article uses the term “dependability” to cover all expected trustworthiness properties related to technical performance. The concept of dependability here is broader than the one defined in (Avizienis et al., 2004).

Reliability is the probability of a system functioning without failure for a given period of time.
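This definition can be written as a survival function. As a sketch, let $T$ denote the time to failure with cumulative distribution function $F$; the constant failure rate $\lambda$ used in the second expression is an illustrative assumption, not something stated in the text:

$$
R(t) = P(T > t) = 1 - F(t), \qquad R(t) = e^{-\lambda t} \quad \text{if the failure rate } \lambda \text{ is constant.}
$$

For example, with a hypothetical $\lambda = 10^{-4}$ failures per hour, the probability of operating for $t = 1000$ hours without failure is $R(1000) = e^{-0.1} \approx 0.905$.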

Maintainability is about how easily a system can be kept healthy, updated, and upgraded.

Resilience is the ability of a system to restore itself to a working state after it has been damaged in situations such as natural disasters or cyber-attacks.

Safety is about how safe a system is for humans. The broad use of AI components in engineering systems makes safety a significant concern.

Accuracy is the measure of errors made by a model or system; in the context of ML, it is critical to have high accuracy on new data beyond the data used for training the model.

In Systems Engineering, robustness is defined as “the degree to which a system or component can function correctly in the presence of invalid inputs or stressful environmental conditions” (ISO/IEC/IEEE, 2010). In ML, robustness is about the stability of outcomes in the presence of perturbations in the inputs, and it could be measured with sensitivity. In deep learning, a lack of robustness is a critical cause of possible deepfakes produced by GANs. The general meaning of robustness is similar to that of resilience, except that in robustness the perturbations are on a small scale.

Usability is how easy a system is to use, and it goes beyond human-machine interaction. Poor usability can lead to failures in many other properties of modern systems, including security and safety.
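The sensitivity-based view of robustness in ML mentioned above can be illustrated with a short Python sketch that estimates how much a model's output changes per unit of small input perturbation. The sampling scheme, the parameter values, and the two toy models are hypothetical choices made for illustration; they are not a standard benchmark or a method taken from the cited standard.

```python
import random


def sensitivity(model, x, epsilon=0.01, trials=100, seed=0):
    """Estimate the largest output change per unit of input perturbation.

    Draws random perturbations with each component in [-epsilon, epsilon]
    and returns the maximum of |f(x + d) - f(x)| / max_i |d_i| over the trials.
    """
    rng = random.Random(seed)
    base = model(x)
    worst = 0.0
    for _ in range(trials):
        delta = [rng.uniform(-epsilon, epsilon) for _ in x]
        size = max(abs(d) for d in delta)
        if size == 0.0:
            continue  # skip the (very unlikely) zero perturbation
        perturbed = [xi + di for xi, di in zip(x, delta)]
        worst = max(worst, abs(model(perturbed) - base) / size)
    return worst


def smooth_model(x):
    """Hypothetical model whose output varies gently with its inputs."""
    return 0.5 * x[0] + 0.5 * x[1]


def brittle_model(x):
    """Hypothetical model with a hard decision boundary at x[0] = 1.0."""
    return 1.0 if x[0] > 1.0 else 0.0


point = [1.0, 0.0]  # an input lying exactly on the brittle model's boundary
smooth_score = sensitivity(smooth_model, point)
brittle_score = sensitivity(brittle_model, point)
print(smooth_score, brittle_score)
```

In this sketch the smooth model's score stays at or below 1, while the brittle model's score is at least 100 because a perturbation of at most 0.01 flips its output from 0 to 1.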

Security is commonly defined in terms of the well-known CIA triad: confidentiality (prevention of unauthorized access to protected resources or disclosure of protected information), integrity (absence of unauthorized alterations), and availability (readiness to provide correct services to authorized users). Other security properties can be defined on top of the CIA triad. For example, authenticity is the integrity of information content and of its provenance (origin). In a digital and connected environment, failures in security have broad impacts and can compromise other trustworthiness properties.

Privacy is another major concern in AI. Since data is the fuel for AI systems, privacy concerns whether the gathering, holding, processing, usage, sharing, and governance of data respect people’s privacy.

As illustrated in Fig. 5, the trustworthiness of AI systems is the new collection of concerns that arises as systems evolve into Industry 4.0 systems. Interestingly, the digitalization of engineering artifacts, processes, and enterprises in the 4IR could in turn support achieving the expected trustworthiness properties of AI systems.

The digital engineering transformation is an excellent opportunity for the engineering design community at large to bring the new capabilities of AI together with the principles of trustworthy AI in the design of various engineering systems, so that human society can leverage the power of AI while avoiding or minimizing its potential negative impacts.